It is easy to forget how much our devices do for us until a smart assistant dims the lights, adjusts the thermostat, and reminds us to drink water, all on its own. That seamless experience is more than a convenience; it is a glimpse into the growing world of agentic AI.

Whether it is a self-driving car navigating rush hour or a warehouse robot dodging obstacles while organizing inventory, agentic AI is quietly revolutionizing how things get done. It is moving us beyond automation into a world where machines can think, plan, and act more like humans, only faster and with fewer coffee breaks.

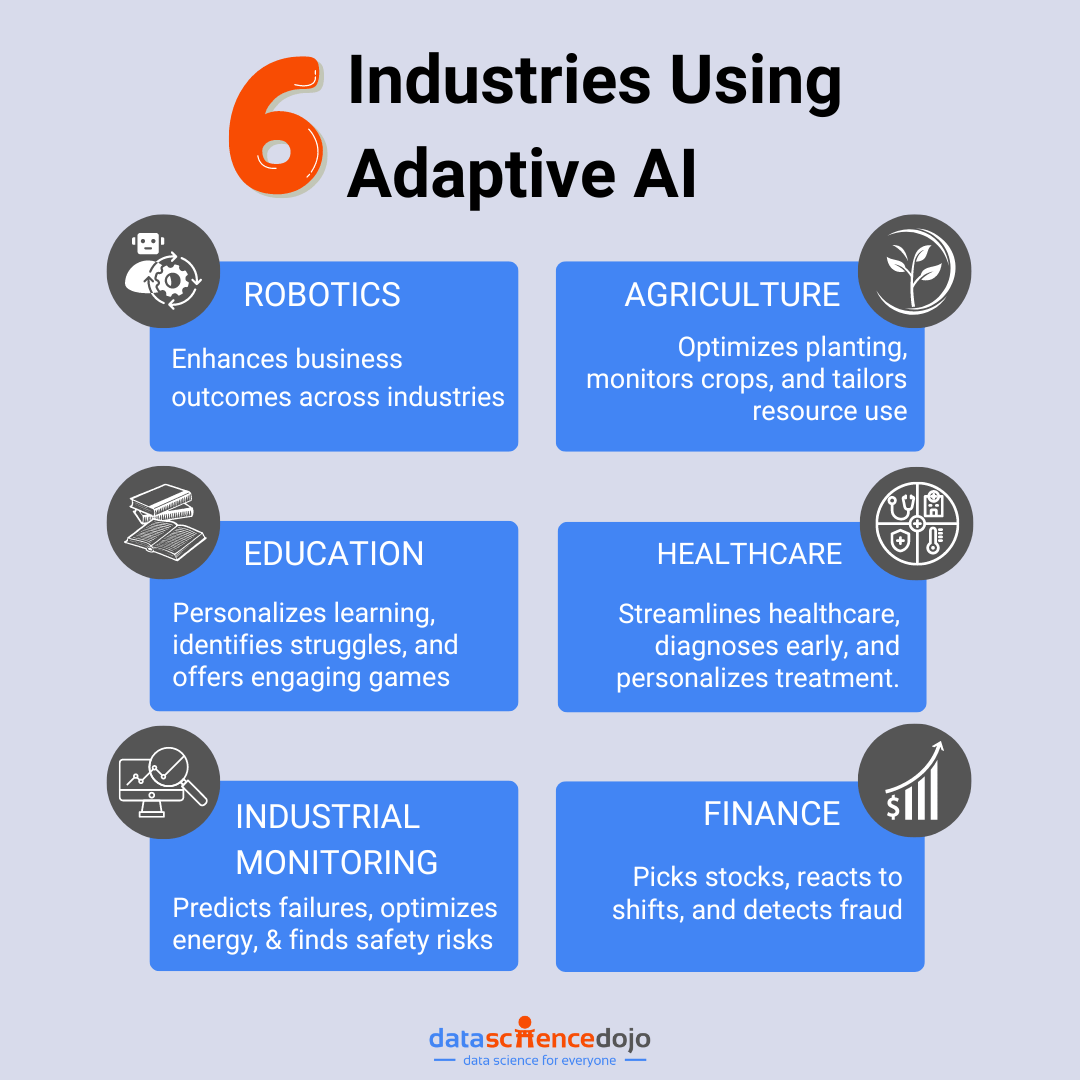

In today’s fast-moving tech world, understanding agentic AI is not just for the experts. It is already shaping industries like healthcare, finance, logistics, and beyond. In this blog, we will break down what agentic AI is, how it works, where it’s being used, and what it means for the future. Ready to explore more? Let’s dive in.

What is Agentic AI?

Agentic AI is a type of artificial intelligence (AI) that does not just follow rules but acts like an intelligent agent. These systems are designed to make their own decisions, set and pursue goals, and adapt to changes in real time. In short, they are built to chase goals, solve problems, and interact with their environment with minimal human input.

So, what makes agentic AI different from traditional AI?

Traditional (or narrow) AI usually refers to systems that perform specific tasks well, like answering questions, recommending content, or recognizing images. These systems are mostly reactive: they respond based on what they have been programmed or trained to do. While powerful, they typically rely on human instructions for every step.

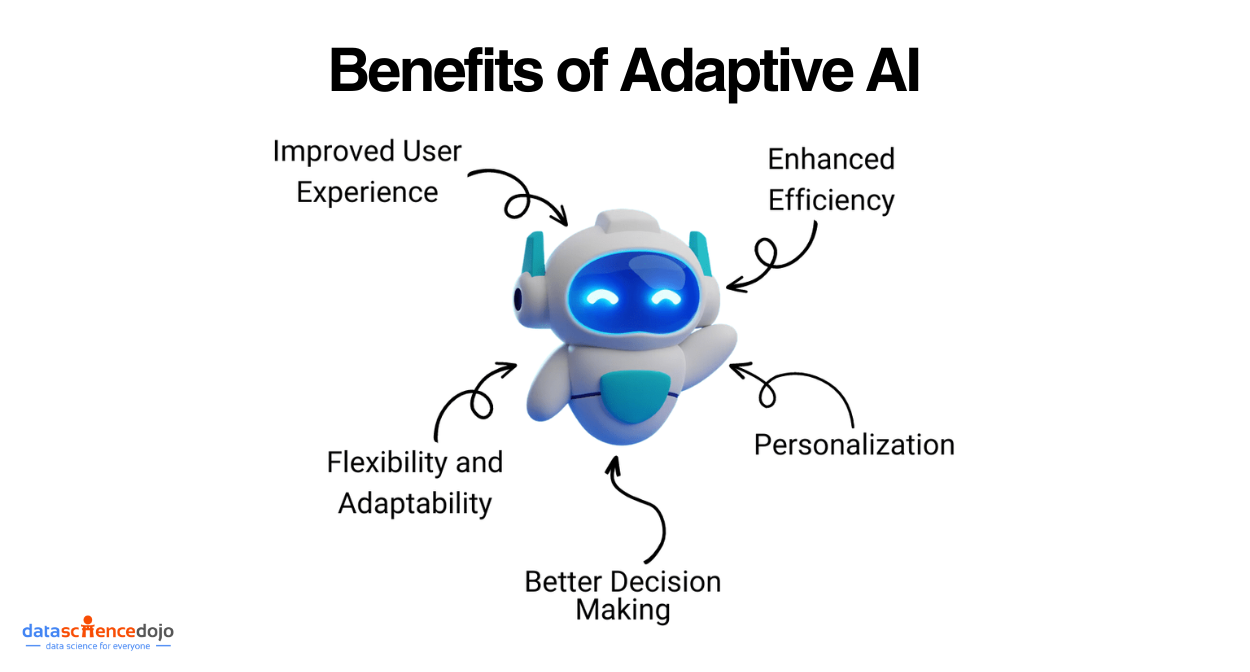

Agentic AI, on the other hand, is built to act autonomously. This means it can make decisions without needing constant human direction. It can explore, learn from outcomes, and improve its performance over time. It does not just follow commands, but figures out how to reach a goal and adapts if things change along the way.

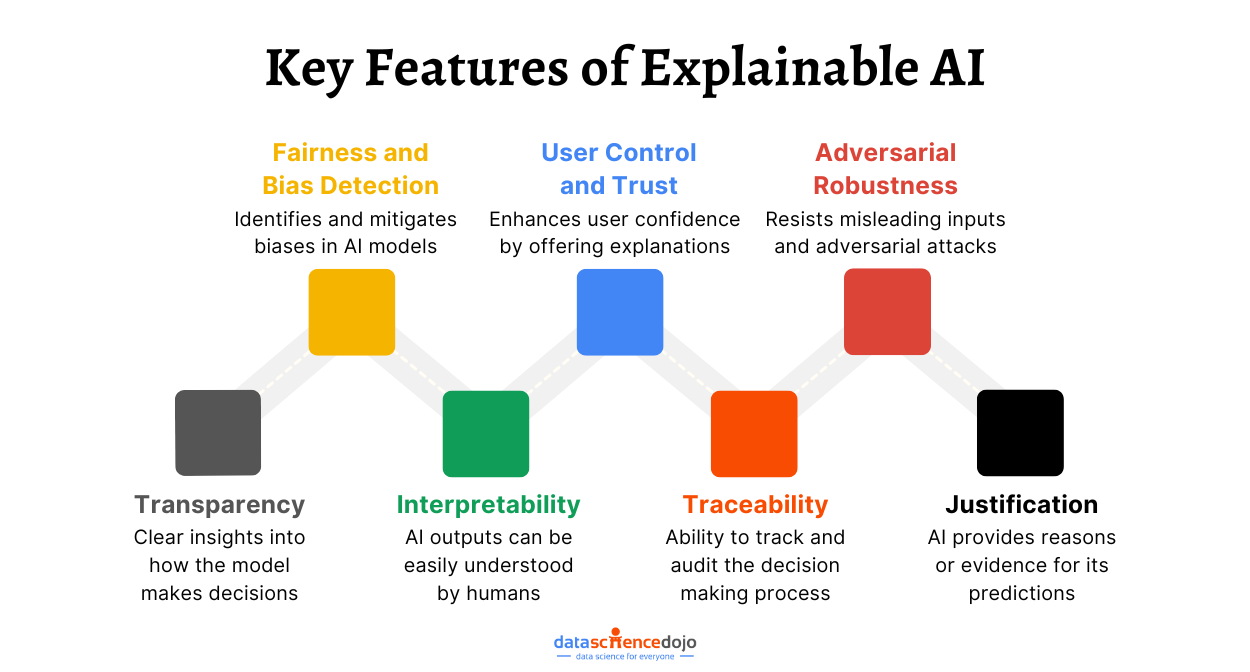

Key Characteristics of Agentic AI

Here are some of the core features that define agentic AI:

- Autonomy – Agentic AI can operate independently. Once given a goal, it decides what steps to take without relying on human input at every turn.

- Goal-Oriented Behavior – These systems are built to achieve specific outcomes. Whether it is automating email replies or optimizing a process, agentic AI keeps its focus on the end goal.

- Learning and Adaptation – Through experience and feedback, the agent learns what works and what does not. Over time, it adjusts its actions to perform better in changing conditions.

- Interactivity – Agentic AI interacts with its environment, and sometimes with other agents. It takes in data, makes sense of it, and uses that information to plan its next move.

Hence, agentic AI represents a shift from passive machine intelligence to proactive, adaptive systems. It is about creating AI that does not just execute instructions but thinks, learns, and acts on its own.

Why Do We Need Agentic AI?

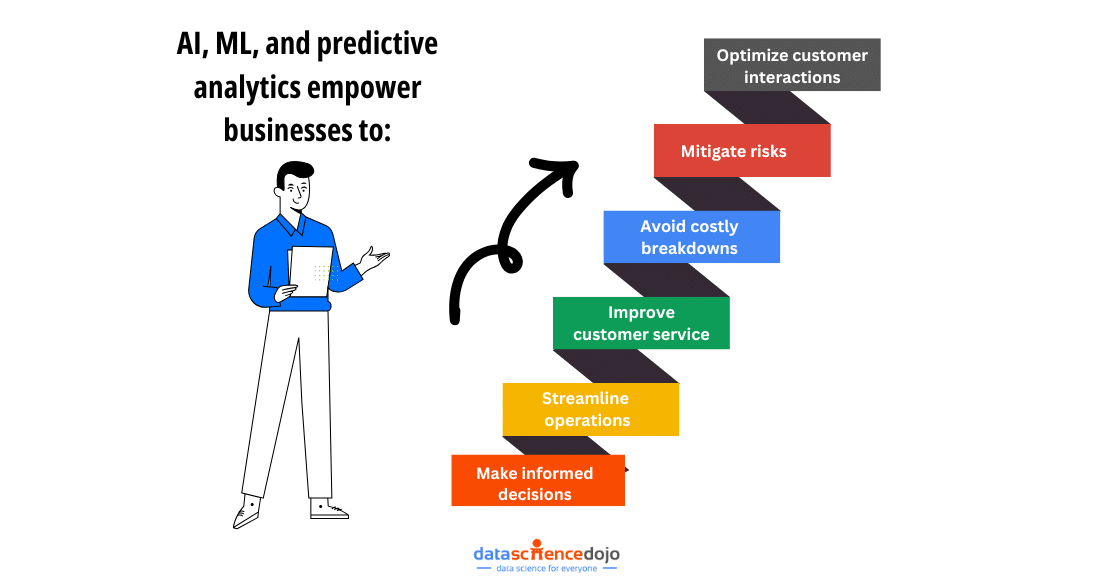

As industries grow more complex and fast-paced, the demand for intelligent systems that can think, decide, and act independently is rising. Let’s explore why agentic AI matters and how it’s helping businesses and organizations operate smarter and safer.

1. Automation of Complex Tasks

Some tasks, such as autonomous driving, warehouse robotics, or financial strategy planning, are too complicated or too dynamic for traditional automation. In these situations, conditions are always changing, and quick decisions are needed.

Agentic AI can handle this kind of complexity as it can make split-second choices, adjust its behavior in real time, and learn from new situations. For enterprises, this means less need for constant human monitoring and faster responses to changing scenarios, saving both time and resources.

2. Scalability Across Industries

As businesses grow, so does the challenge of scaling operations. Hiring more people is not always practical or cost-effective, especially in areas like logistics, healthcare, and customer service. Agentic AI provides a scalable solution.

Once trained, AI agents can operate across multiple systems or locations simultaneously. For example, a single AI agent could monitor thousands of network endpoints or manage customer service chats around the world. This can sharply reduce the need for additional staff and increase productivity without sacrificing quality.

3. Efficiency and Accuracy

Humans are great at creative thinking but not always at repetitive, detail-heavy tasks. However, agentic AI can process large amounts of data quickly and act with high precision, reducing errors that might happen due to fatigue or oversight.

In industries like manufacturing or healthcare, even small mistakes can be costly. Agentic AI brings consistency and speed, helping businesses deliver better results, faster, and at scale.

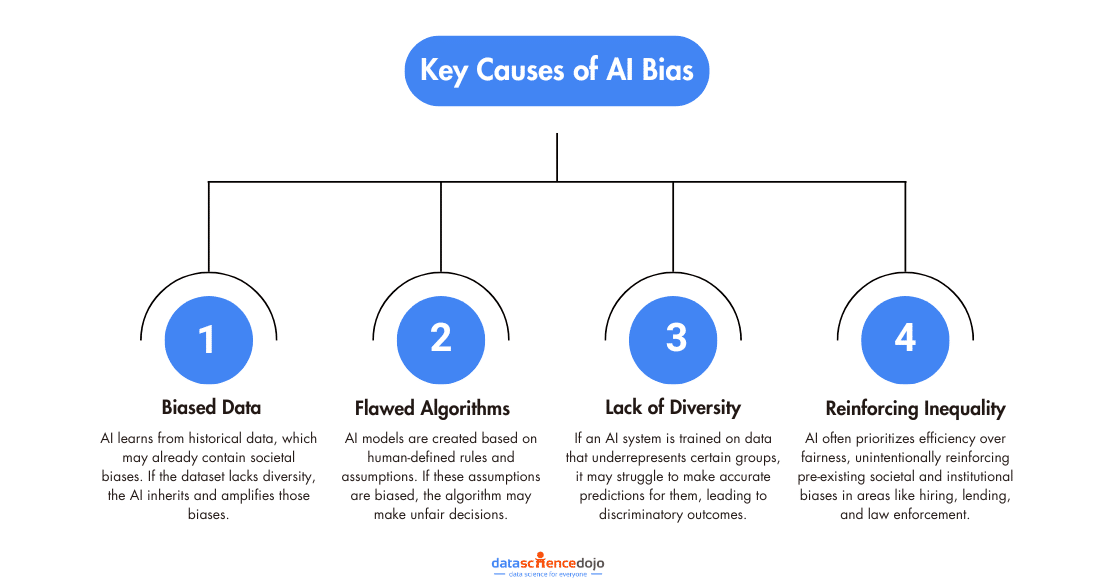

4. Reducing Human Error and Bias

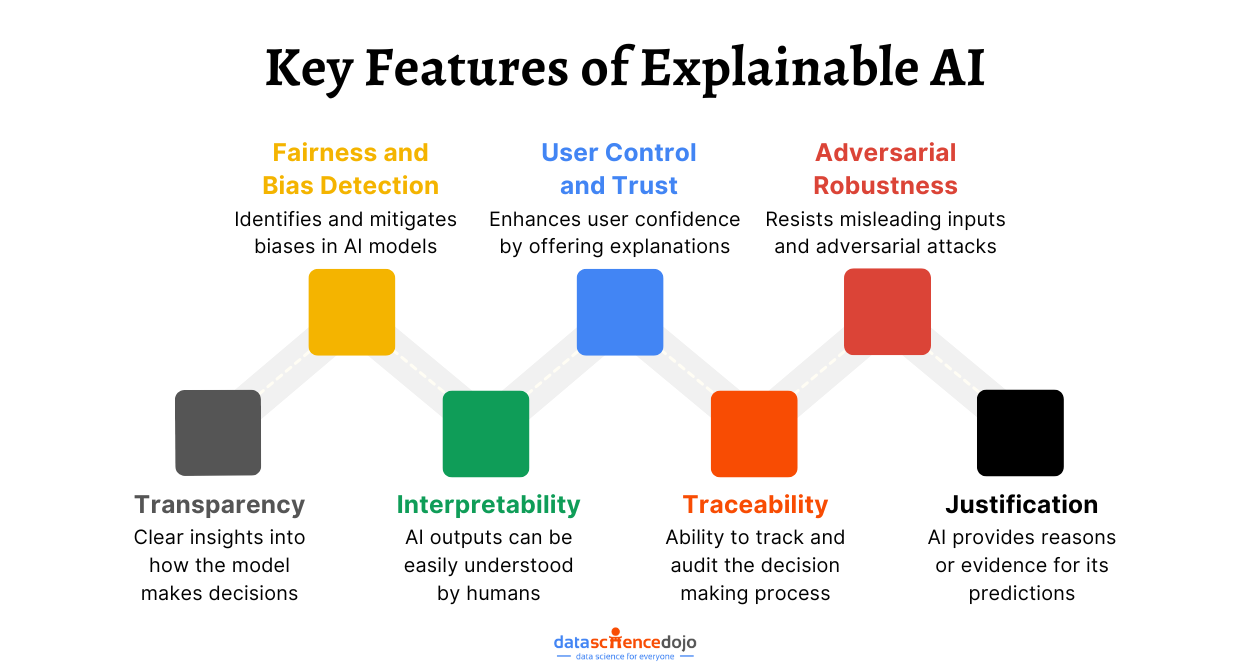

Unconscious bias can sneak into human decisions, whether it’s in hiring, lending, or law enforcement. While AI isn’t inherently unbiased, agentic AI can be trained and monitored to operate with fairness and transparency.

By basing decisions on data and algorithms rather than gut feelings, businesses can reduce the influence of bias in critical systems. That’s especially important for organizations looking to promote fairness, comply with regulations, and build trust with customers.

5. 24/7 Operations

Unlike humans, agentic AI does not need sleep, breaks, or time off. It can work around the clock, making it ideal for mission-critical systems that need constant oversight, like cybersecurity, infrastructure monitoring, or global customer support.

Enterprises benefit hugely from this 24/7 operations capability. It means faster responses, less downtime, and more consistent service without adding shifts or extra personnel.

6. Risk Reduction in Dangerous Environments

Some environments are too risky for people. Whether exploring the deep sea, handling toxic chemicals, or responding to natural disasters, agentic AI can take over where human safety is at risk.

For companies operating in high-risk industries like mining, oil & gas, or emergency services, agentic AI offers a safer and more reliable alternative. It protects human lives and ensures that critical tasks continue even in the toughest conditions.

Thus, agentic AI is a strategic advantage that helps organizations become more resilient and responsive. By taking on the tasks that are too complex, repetitive, or risky for humans, agentic systems are becoming essential tools in the modern enterprise toolkit.

Agentic Frameworks: The Backbone of Smarter AI Agents

As we move toward more autonomous, goal-driven AI systems, agentic frameworks are becoming essential. These frameworks are the building blocks that help developers create, manage, and coordinate intelligent agents that can plan, reason, and act with little to no human input.

Some key features of agentic frameworks include:

- Autonomy: Agents can operate independently, choosing their next move based on goals and context.

- Tool Integration: Many frameworks let agents use APIs, databases, search engines, or other services to complete tasks.

- Memory & State: Agents can remember previous steps, conversations, or actions – crucial for long-term tasks.

- Reasoning & Planning: They can decide how to best tackle a goal, often using logical steps or pre-built workflows.

- Multi-Agent Collaboration: Some frameworks allow teams of agents to work together, each playing a different role.
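To make these features concrete, here is a framework-free Python sketch of a tiny agent loop with tool integration, memory, and a (deliberately simple) plan. The tools `get_weather` and `suggest_clothing`, their fake data, and the hard-coded plan are hypothetical stand-ins; a real framework would typically let an LLM choose the next tool and would call real APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# Hypothetical tools; in a real framework these would wrap APIs, databases, or search.
def get_weather(city: str) -> str:
    fake_data = {"Paris": "rainy", "Cairo": "sunny"}
    return fake_data.get(city, "unknown")


def suggest_clothing(weather: str) -> str:
    return {"rainy": "umbrella", "sunny": "sunglasses"}.get(weather, "jacket")


TOOLS: Dict[str, Callable[[str], str]] = {
    "get_weather": get_weather,
    "suggest_clothing": suggest_clothing,
}


@dataclass
class Agent:
    tools: Dict[str, Callable[[str], str]]
    # Memory: (tool name, input, output) for every step taken so far.
    memory: List[Tuple[str, str, str]] = field(default_factory=list)

    def plan(self, goal: str) -> List[str]:
        # A fixed plan stands in for LLM-based reasoning; `goal` is ignored here.
        return ["get_weather", "suggest_clothing"]

    def run(self, city: str) -> str:
        current = city
        for tool_name in self.plan(f"dress for the weather in {city}"):
            result = self.tools[tool_name](current)
            self.memory.append((tool_name, current, result))  # remember each step
            current = result  # the output of one step feeds the next
        return current


agent = Agent(TOOLS)
print(agent.run("Paris"))  # umbrella
print(agent.memory)
```

Keeping the plan separate from `run` mirrors how frameworks usually split "decide what to do" from "do it", which makes it easier to swap in a smarter planner later.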

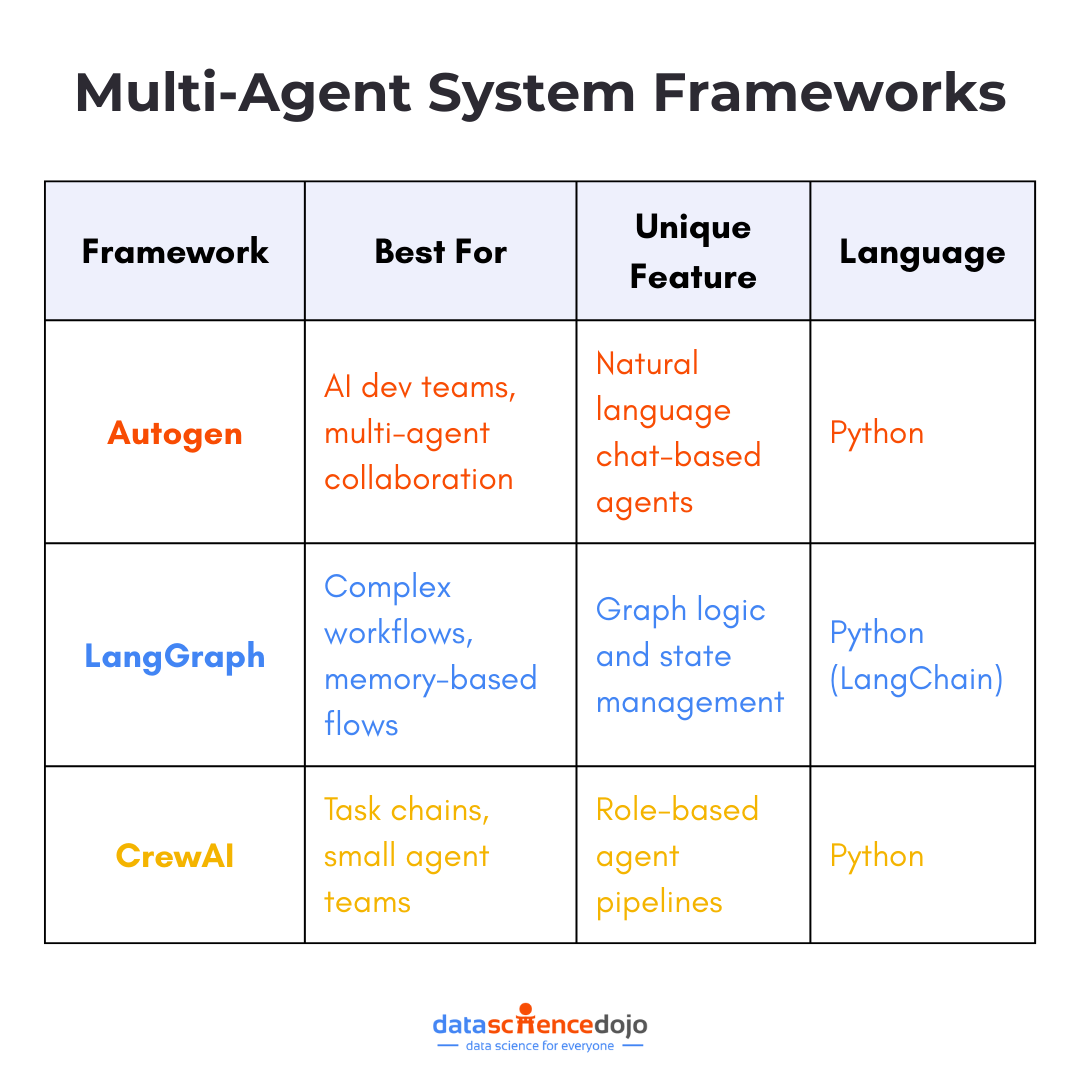

Let’s take a quick tour of some popular agentic frameworks being used:

AutoGen (by Microsoft)

AutoGen is a powerful framework developed by Microsoft that focuses on multi-agent collaboration. It allows developers to easily create and manage systems where multiple AI agents can communicate, share information, and delegate tasks to each other.

These agents can be configured with specific roles and behaviors, enabling dynamic workflows. AutoGen makes the coordination between these agents seamless, using dialogue loops and tool integrations to keep things on track. It’s especially useful for building autonomous systems that need to complete complex, multi-step tasks efficiently.
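AutoGen's own API is not shown here. As a rough, framework-free illustration of the idea, this Python sketch runs a dialogue loop between two role-based agents, a hypothetical writer and critic, until a termination message appears or a turn limit is reached. The roles, messages, and the `APPROVE` stop word are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ChatAgent:
    name: str
    respond: Callable[[str], str]  # role-specific behavior


def writer(message: str) -> str:
    # Hypothetical "writer" role: produces a draft, or revises it when asked.
    if message.startswith("REVISE"):
        return "DRAFT v2: Agentic AI plans, acts, and learns."
    return "DRAFT v1: Agentic AI acts."


def critic(message: str) -> str:
    # Hypothetical "critic" role: approves only drafts that mention learning.
    return "APPROVE" if "learns" in message else "REVISE: mention learning"


def run_dialogue(a: ChatAgent, b: ChatAgent, task: str, max_turns: int = 6) -> List[Tuple[str, str]]:
    transcript: List[Tuple[str, str]] = []
    message, speaker, listener = task, a, b
    for _ in range(max_turns):
        reply = speaker.respond(message)
        transcript.append((speaker.name, reply))
        if reply == "APPROVE":  # termination condition ends the loop
            break
        # Hand the reply to the other agent and swap roles for the next turn.
        message, speaker, listener = reply, listener, speaker
    return transcript


transcript = run_dialogue(ChatAgent("writer", writer), ChatAgent("critic", critic),
                          "Write a one-line summary of agentic AI")
for name, text in transcript:
    print(f"{name}: {text}")
```

The `max_turns` cap matters in practice: without a turn limit, two agents that never agree could loop forever.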

LangGraph

LangGraph allows you to build agent workflows using a graph-based architecture. Each node is a decision point or a task, and the edges define how data and control flow between them. This structure allows you to build custom agent paths while maintaining a clear and manageable logic.

It is ideal for scenarios where agents need to follow a structured process with some flexibility to adapt based on inputs or outcomes. For example, if you’re building a support system, one branch of the graph might handle technical issues, while another might escalate billing concerns. This brings clarity, control, and customizability to agent workflows.
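The support example above can be sketched without any library. This Python snippet is not LangGraph's API; it only mirrors the idea of nodes that update a shared state and a conditional edge that picks the next node. The node names and the keyword-based classifier are hypothetical.

```python
from typing import Callable, Dict, Optional

State = Dict[str, str]


def classify(state: State) -> State:
    # Naive keyword routing stands in for an LLM or trained classifier.
    text = state["message"].lower()
    is_billing = any(word in text for word in ("invoice", "refund", "charge"))
    return {**state, "category": "billing" if is_billing else "technical"}


def handle_technical(state: State) -> State:
    return {**state, "reply": "Routing you to troubleshooting steps."}


def escalate_billing(state: State) -> State:
    return {**state, "reply": "Escalating to the billing team."}


NODES: Dict[str, Callable[[State], State]] = {
    "classify": classify,
    "technical": handle_technical,
    "billing": escalate_billing,
}


def next_node(current: str, state: State) -> Optional[str]:
    # Edges: a conditional edge leaves "classify"; both branches end the run.
    if current == "classify":
        return state["category"]
    return None


def run_graph(message: str, entry: str = "classify") -> State:
    state: State = {"message": message}
    node: Optional[str] = entry
    while node is not None:
        state = NODES[node](state)
        node = next_node(node, state)
    return state


print(run_graph("I was charged twice")["reply"])
print(run_graph("The app crashes on login")["reply"])
```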

CrewAI

CrewAI allows you to build a “crew” of AI agents, each with defined roles, goals, and responsibilities. One agent might act as a project manager, another as a developer, and another as a marketer. The magic of CrewAI lies in how these agents collaborate, communicate, and coordinate to achieve shared objectives.

It stands out due to its role-based reasoning system, where each agent has a clear purpose and autonomy to perform their part. This makes it perfect for building collaborative agent systems for content generation, research workflows, or even code development. It is a great way to simulate real-world team dynamics, but with AI.
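Here is a minimal, framework-free sketch of the crew idea in Python: each member has a role and a goal, and work is handed from one member to the next. This is not CrewAI's API, and the roles and canned outputs are placeholders; in a real crew, each member would usually be backed by an LLM.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class CrewMember:
    role: str
    goal: str
    work: Callable[[str], str]  # takes the previous member's output


# Hypothetical task functions with canned outputs.
def research_task(_brief: str) -> str:
    return "facts: agents plan, act, learn"


def write_task(notes: str) -> str:
    return f"Article based on [{notes}]"


def edit_task(draft: str) -> str:
    return draft.replace("Article", "Edited article", 1)


def run_crew(crew: List[CrewMember], brief: str) -> str:
    output = brief
    for member in crew:
        output = member.work(output)  # each role builds on the previous result
        print(f"{member.role} ({member.goal}): {output}")
    return output


crew = [
    CrewMember("Researcher", "gather facts", research_task),
    CrewMember("Writer", "draft the article", write_task),
    CrewMember("Editor", "polish the draft", edit_task),
]
final = run_crew(crew, "Explain agentic AI")
```

This shows a simple sequential hand-off; frameworks of this kind often support other coordination styles as well, such as a manager agent delegating tasks.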

Thus, if you are looking to build your own AI agent, agentic frameworks are where you want to start. Each of these tools helps make agentic AI smarter, safer, and more capable, and the right framework can make the difference between a basic bot and a truly intelligent agent.

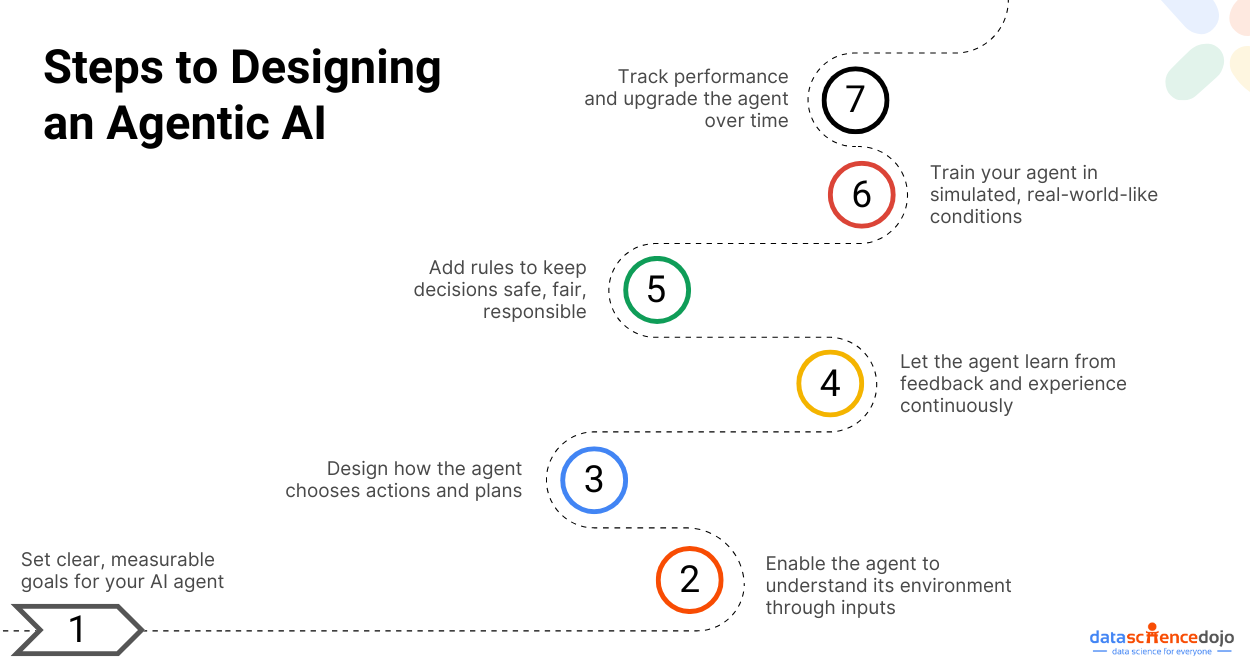

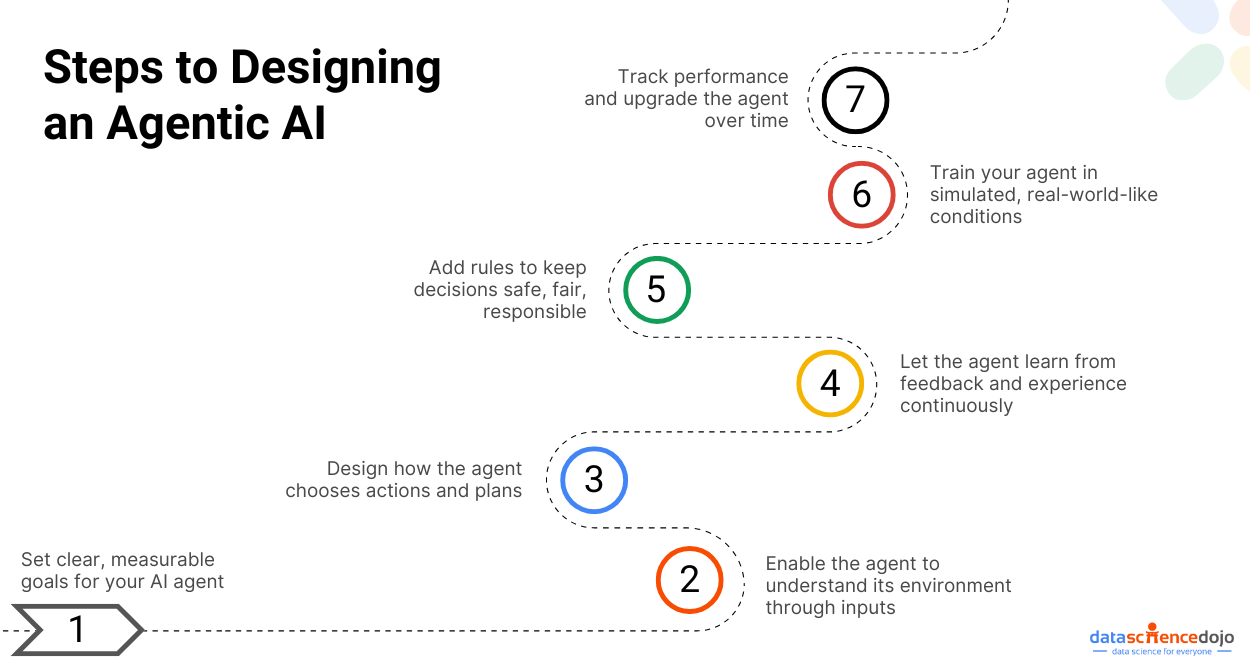

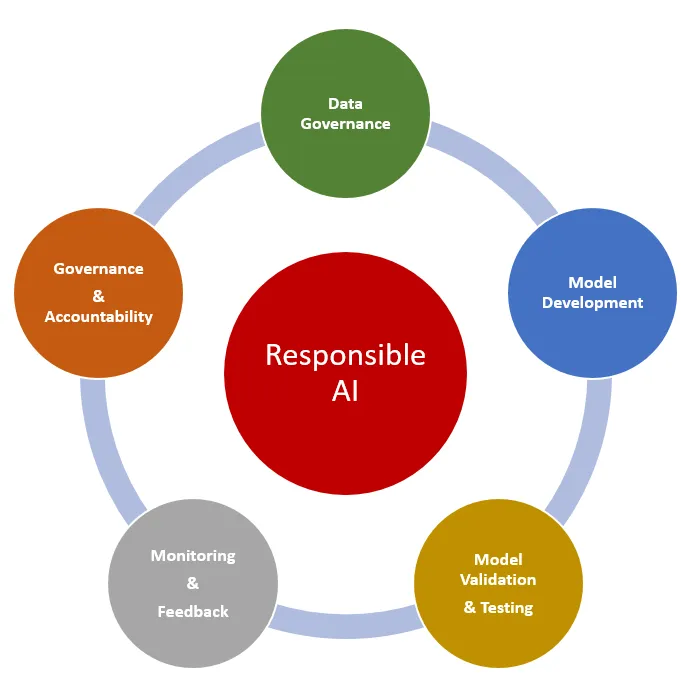

Steps to Design an Agentic AI

Designing an Agentic AI is like building a smart, independent worker that can think for itself, adapt, and act without constant instructions. However, the process is more complex than writing a few lines of code.

Below are the key steps you need to follow to design an agentic system:

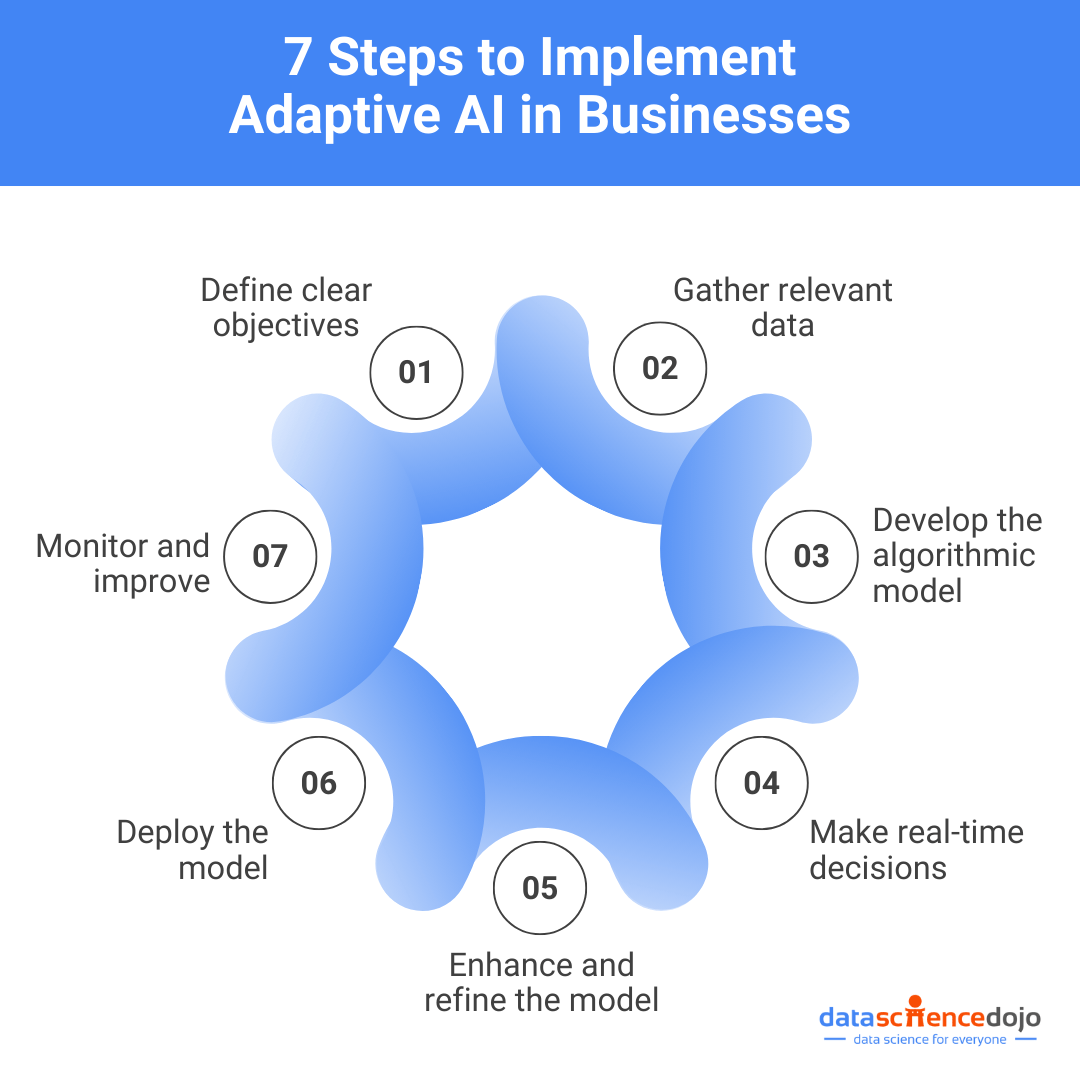

Step 1: Define the Agent’s Purpose and Goals

The process starts with a simple question: What is your agent supposed to do? It could be about navigating a delivery drone through traffic, managing customer queries, or optimizing warehouse operations. Whatever the task, you need to be clear about the outcome you’re aiming for.

When defining goals, make sure they are specific and measurable, like reducing delivery time by 20% or increasing customer response accuracy to 95%. Well-defined goals keep your agent focused and help you evaluate how well it performs over time.
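One way to keep goals measurable is to encode them as explicit targets the agent can be checked against. This Python sketch assumes a hypothetical 50-minute baseline delivery time; the `Goal` class and the observed numbers are illustrative, not part of any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Goal:
    name: str
    target: float
    higher_is_better: bool

    def is_met(self, observed: float) -> bool:
        # Compare against the target in the direction that counts as improvement.
        return observed >= self.target if self.higher_is_better else observed <= self.target


baseline_delivery_minutes = 50.0  # hypothetical current average
goals = [
    Goal("avg_delivery_minutes", target=baseline_delivery_minutes * 0.8, higher_is_better=False),  # 20% reduction
    Goal("response_accuracy", target=0.95, higher_is_better=True),
]

observed = {"avg_delivery_minutes": 42.0, "response_accuracy": 0.96}  # made-up measurements
for goal in goals:
    status = "met" if goal.is_met(observed[goal.name]) else "not met"
    print(f"{goal.name}: {status}")
```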

Step 2: Develop the Perception System

Next, your agent needs a way to see and understand its environment. Depending on the use case, this could involve input from cameras, sensors, microphones, or live data streams like weather updates or stock prices.

However, raw data is not helpful on its own. The agent needs to process and extract meaningful features from it. This might mean identifying objects in an image, picking out keywords from audio, or interpreting sensor readings. This layer of perception is the foundation for everything the agent does next.
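As a small illustration of turning raw readings into features, the sketch below smooths noisy distance readings with a moving average and derives two features an agent could act on. The sensor, the window size, and the 30 cm threshold are assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class Percept:
    smoothed_cm: float   # noise-reduced distance estimate
    approaching: bool    # is the obstacle getting closer?
    obstacle_near: bool  # is it within the safety threshold?


def perceive(readings_cm: List[float], window: int = 3, threshold_cm: float = 30.0) -> Percept:
    if len(readings_cm) < window + 1:
        raise ValueError("need at least window + 1 readings")
    recent = mean(readings_cm[-window:])          # average of the latest readings
    previous = mean(readings_cm[-window - 1:-1])  # same window, shifted one step back
    return Percept(
        smoothed_cm=recent,
        approaching=recent < previous,
        obstacle_near=recent < threshold_cm,
    )


print(perceive([80, 62, 45, 31, 24]))
```

Averaging over a window trades a little responsiveness for stability; a real perception layer might use a Kalman filter or a learned model instead.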

Step 3: Build the Decision-Making Framework

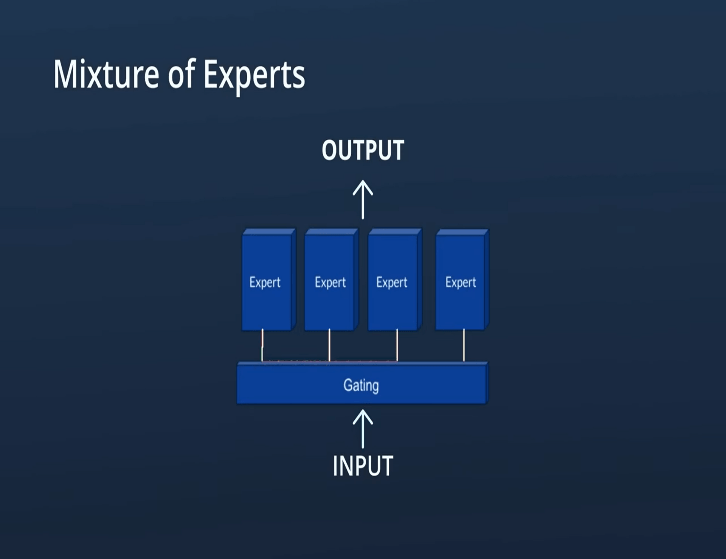

Now is the time for the agent to think for itself. You will need to implement algorithms that let it choose actions on its own. Reinforcement Learning (RL) is a popular choice because it mimics how humans learn: by trial and error.

Planning methods like POMDPs (Partially Observable Markov Decision Processes) or Hierarchical Task Networks (HTNs) can also help the agent make smart choices, especially when the environment is complex or unpredictable.

You must also ensure a balance between exploration (trying new things) and exploitation (sticking with what works). Too much of either can hold the agent back.
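The balance between exploration and exploitation is often illustrated with an epsilon-greedy strategy on a multi-armed bandit. In this Python sketch, the agent picks a random action with probability `epsilon` and otherwise exploits its best current estimate. The reward means are made up, and this is one simple strategy among many, not a recommendation for every setting.

```python
import random
from typing import List


def epsilon_greedy(estimates: List[float], epsilon: float, rng: random.Random) -> int:
    """Explore a random action with probability epsilon, otherwise exploit the best estimate."""
    if rng.random() < epsilon:
        return rng.randrange(len(estimates))                       # explore
    return max(range(len(estimates)), key=lambda a: estimates[a])  # exploit


def run_bandit(true_means: List[float], steps: int = 2000, epsilon: float = 0.1, seed: int = 0) -> List[float]:
    rng = random.Random(seed)
    estimates = [0.0] * len(true_means)
    counts = [0] * len(true_means)
    for _ in range(steps):
        action = epsilon_greedy(estimates, epsilon, rng)
        reward = rng.gauss(true_means[action], 1.0)  # noisy feedback from the environment
        counts[action] += 1
        estimates[action] += (reward - estimates[action]) / counts[action]  # running average
    return estimates


estimates = run_bandit([0.2, 1.5, 0.5])
print([round(e, 2) for e in estimates])
```

With `epsilon=0` the agent can lock onto whichever action happened to look good first; with `epsilon=1` it never uses what it has learned.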

Step 4: Create the Learning Mechanism

Learning is an essential aspect of an agentic AI system. To implement this, you need to integrate learning systems into the agent so it can adapt to new situations. With RL, the agent receives rewards (or penalties) based on the decisions it makes, helping it understand what leads to success.

You can also use supervised learning if you already have labeled data to teach the agent. Either way, the key is to set up strong feedback loops so the agent can improve continuously. Think of it like training your agent until it can train itself.
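To show what learning from rewards and penalties can look like, here is tabular Q-learning on a hypothetical five-cell corridor. Reaching the goal earns +1, every other step costs a small penalty, and the agent gradually learns that moving right is best. The environment and hyperparameters (`alpha`, `gamma`, `epsilon`) are illustrative choices, not tuned values.

```python
import random
from typing import Dict, List, Tuple

N_STATES, GOAL = 5, 4  # hypothetical corridor: cells 0..4, goal at cell 4
ACTIONS = (-1, 1)      # move left or move right


def step(state: int, action: int) -> Tuple[int, float, bool]:
    nxt = min(max(state + action, 0), N_STATES - 1)  # walls at both ends
    if nxt == GOAL:
        return nxt, 1.0, True   # reward for reaching the goal
    return nxt, -0.01, False    # small penalty per step favors short paths


def q_learning(episodes: int = 300, alpha: float = 0.5, gamma: float = 0.9,
               epsilon: float = 0.2, seed: int = 0) -> Dict[Tuple[int, int], float]:
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore occasionally, otherwise take the best-known action.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            nxt, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            # Move the estimate toward reward + discounted value of the best next action.
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = nxt
    return q


def greedy_policy(q: Dict[Tuple[int, int], float]) -> List[int]:
    # Best learned action in every non-goal cell.
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]


q_table = q_learning()
print(greedy_policy(q_table))  # [1, 1, 1, 1] (always move right)
```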

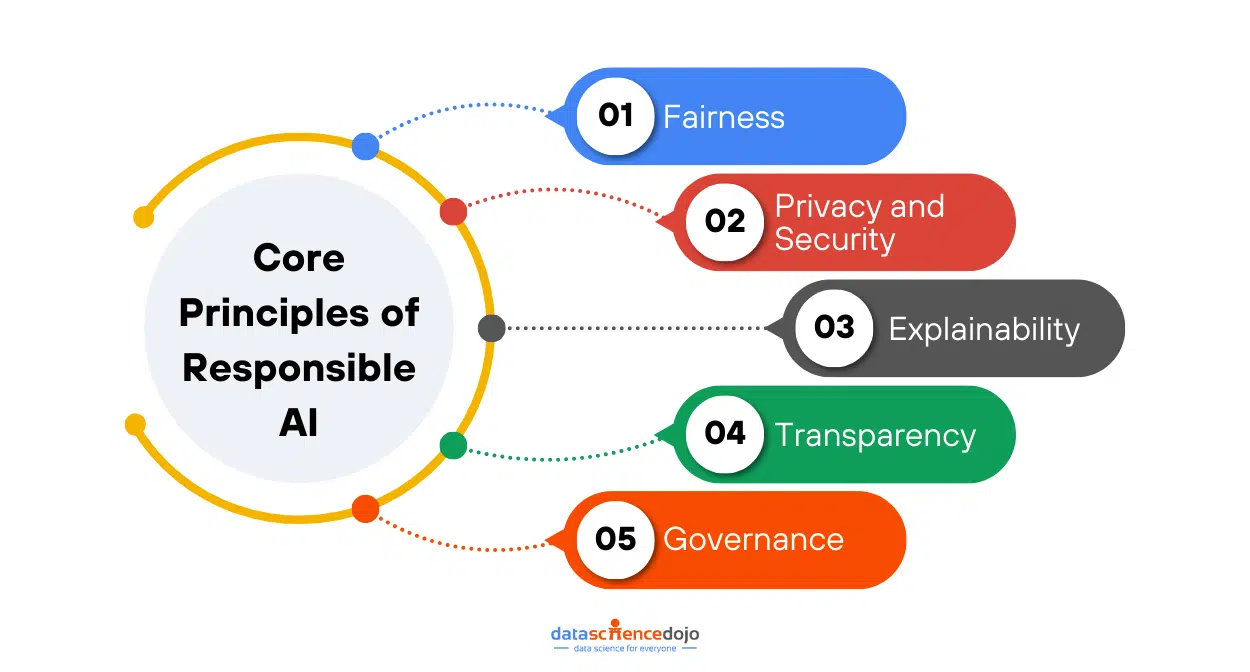

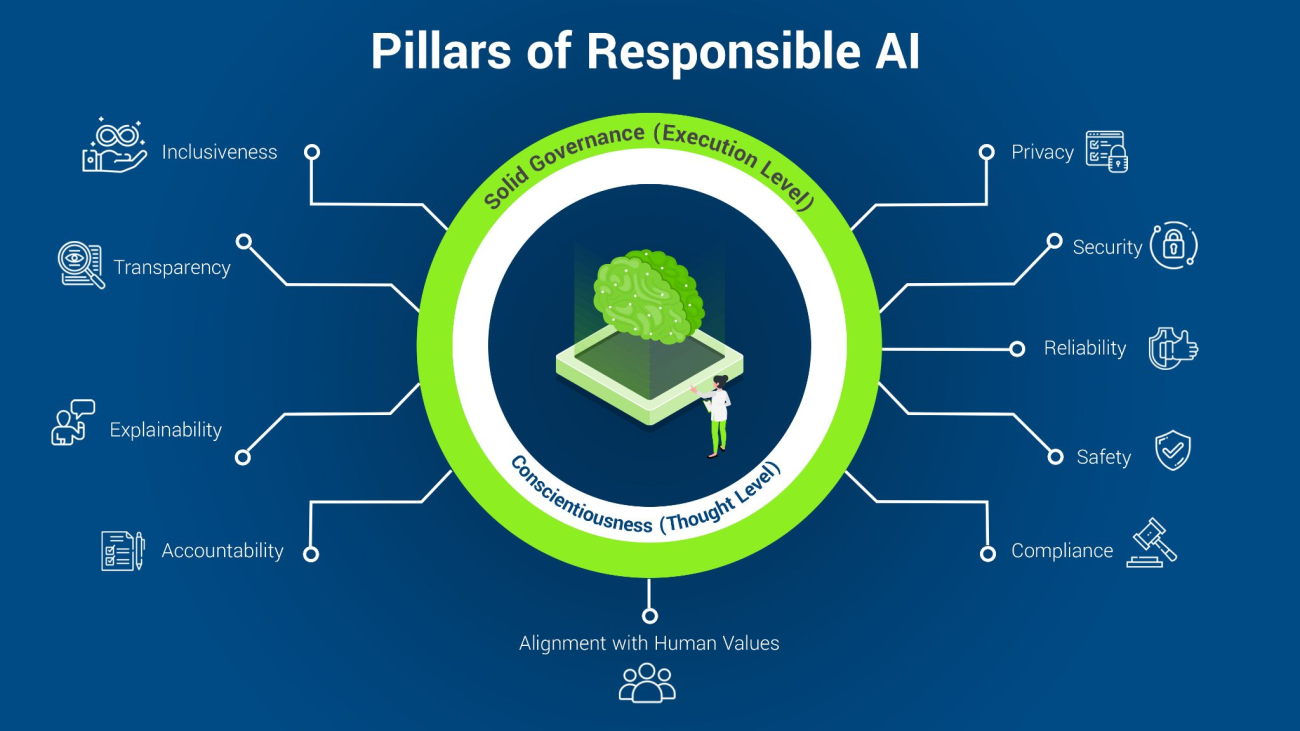

Step 5: Incorporate Safety and Ethical Constraints

Now comes the important part: making sure the agent behaves responsibly and within ethical boundaries. This matters most when your agent’s decisions can affect people’s lives, such as recommending loans, screening job candidates, or driving a car. Safety and ethical checks need to be in place right from the start.

You can use tools like constraint-based learning, reward shaping, or safe exploration methods to make sure your agent does not make risky or unfair decisions. You should also consider fairness, transparency, and accountability to align your agent with human values.
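Two of the techniques mentioned above can be sketched in a few lines: a hard constraint that removes unsafe actions before the agent chooses (often called action masking) and reward shaping that penalizes risk. The driving options, risk scores, thresholds, and the `defer_to_human` fallback are all hypothetical; estimating risk reliably is the hard part and is out of scope here.

```python
from typing import Callable, Dict, List


def safe_actions(actions: List[str], is_allowed: Callable[[str], bool]) -> List[str]:
    # Hard constraint: disallowed actions are never offered to the policy.
    return [a for a in actions if is_allowed(a)]


def shaped_reward(task_reward: float, risk: float, penalty_weight: float = 2.0) -> float:
    # Reward shaping: subtract a penalty proportional to estimated risk.
    return task_reward - penalty_weight * risk


def choose(actions: Dict[str, Dict[str, float]], max_risk: float = 0.5) -> str:
    allowed = safe_actions(list(actions), lambda a: actions[a]["risk"] <= max_risk)
    if not allowed:
        return "defer_to_human"  # hypothetical fallback when nothing is safe
    return max(allowed, key=lambda a: shaped_reward(actions[a]["reward"], actions[a]["risk"]))


driving_options = {
    "speed_through_yellow": {"reward": 1.0, "risk": 0.7},  # masked out: risk too high
    "slow_down": {"reward": 0.6, "risk": 0.1},
    "change_lane": {"reward": 0.8, "risk": 0.3},
}
print(choose(driving_options))  # slow_down
```

The hard constraint and the penalty play different roles: the constraint rules actions out entirely, while shaping only makes risky-but-allowed actions less attractive.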

Step 6: Test and Simulate

Now that your agent is ready, it is time to give it a test run. Simulated environments like Unity ML-Agents, CARLA (for driving), or Gazebo (for robotics) allow you to model real-world conditions in a safe, controlled way.

It is like a practice field for your AI, where it can make mistakes, learn from them, and try again. Expose your agent to different scenarios, edge cases, and unexpected challenges to ensure it adapts rather than just memorizing patterns. The more thoroughly you test your agent, the more reliable it will be once deployed.
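Simulators like CARLA or Gazebo are far richer than this, but the principle of testing against both typical and edge-case scenarios can be shown with a toy braking check in Python. The braking model (a constant 6 m/s² deceleration), the two policies, and the scenario ranges are assumptions for illustration only.

```python
import random
from typing import Callable, List, Tuple

BRAKING_DECEL = 6.0  # m/s^2, assumed constant deceleration


def stopping_distance(speed_mps: float) -> float:
    return speed_mps ** 2 / (2 * BRAKING_DECEL)


def naive_policy(speed_mps: float, obstacle_m: float) -> str:
    # Ignores speed: brakes only when the obstacle is very close.
    return "brake" if obstacle_m < 10 else "cruise"


def cautious_policy(speed_mps: float, obstacle_m: float) -> str:
    # Brakes with a 50% safety margin over the stopping distance.
    return "brake" if obstacle_m <= 1.5 * stopping_distance(speed_mps) else "cruise"


def is_safe(speed_mps: float, obstacle_m: float, action: str) -> bool:
    # The toy simulator's ground truth: braking is required inside the stopping distance.
    return action == "brake" or obstacle_m > stopping_distance(speed_mps)


def pass_rate(policy: Callable[[float, float], str], cases: List[Tuple[float, float]]) -> float:
    return sum(is_safe(s, d, policy(s, d)) for s, d in cases) / len(cases)


rng = random.Random(42)
scenarios = {
    "typical": [(rng.uniform(5, 15), rng.uniform(30, 100)) for _ in range(500)],
    "edge_high_speed": [(rng.uniform(25, 35), rng.uniform(10, 60)) for _ in range(500)],
}
for name, cases in scenarios.items():
    print(f"{name}: naive={pass_rate(naive_policy, cases):.2f}, "
          f"cautious={pass_rate(cautious_policy, cases):.2f}")
```

The naive policy passes every typical case, so testing only typical scenarios would hide its failure at high speed.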

Step 7: Monitor and Improve

Once your agent has been tested and goes live, the next step is to monitor its real-world performance and improve it where possible. This is an iterative process, so set up systems that track how the agent is doing in real time.

Continuous learning lets the agent evolve with new data and feedback. You might need to tweak its reward signals, update its learning model, or fine-tune its goals. Think of this as maintenance and growth rolled into one. The goal is to have an agent that not only works well today but gets even smarter tomorrow.
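A simple starting point for monitoring is a rolling success rate that raises a flag when performance drops. This Python sketch assumes each interaction can be scored as a success or failure; the window size and alert threshold are placeholders you would tune for your own system.

```python
from collections import deque


class PerformanceMonitor:
    """Tracks a rolling success rate and flags when it falls below a threshold."""

    def __init__(self, window: int = 100, alert_below: float = 0.9) -> None:
        self.results: deque = deque(maxlen=window)
        self.alert_below = alert_below

    def record(self, success: bool) -> None:
        self.results.append(success)  # the oldest result drops out once the window is full

    @property
    def success_rate(self) -> float:
        if not self.results:
            return 1.0  # no data yet; treat as healthy
        return sum(self.results) / len(self.results)

    def needs_attention(self) -> bool:
        # Only alert once the window is full, to avoid noisy early alarms.
        return len(self.results) == self.results.maxlen and self.success_rate < self.alert_below


monitor = PerformanceMonitor(window=10, alert_below=0.8)
for _ in range(10):
    monitor.record(True)
print(monitor.needs_attention())  # False
for _ in range(3):
    monitor.record(False)
print(monitor.success_rate, monitor.needs_attention())  # 0.7 True
```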

This entire process is about responsibility, adaptability, and purpose. Whether you are building a helpful assistant or a mission-critical system, following these steps can help you create an AI that acts with autonomy and accountability.

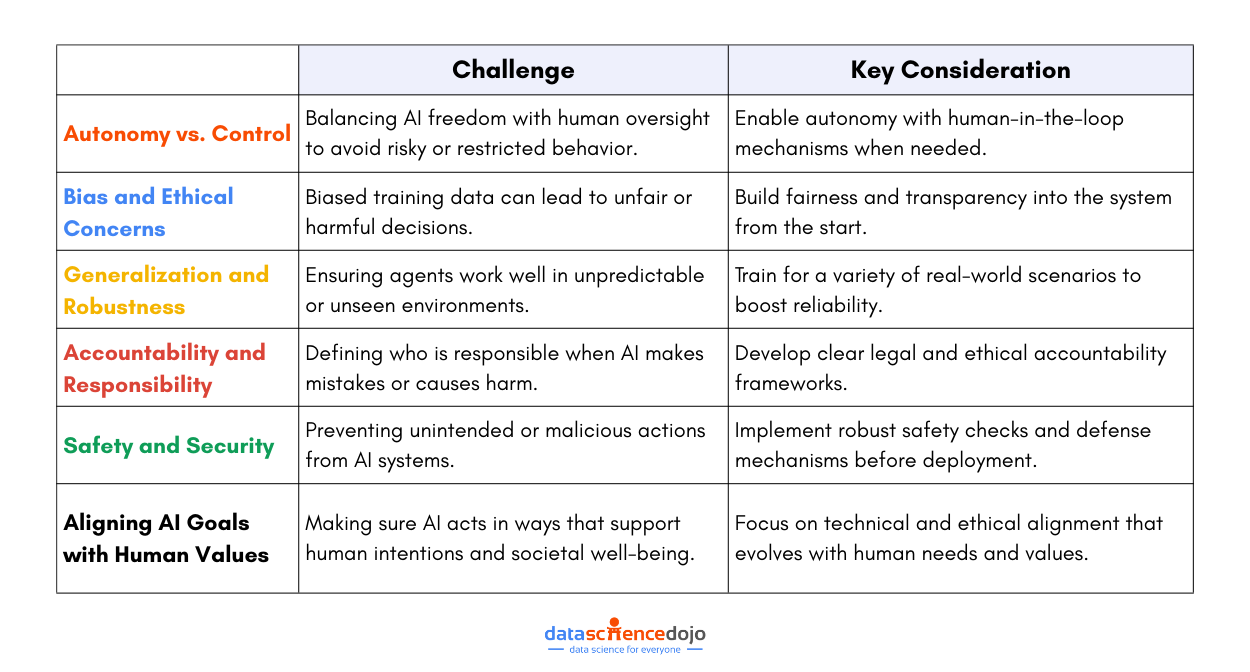

Key Challenges in Agentic AI

Building systems that can think and act on their own comes with serious challenges. With autonomy comes complexity, uncertainty, and responsibility.

Let’s break down some of the major hurdles you can face when designing and deploying agentic AI.

Autonomy vs. Control

One of the biggest challenges is finding the right balance between giving an agent the freedom to make decisions and maintaining enough control to guide it safely. With too much freedom, AI might act in unexpected or risky ways. On the other hand, too much control stops it from being truly autonomous.

For instance, a warehouse robot needs to change its route to avoid obstacles. That requires autonomy, but if the robot skips safety checks along the way, it can put people and operations at risk. Thus, you need smart ways to allow autonomy while still keeping humans in the loop when needed.
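One common pattern for keeping humans in the loop is an approval gate: low-risk actions run autonomously, while higher-risk ones wait for a person. This sketch assumes a risk score between 0 and 1 is already available for each action; the threshold and action names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    risk: float  # 0.0 (harmless) to 1.0 (dangerous); how it is estimated is out of scope


def execute_with_oversight(action: Action, approve: Callable[[Action], bool],
                           auto_threshold: float = 0.3) -> str:
    if action.risk <= auto_threshold:
        return f"executed {action.name} autonomously"  # low risk: no human needed
    if approve(action):  # higher risk: ask a human first
        return f"executed {action.name} after human approval"
    return f"blocked {action.name}"


print(execute_with_oversight(Action("reroute_around_box", 0.1), approve=lambda a: False))
print(execute_with_oversight(Action("enter_shared_walkway", 0.8), approve=lambda a: False))
```

In a real system, `approve` might open a ticket or prompt an operator; the `lambda` here simply simulates a human decision.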

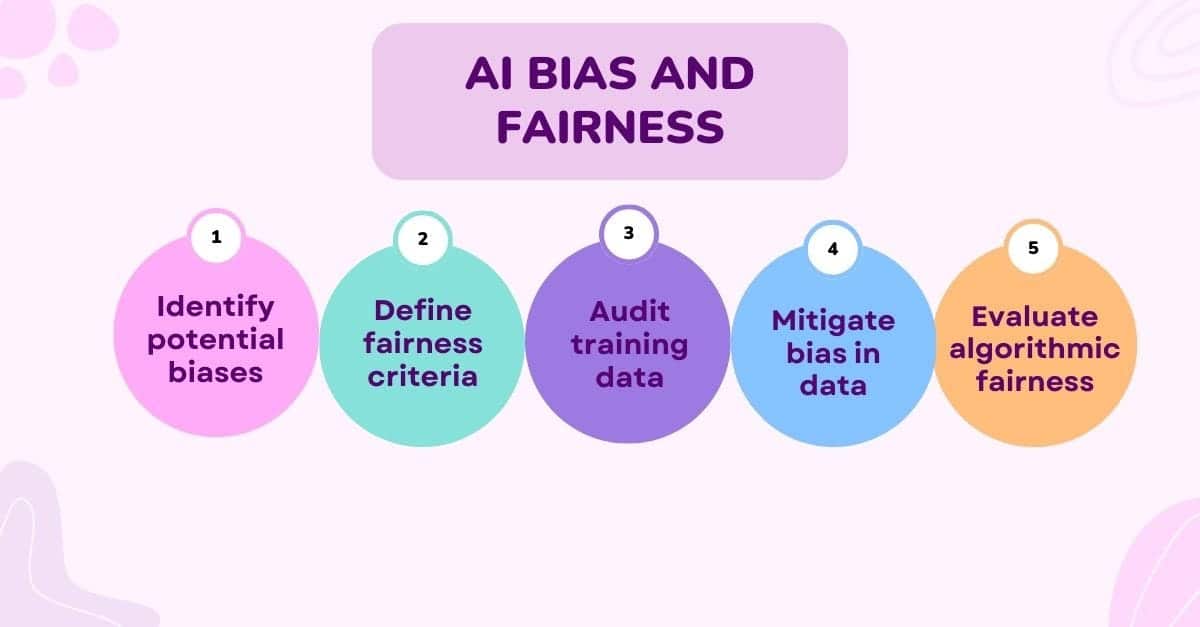

Bias and Ethical Concerns

AI systems learn from data, which can be biased. If an agent is trained on flawed or biased data, it may make unfair or even harmful decisions. An agentic AI making biased decisions can lead to real-world harm.

Unlike traditional software, these agents learn and evolve, making it harder to spot and fix ethical issues after the fact. It is crucial to build transparency and fairness into the system from the start.
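A basic fairness audit can start by comparing outcome rates across groups. The sketch below computes the demographic parity gap, one of several fairness metrics, on synthetic loan decisions. The groups, data, and the 0.1 tolerance are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def approval_rates(decisions: List[Tuple[str, bool]]) -> Dict[str, float]:
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions: List[Tuple[str, bool]]) -> float:
    # Difference between the highest and lowest approval rate across groups.
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Synthetic decisions: group A approved 8 of 10, group B approved 5 of 10.
decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
gap = demographic_parity_gap(decisions)
print(f"parity gap: {gap:.2f}")  # parity gap: 0.30
if gap > 0.1:  # hypothetical tolerance
    print("flag model for fairness review")
```

A gap alone does not prove unfairness, since groups can differ for legitimate reasons, but it is a cheap signal for when a closer look is needed.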

Generalization and Robustness

Real-world environments are messy and unpredictable, so agentic AI needs to handle situations it was not explicitly trained on. For instance, imagine a home assistant robot trained in a clean, well-lit house.

What happens when it is placed in a cluttered apartment or has to work during a power outage? Agents need to be designed to generalize and stay stable across diverse environments. That is key to making them truly reliable.

Accountability and Responsibility

Accountability is a crucial challenge in agentic AI. If something goes wrong, who is to blame: the developer, the company, or the AI itself? This is a big legal and ethical gray area.

If an autonomous vehicle causes an accident or an AI advisor gives poor financial advice, there needs to be a clear line of responsibility. As agentic AI becomes more widespread, we need frameworks to address accountability in a fair and consistent way.

Safety and Security

Agentic AI has the potential to act in ways its developers never intended. This opens up a whole new range of safety issues, from self-driving cars making unsafe maneuvers to chatbots generating harmful content.

Moreover, there is the threat of adversarial attacks that trick AI systems into malfunctioning. To guard against such attacks, it is important to build robust safety mechanisms and ensure secure operation before rolling these systems out widely.

Aligning AI Goals with Human Values

Ensuring that your agentic AI understands and follows human goals is more complex than it may seem. It is arguably one of the hardest challenges in agentic AI.

This alignment must be technical, moral, and social to ensure the agent operates accurately and ethically. An AI agent might figure out how to hit a target metric in ways that are not in our best interest, for example, maximizing screen time by encouraging unhealthy habits.

To overcome this challenge, design and refine your agent so that its goals stay aligned with human values. True alignment means teaching AI not just what to do but also why, while ensuring its goals evolve as people’s needs and values change.
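The screen-time example can be made concrete with a toy comparison between a proxy objective and one that also accounts for user wellbeing. All options, scores, and the weighting below are invented for illustration; real alignment is far harder than choosing a weight.

```python
from typing import Dict

# Invented options and scores, for illustration only.
OPTIONS: Dict[str, Dict[str, float]] = {
    "autoplay_endless_feed": {"minutes": 90, "wellbeing": -0.6},
    "curated_daily_digest": {"minutes": 25, "wellbeing": 0.4},
    "feed_with_break_reminders": {"minutes": 40, "wellbeing": 0.2},
}


def proxy_score(option: Dict[str, float]) -> float:
    # The proxy metric: screen time only.
    return option["minutes"]


def aligned_score(option: Dict[str, float], wellbeing_weight: float = 100.0) -> float:
    # Screen time still counts, but harm to wellbeing is penalized.
    return option["minutes"] + wellbeing_weight * option["wellbeing"]


best_by_proxy = max(OPTIONS, key=lambda name: proxy_score(OPTIONS[name]))
best_aligned = max(OPTIONS, key=lambda name: aligned_score(OPTIONS[name]))
print(best_by_proxy)  # autoplay_endless_feed
print(best_aligned)   # curated_daily_digest
```

The agent is "successful" under both objectives; what changes is which behavior counts as success, which is exactly where alignment work happens.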

Tackling these challenges head-on is the only way to build systems we can trust and rely on in the real world. The more we invest in safety, ethics, and alignment today, the brighter and more beneficial the future of agentic AI will be.

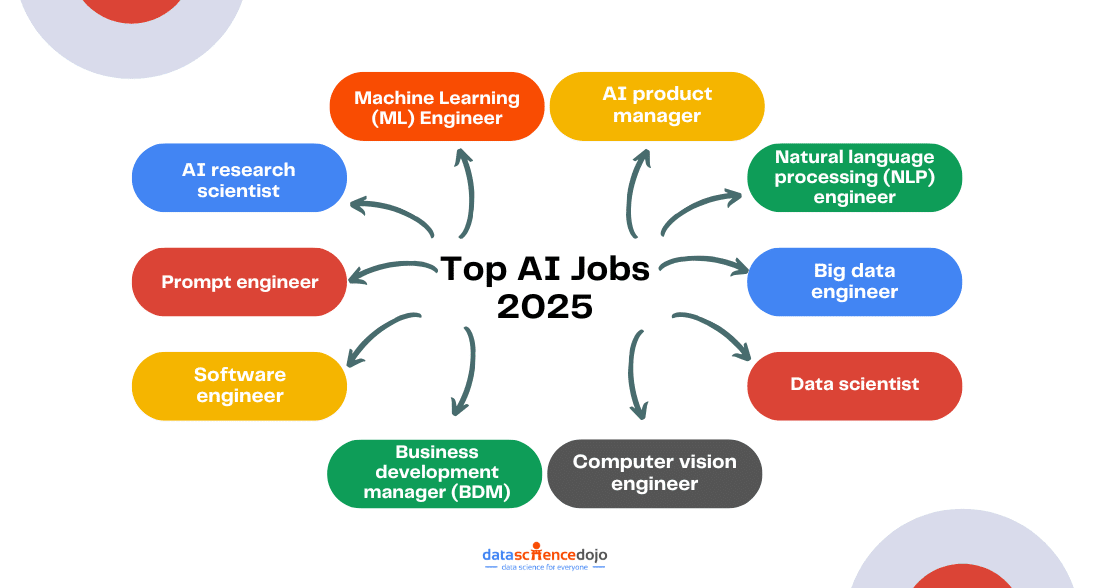

The Future Is Autonomous – Are You Ready for It?

Agentic AI is here, quietly changing the way we live and work. Whether it is a smart assistant adjusting your lights or a fleet of robots managing warehouse inventory, these systems are doing more than just following rules. They are learning, adapting, and making real decisions on their own.

And let’s be honest, this shift is exciting and a little daunting. Giving machines the power to think and act means we need to rethink how we build, manage, and trust them. From safety and ethics to alignment and accountability, there is a lot to get right.

But that is also what makes this such an important moment. The tools, the frameworks, and the knowledge are all evolving fast, and there has never been a better time to be part of the conversation.

If you are curious about where all this is headed, make sure to check out the Rise of Agentic AI Conference by Data Science Dojo, happening on May 27 and 28, 2025. It brings together AI experts, innovators, and curious minds like yours to explore what is next in autonomous systems.

Agentic AI is shaping the future. The question is – will you be leading the charge or catching up? Let’s find out together.