
Learn to use Python effectively for data analysis, machine learning and data engineering

The Python for Data Science Bootcamp is designed for learners who want to build a strong foundation in Python and apply it confidently to real-world data tasks—no prior coding experience required.

Start your journey with the most essential language in data science. Learn how to analyze datasets, write clean code, and automate tasks so you're set up for advanced analytics roles.

Gain practical programming skills that help you stand out in internships, university projects, and job applications across tech, business, finance, and consulting.

Breaking into data from a non-tech background? This bootcamp gives you the fundamentals—syntax, data handling, and problem-solving with Python—so you can transition smoothly into analytics and data roles.

Learn from thought leaders at the forefront of data science and build a strong foundation in Python that you can apply confidently to real-world data tasks. No prior coding experience required.

Overview of the topics and practical exercises.

Working with data starts with knowing how to access, store, and manage it effectively. In this module, we will explore how to load data from different sources like databases, CSV and Excel files, JSON, and web APIs while learning the best ways to store and organize it. Understanding different file formats helps ensure smooth workflows and compatibility across systems. Whether you’re a data scientist, analyst, or engineer, these skills are essential for working with data efficiently at every stage.
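As a small taste of this module, here is a minimal sketch that uses only Python's standard library (the module itself also covers tools such as pandas). It assumes hypothetical in-memory CSV and JSON samples in place of real files or API responses. It loads both into one common list of records and then writes them back out as CSV, which illustrates moving data between formats.

```python
import csv
import io
import json

# Hypothetical sample data standing in for a CSV file and a JSON API response.
CSV_TEXT = """id,name,score
1,Ada,91
2,Grace,85
"""

JSON_TEXT = '[{"id": 3, "name": "Alan", "score": 78}]'


def load_csv(text):
    """Parse CSV text into a list of dicts, converting numeric columns to int."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        {"id": int(row["id"]), "name": row["name"], "score": int(row["score"])}
        for row in reader
    ]


def load_json(text):
    """Parse a JSON array of records into a list of dicts."""
    return json.loads(text)


def to_csv(records):
    """Serialize records back to CSV text, e.g. to store them in one format."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["id", "name", "score"])
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Combine both sources into one list of records with a common structure.
records = load_csv(CSV_TEXT) + load_json(JSON_TEXT)
print(records)
print(to_csv(records))
```

Converting types while loading (here, `int` for `id` and `score`) keeps records from different sources consistent. This matters once they are combined.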


Before data can be analyzed, it needs to be clean, structured, and ready for use. This module covers essential data wrangling techniques to handle missing values, remove duplicates, merge datasets, reshape data, and manipulate text. By mastering these skills, we can ensure data quality, streamline analysis, and extract meaningful insights from raw information. Effective data wrangling is a crucial step in any data science workflow, making our datasets reliable and analysis-ready.
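The sketch below illustrates three of these wrangling steps: removing duplicates, filling missing values, and merging two datasets on a shared key. It uses plain Python on hypothetical customer and order records. In pandas, the same steps are typically done with `DataFrame.drop_duplicates()`, `fillna()`, and `merge()`. The hand-written helpers here (`drop_duplicates`, `fill_missing`, `inner_join`) are illustrative only.

```python
# Hypothetical raw records: one exact duplicate row and one missing value (None).
customers = [
    {"id": 1, "city": "Denver"},
    {"id": 2, "city": None},
    {"id": 1, "city": "Denver"},  # exact duplicate of the first row
]
orders = [
    {"id": 1, "amount": 120.0},
    {"id": 2, "amount": 80.5},
]


def drop_duplicates(rows):
    """Keep the first occurrence of each identical row, preserving order."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(sorted(row.items()))  # hashable fingerprint of the row
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def fill_missing(rows, column, default):
    """Return copies of rows with None in `column` replaced by `default`."""
    return [
        {**row, column: default if row[column] is None else row[column]}
        for row in rows
    ]


def inner_join(left, right, key):
    """Merge two lists of records on `key`; assumes `key` is unique in `right`."""
    index = {row[key]: row for row in right}
    return [{**row, **index[row[key]]} for row in left if row[key] in index]


clean = fill_missing(drop_duplicates(customers), "city", "Unknown")
merged = inner_join(clean, orders, "id")
print(merged)
```

Deduplicating before the merge matters. Otherwise, the duplicate customer row would be joined to its orders twice.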


In this module, we’ll cover key techniques for data exploration and visualization using Python libraries like pandas, NumPy, Matplotlib, and seaborn. We’ll learn how to explore datasets, create visualizations, and uncover insights that help in understanding patterns and relationships within the data. By the end, you’ll be able to effectively use visual tools to communicate your findings.


In this module, we will explore how to build efficient data pipelines for integrating, transforming, and managing data from various sources. We will cover the fundamentals of RESTful APIs, the HTTP protocol, and data extraction techniques. We will learn how to retrieve data from web services, understand the ETL (Extract, Transform, Load) process, and apply web scraping methods. These skills will help us automate data workflows and streamline data processing for analysis and decision-making.
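Here is a minimal sketch of the ETL process as three small functions. To keep it self-contained, it assumes a hypothetical JSON payload in place of a live REST API response. In practice, the extract step would fetch that payload over HTTP, for example with `urllib.request.urlopen` or the third-party `requests` library. The load step writes to an in-memory SQLite database, which stands in for whatever store a real pipeline targets.

```python
import json
import sqlite3

# Hypothetical JSON payload, standing in for the body of an HTTP GET response
# from a REST API (no network call is made here). Readings arrive as strings.
RAW_RESPONSE = """
[
  {"date": "2024-01-01", "temp_f": "50"},
  {"date": "2024-01-02", "temp_f": null},
  {"date": "2024-01-03", "temp_f": "41"}
]
"""


def extract(payload):
    """Extract: parse the raw JSON text into Python objects."""
    return json.loads(payload)


def transform(rows):
    """Transform: drop missing readings and convert Fahrenheit to Celsius."""
    return [
        (row["date"], round((float(row["temp_f"]) - 32) * 5 / 9, 1))
        for row in rows
        if row["temp_f"] is not None
    ]


def load(rows, conn):
    """Load: write the cleaned rows into a SQLite table."""
    conn.execute("CREATE TABLE IF NOT EXISTS readings (date TEXT, temp_c REAL)")
    conn.executemany("INSERT INTO readings VALUES (?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_RESPONSE)), conn)
print(conn.execute("SELECT * FROM readings").fetchall())
```

Keeping each stage as a separate function makes it easy to swap the source (a different API) or the destination (a different database) without touching the other steps.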


In this module, we will cover the essentials of machine learning using Python. We will explore key concepts like supervised and unsupervised learning, model selection, and training, along with popular libraries like scikit-learn. We’ll work through the process of preparing data, selecting the right algorithms, evaluating model performance, and applying machine learning techniques to real-world problems. By the end of this module, you’ll have a strong foundation in applying machine learning methods and be equipped to take on machine learning projects with confidence.
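The prepare, train, and evaluate workflow described above can be sketched in a few lines. In the module this is done with scikit-learn (for example `train_test_split` and an estimator's `fit`/`predict` methods). This sketch instead swaps in a hand-written nearest-centroid classifier on hypothetical synthetic data, so it runs with the standard library alone. The data, the 80/20 split, and the helper names `fit_centroids` and `predict` are all illustrative assumptions.

```python
import random

# Hypothetical labelled data: two well-separated clusters of 2-D points.
random.seed(0)
data = [((random.gauss(0, 1), random.gauss(0, 1)), "A") for _ in range(50)]
data += [((random.gauss(4, 1), random.gauss(4, 1)), "B") for _ in range(50)]
random.shuffle(data)

# Prepare: hold out 20% of the rows so evaluation uses unseen data.
split = int(len(data) * 0.8)
train, test = data[:split], data[split:]


def fit_centroids(rows):
    """Train: compute the mean feature vector (centroid) of each class."""
    sums, counts = {}, {}
    for (x, y), label in rows:
        sx, sy = sums.get(label, (0.0, 0.0))
        sums[label] = (sx + x, sy + y)
        counts[label] = counts.get(label, 0) + 1
    return {lab: (sx / counts[lab], sy / counts[lab]) for lab, (sx, sy) in sums.items()}


def predict(centroids, point):
    """Predict the class whose centroid is closest (squared Euclidean distance)."""
    x, y = point
    return min(
        centroids,
        key=lambda lab: (x - centroids[lab][0]) ** 2 + (y - centroids[lab][1]) ** 2,
    )


# Evaluate: fraction of held-out points whose predicted label is correct.
centroids = fit_centroids(train)
accuracy = sum(predict(centroids, p) == label for p, label in test) / len(test)
print(f"test accuracy: {accuracy:.2f}")
```

Evaluating on the held-out `test` rows rather than on `train` gives a fairer estimate of how the model will perform on new data.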


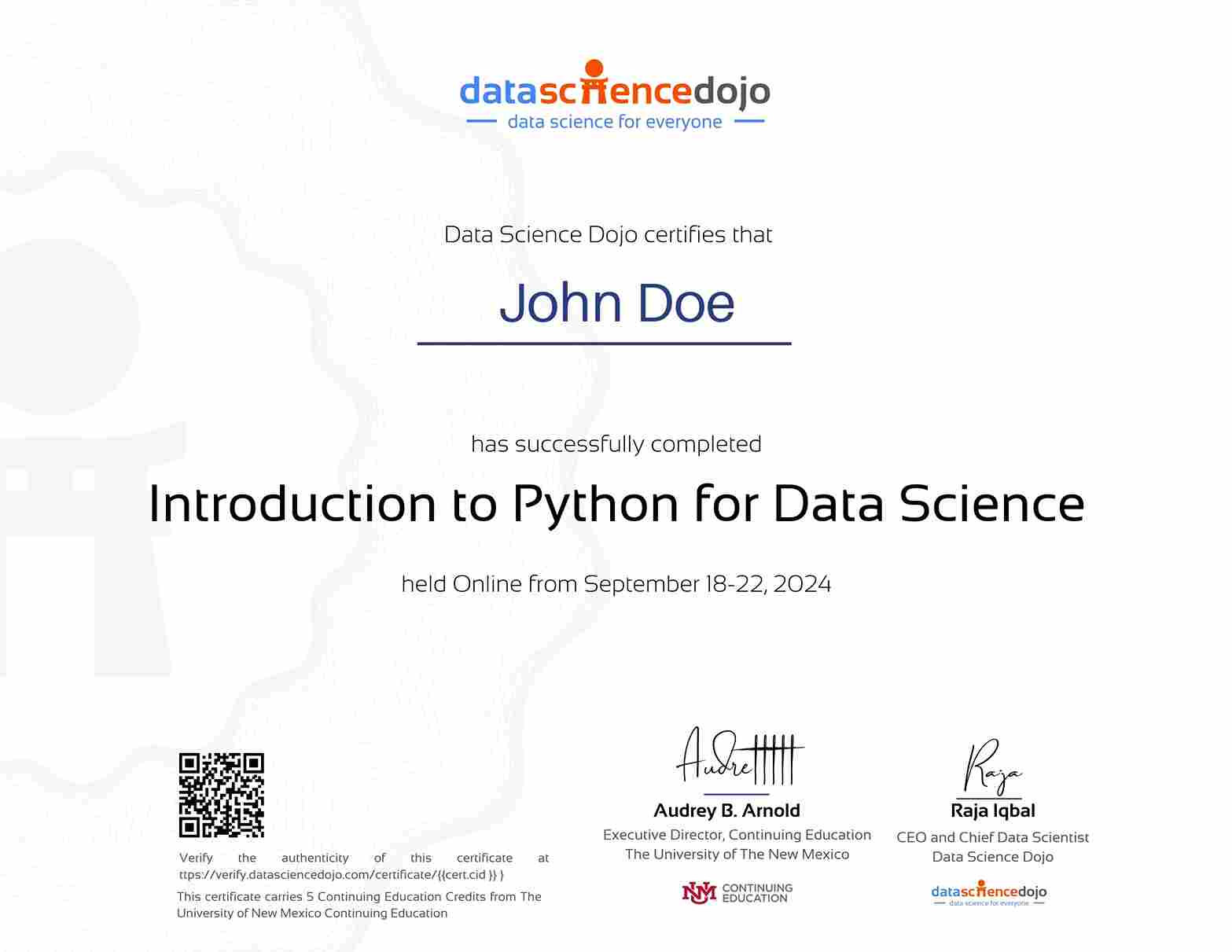

Earn a verified certificate from The University of New Mexico Continuing Education

All of our programs are backed by a certificate from The University of New Mexico Continuing Education. This means that you may be eligible to attend the bootcamp for FREE.

Learn Python for data science from leading experts in industry.

Access to the program content depends on the plan you choose at the time of registration. Learn more about the different plans on our website.

The Introduction to Python for Data Science program runs for 5 days, 3 hours per day, for a total of 15 hours of training. Additional practice material is available if you would like to keep refining your Python skills after the program ends.

There are no prerequisites for this program. However, our pre-course prep work includes tutorials on fundamental data science and Python programming concepts to help you prepare for the training.

Currently, this bootcamp is discontinued. We plan to relaunch it this year in a self-paced format. Once the updated structure and details are finalized, they will be shared here on our website.

The cost will depend on the plan purchased by the students and the discounts available at the time. Please contact us at [email protected] for updated information on discount availability and payment plans.

Yes, we are offering an early-bird discount on the Training Plan.

To register for the program, simply view our packages and register for the upcoming cohort. Payment can be made online through our website, by invoice, or by wire transfer.

Once you are registered for the program, you will receive a few emails from us. One of those emails will contain steps to create your learning portal account and access the program content. Please follow the steps in the email to create your account. If you’re facing any difficulty, please email us at [email protected] for assistance.

You may transfer to another cohort once at no charge. Each additional transfer incurs a $200 processing fee.

If, for any reason, you decide to cancel, we will gladly refund your registration fee in full if you notify us at least five business days before the start of the training. We can also transfer your registration to another cohort if preferred.

However, refunds cannot be processed if you have transferred to a different cohort after registration. Additionally, once you have been added to the learning platform and have accessed the course materials, we are unable to issue a refund, as digital content access is considered program participation.

While we do not provide exact recordings of the live sessions, all key topics and content covered during the class are available in our companion courses. We’ve created structured lesson clips that reflect the material discussed, allowing you to review everything at your convenience even if you miss a live session.