In recent years, the landscape of artificial intelligence has been transformed by the development of large language models like GPT-3 and BERT, renowned for their impressive capabilities and wide-ranging applications.

However, alongside these giants, a new category of AI tools is making waves: small language models (SLMs). These models, such as Llama 3, Phi-3, Mixtral 8x7B, and Gemma, combine advanced AI capabilities with significantly reduced computational demands.

Why Are Small Language Models Needed?

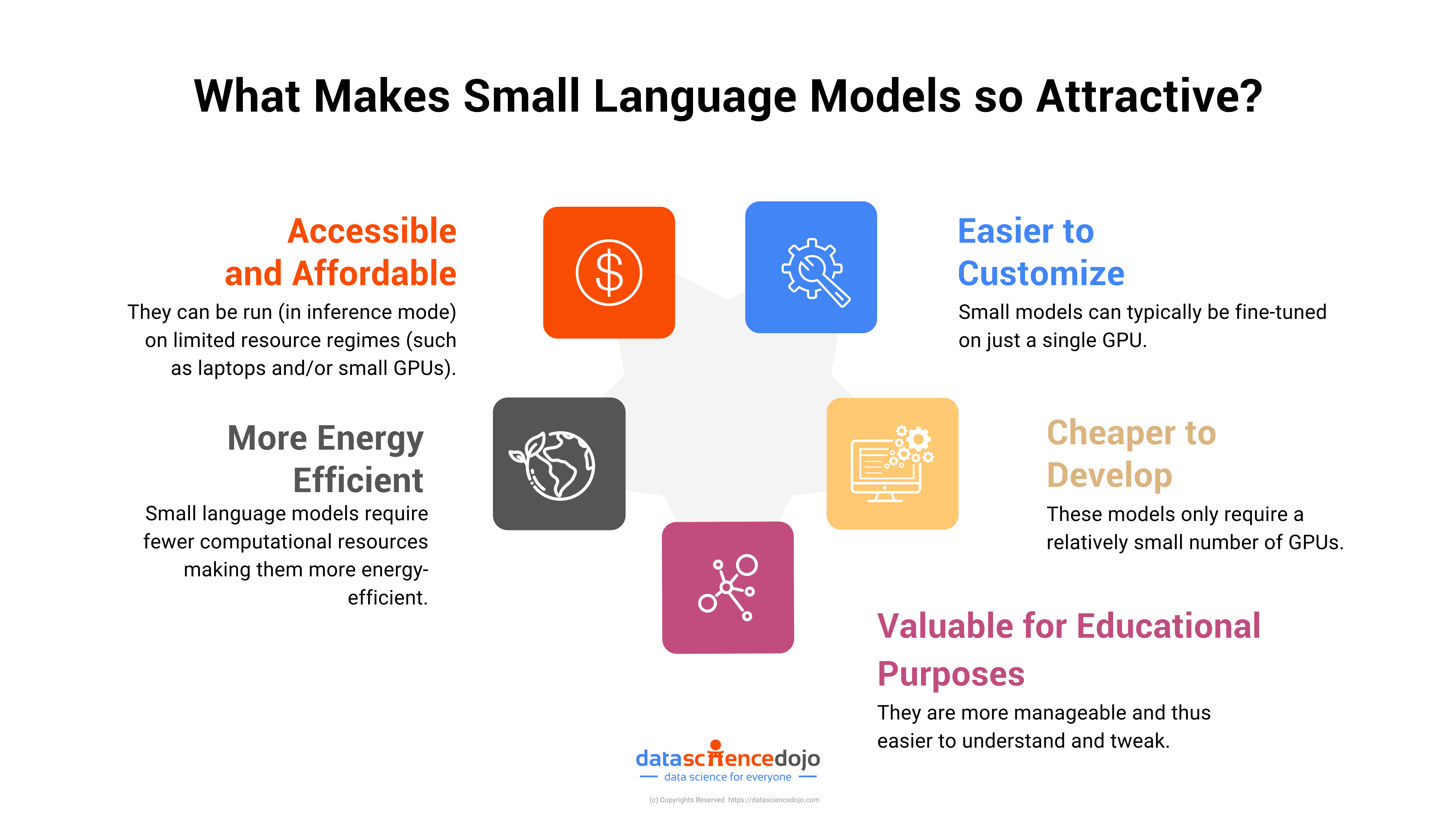

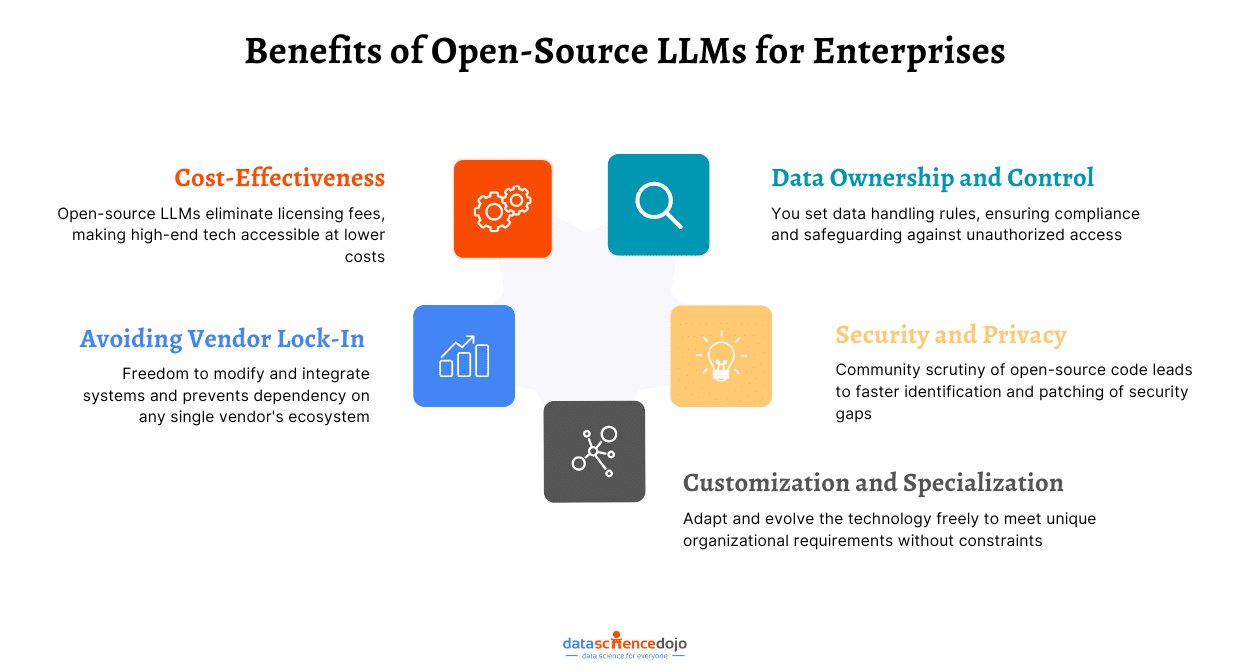

This shift towards smaller, more efficient models is driven by the need for accessibility, cost-effectiveness, and the democratization of AI technology.

Small language models require less hardware, consume less energy, and deploy faster, making them ideal for startups, academic researchers, and businesses that do not possess the immense resources often associated with big tech companies.

Moreover, their size does not merely signify a reduction in scale but also an increase in adaptability and ease of integration across various platforms and applications.

How Do Small Language Models Excel with Fewer Parameters?

Several factors explain why smaller language models can perform effectively with fewer parameters. Primarily, advanced training techniques play a crucial role. Methods like transfer learning let these models start from knowledge already captured during pretraining, so they can be adapted to specialized tasks with far less data and compute.

For example, knowledge distillation trains a small “student” model to reproduce the outputs of a large “teacher” model. The student can approach the teacher’s performance on the target task while needing far less computational power.
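To make the idea of distillation concrete, here is a minimal sketch of one common formulation of the distillation loss: the student is penalized for diverging from the teacher's temperature-softened output distribution. The function names, the example logits, and the temperature of 2.0 are illustrative assumptions, not taken from any specific model's training recipe.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    # Scaling by T^2 is a common convention to keep gradient sizes comparable.
    return kl * temperature ** 2

# Hypothetical logits over three classes (assumed values for illustration).
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]   # roughly agrees with the teacher
far_student = [0.5, 4.0, 1.0]     # disagrees with the teacher

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

In practice this term is usually combined with the ordinary cross-entropy loss on labeled data, so the student learns from both the ground truth and the teacher's softer predictions.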

Moreover, smaller models often focus on niche applications. By concentrating their training on targeted datasets, these models are custom-built for specific functions or industries, enhancing their effectiveness in those particular contexts.

For instance, a small language model trained exclusively on medical data could potentially surpass a general-purpose large model in understanding medical jargon and delivering accurate diagnoses.

However, it’s important to note that the success of a small language model depends heavily on its training regimen, fine-tuning, and the specific tasks it is designed to perform. Therefore, while small models may excel in certain areas, they might not always be the optimal choice for every situation.

Best Small Language Models in 2024

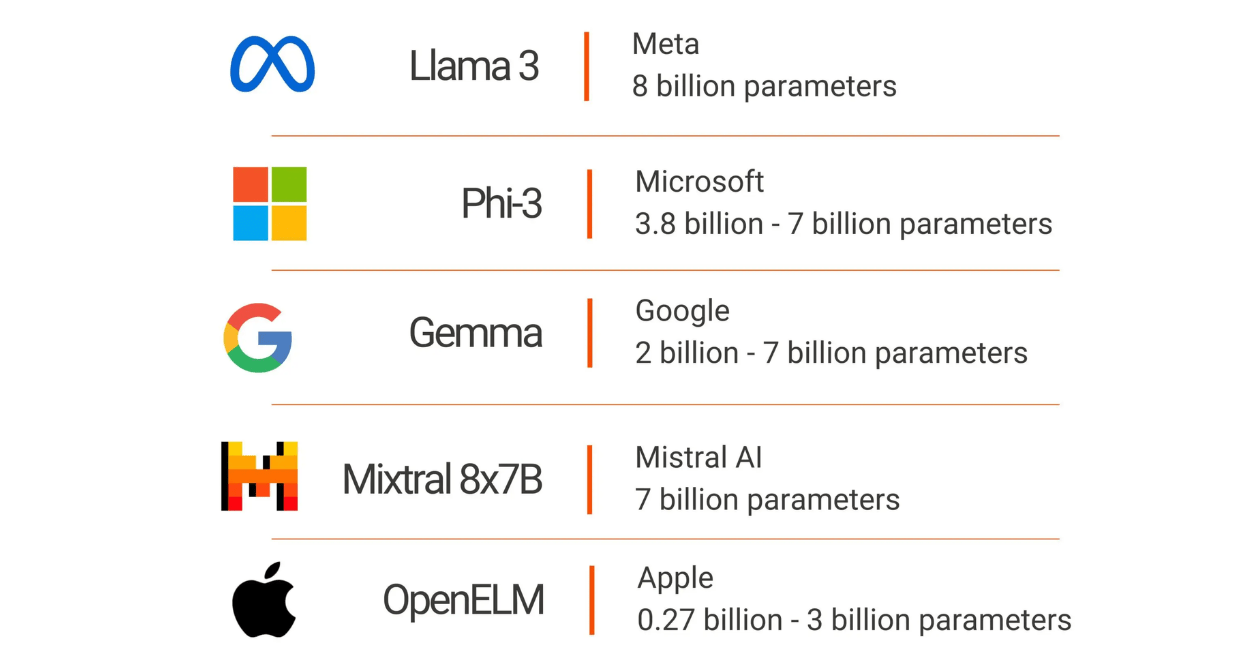

1. Llama 3 by Meta

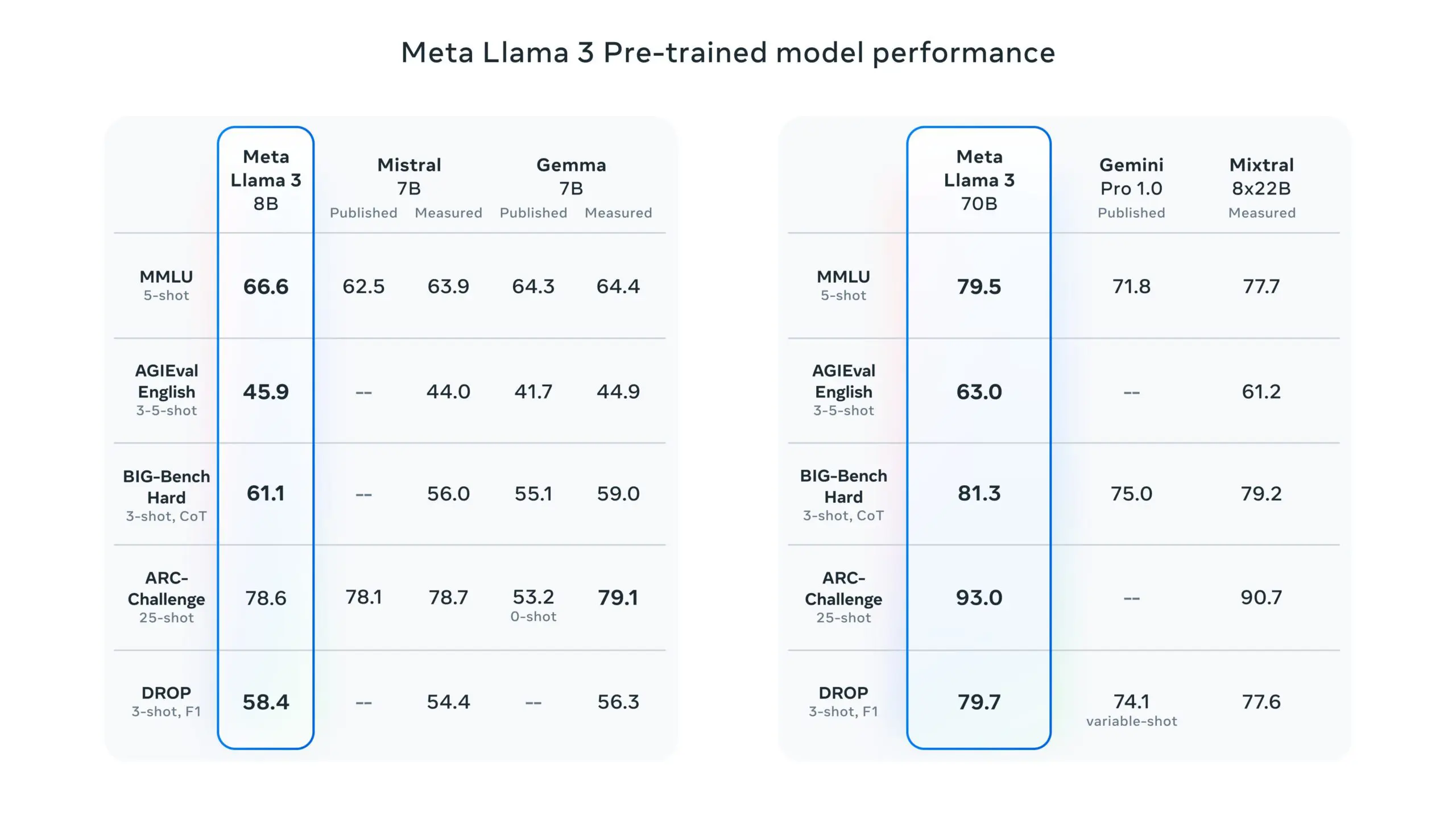

Llama 3 is an openly available language model developed by Meta. It is part of Meta’s broader strategy to encourage wider and more responsible AI usage by giving the community tools that are both powerful and adaptable.

This model builds upon the success of its predecessors by incorporating advanced training methods and architecture optimizations that enhance its performance across various tasks such as translation, dialogue generation, and complex reasoning.

Performance and Innovation

Meta’s Llama 3 was trained on a significantly larger dataset than earlier versions (more than 15 trillion tokens, according to Meta), using custom-built GPU clusters that let it process vast amounts of data efficiently.

This extensive training gives Llama 3 a better grasp of language nuances and stronger multi-step reasoning. The model is particularly noted for generating better-aligned and more diverse responses, making it a robust tool for developers building sophisticated AI-driven applications.

Why Does Llama 3 Matter?

The significance of Llama 3 lies in its accessibility and versatility. Because its weights are openly available, it democratizes access to state-of-the-art AI technology, allowing a broader range of users to experiment and develop applications.

This model is crucial for promoting innovation in AI, providing a platform that supports both foundational and advanced AI research. Meta offers both pretrained and instruction-tuned versions, so developers can fine-tune Llama 3 for specific applications, improving both performance and relevance to particular domains.

2. Phi-3 by Microsoft

Phi-3 is a pioneering series of SLMs developed by Microsoft, emphasizing high capability and cost-efficiency. As part of Microsoft’s ongoing commitment to accessible AI, Phi-3 models are designed to provide powerful AI solutions that are not only advanced but also more affordable and efficient for a wide range of applications.

These models are openly available, so they can be integrated and deployed in various environments, from cloud-based platforms like Microsoft Azure AI Studio to local setups on personal computing devices.

Performance and Significance

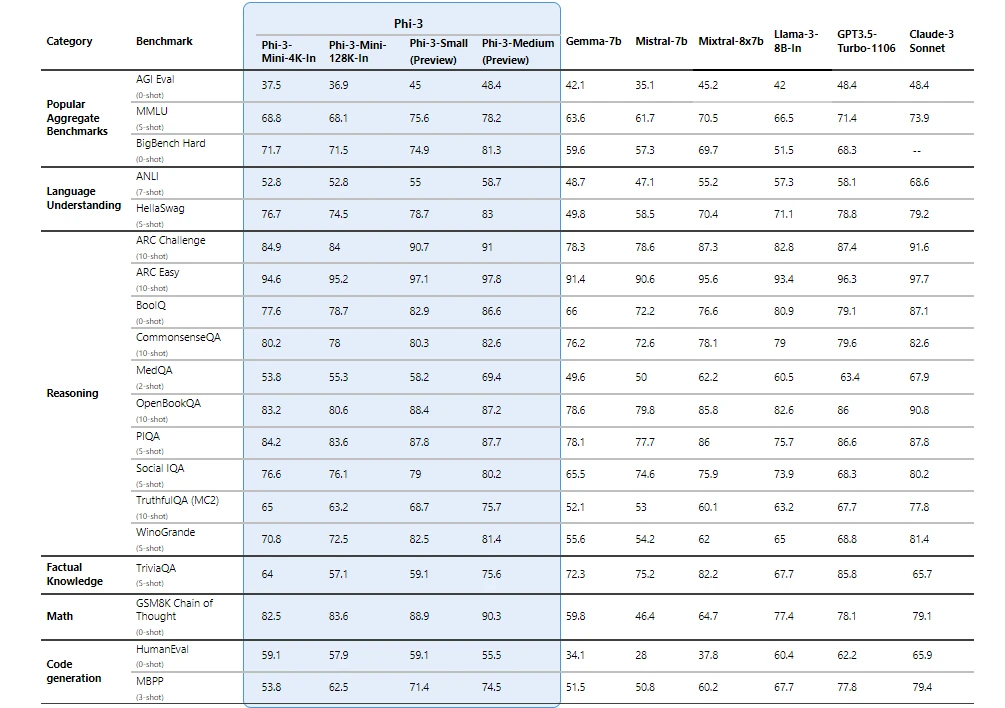

The Phi-3 models stand out for their performance: in Microsoft’s reported benchmarks, they outperform similarly sized models and some larger ones in tasks involving language processing, coding, and mathematical reasoning.

Notably, Phi-3-mini, a 3.8 billion parameter model within this family, is available in a version that handles up to 128,000 tokens of context, allowing it to process extensive text with minimal quality compromise.

Microsoft has optimized Phi-3 for diverse computing environments, supporting deployment across GPUs, CPUs, and mobile platforms.

Additionally, these models integrate seamlessly with other Microsoft technologies, such as ONNX Runtime for performance optimization and Windows DirectML for broad compatibility across Windows devices.

Why Does Phi-3 Matter?

The development of Phi-3 also reflects attention to AI safety and ethical deployment. Microsoft has aligned the development of these models with its Responsible AI Standard, which covers principles such as fairness, transparency, and security, so that the models are not just powerful but also trustworthy tools for developers.

3. Mixtral 8x7B by Mistral AI

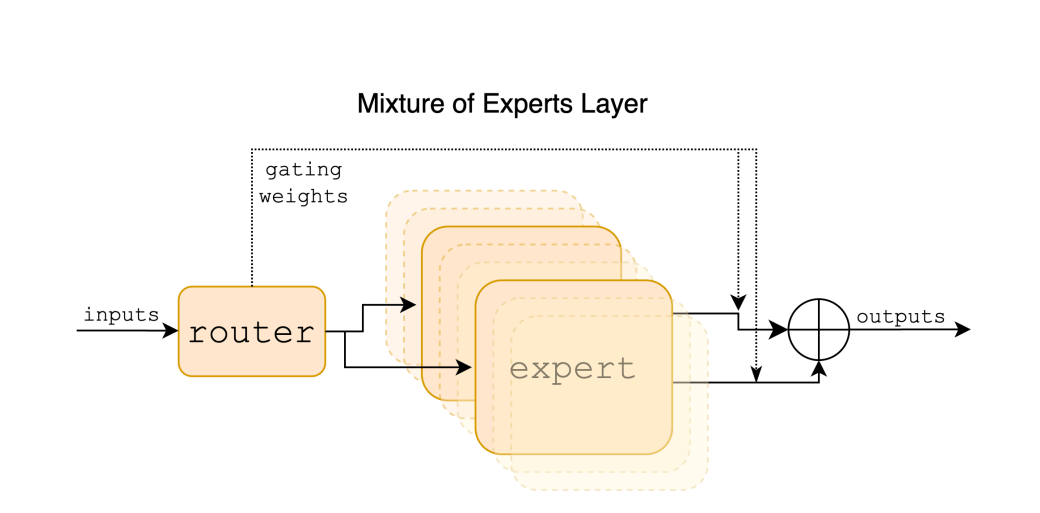

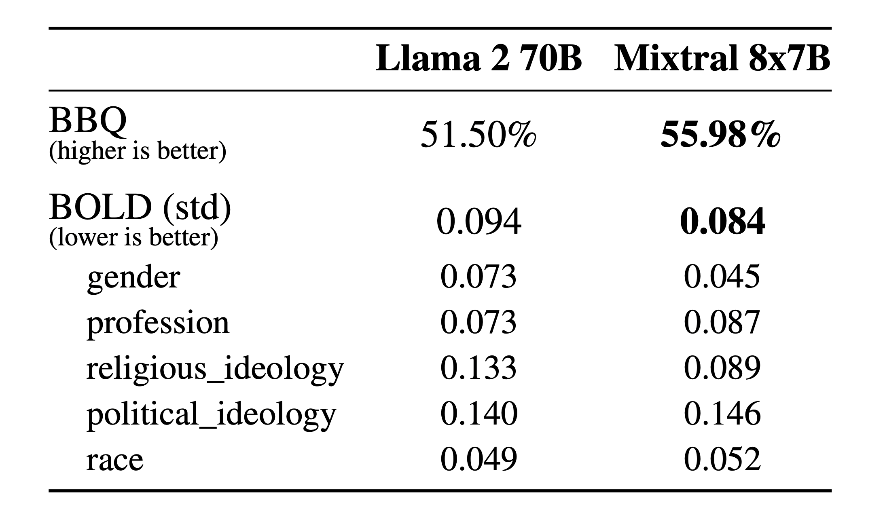

Mixtral, developed by Mistral AI, is a groundbreaking model known as a Sparse Mixture of Experts (SMoE). It represents a significant shift in AI model architecture by focusing on both performance efficiency and open accessibility.

Mistral AI, known for its foundation in open technology, has designed Mixtral to be a decoder-only model, where a router network selectively engages different groups of parameters, or “experts,” to process data.

This approach not only makes Mixtral highly efficient but also adaptable to a variety of tasks without requiring the computational power typically associated with large models.
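The routing idea can be sketched in a few lines. This is a toy illustration, not Mistral AI's implementation: it assumes eight experts with the top two selected per token, and it replaces the real feed-forward expert networks with simple scaling functions. The names `top_k_route` and `moe_layer` and the router scores are hypothetical.

```python
import math

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]

def moe_layer(x, experts, router_logits, k=2):
    """Weighted sum of the outputs of only the selected experts."""
    return sum(weight * experts[i](x)
               for i, weight in top_k_route(router_logits, k))

# Eight toy "experts": each one just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in range(1, 9)]

# Assumed router scores for one token; a real router computes these
# from the token's hidden state with a learned linear layer.
router_logits = [0.1, 2.0, -1.0, 0.3, 1.0, 0.0, -0.5, 0.2]

print(top_k_route(router_logits))            # which experts run, and their weights
print(moe_layer(1.0, experts, router_logits))
```

Only the two selected experts are ever called for this token; the other six contribute no computation, which is where the efficiency comes from.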

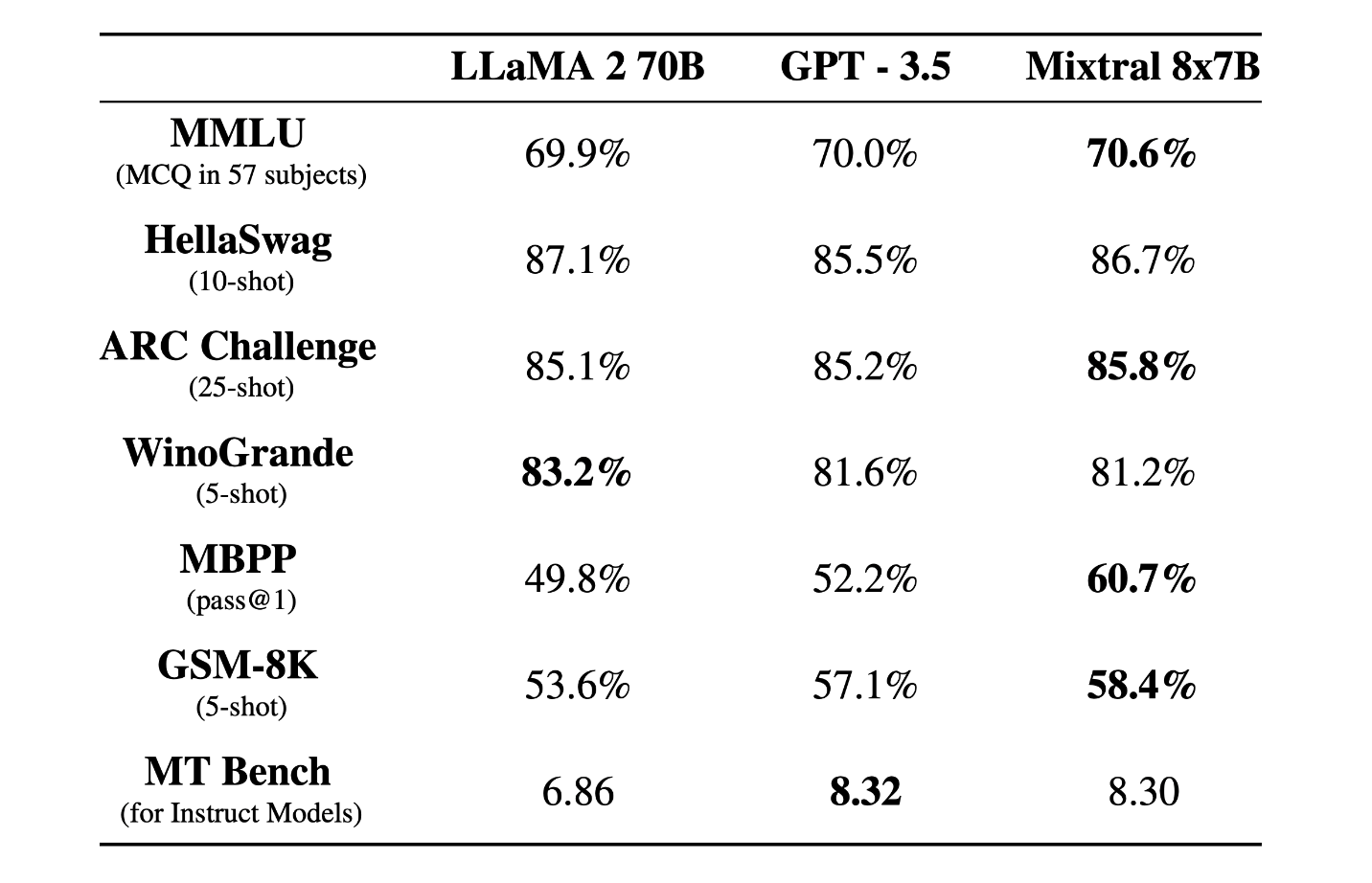

Performance and Innovations

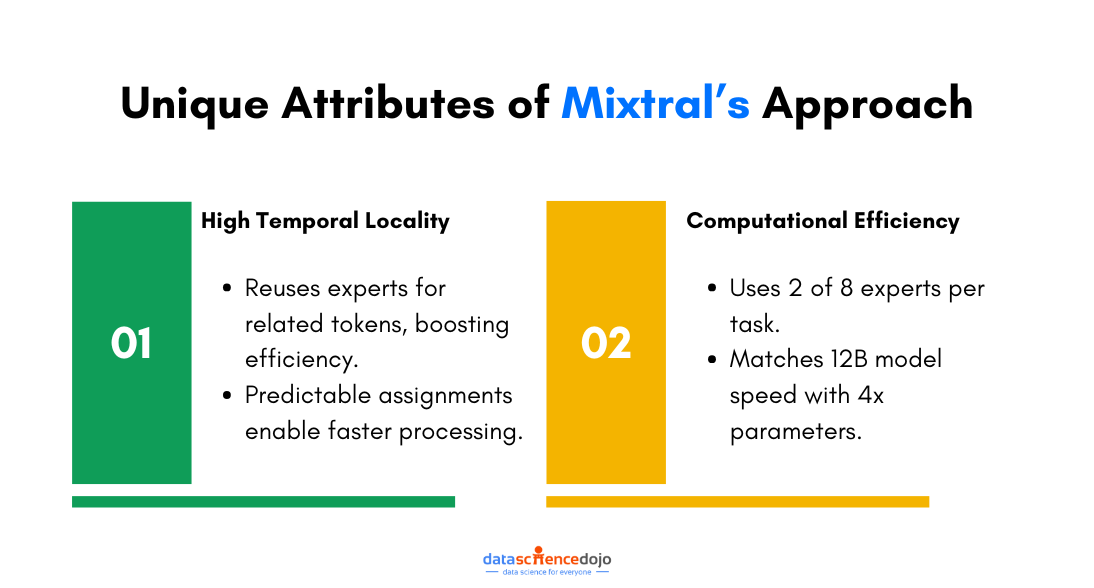

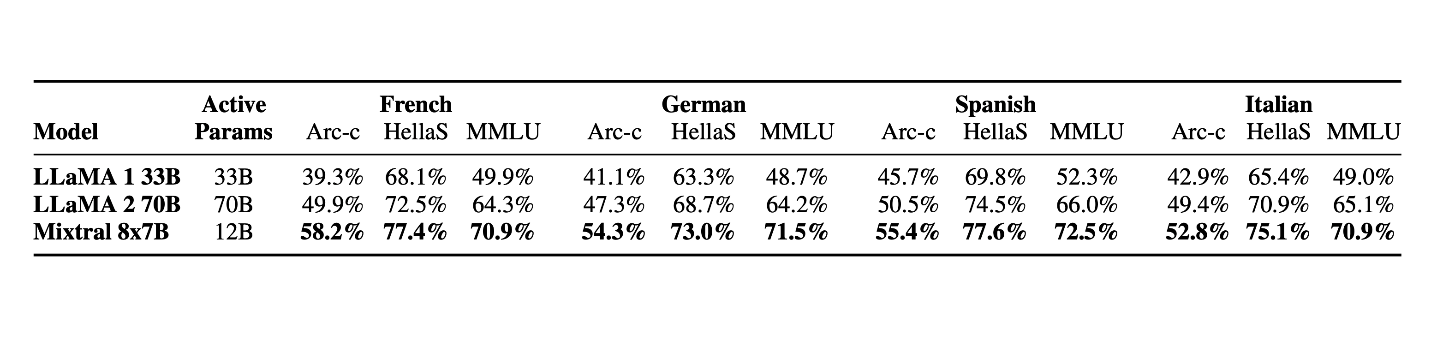

Mixtral excels in processing large contexts up to 32k tokens and supports multiple languages including English, French, Italian, German, and Spanish.

It has demonstrated strong capabilities in code generation and can be fine-tuned to follow instructions precisely, achieving high scores on benchmarks like MT-Bench.

What sets Mixtral apart is its efficiency—despite having a total parameter count of 46.7 billion, it effectively utilizes only about 12.9 billion per token, aligning it with much smaller models in terms of computational cost and speed.
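As a quick check of what those two figures imply, the share of parameters active for each token can be computed directly. The variable names are illustrative; the numbers are the ones quoted above.

```python
TOTAL_PARAMS_B = 46.7   # total parameters, in billions (from the text)
ACTIVE_PARAMS_B = 12.9  # parameters used per token, in billions (from the text)

active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
print(f"Active per token: {active_fraction:.1%} of all parameters")
```

Note that this ratio describes compute per token, not memory: all experts still have to be loaded, so the memory footprint follows the total parameter count.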

Why Does Mixtral Matter?

The significance of Mixtral lies in its open-source nature and its licensing under Apache 2.0, which encourages widespread use and adaptation by the developer community.

This model is not only a technological innovation but also a strategic move to foster more collaborative and transparent AI development. By making high-performance AI more accessible and less resource-intensive, Mixtral is paving the way for broader, more equitable use of advanced AI technologies.

Mixtral’s architecture represents a step towards more sustainable AI practices by reducing the energy and computational costs typically associated with large models. This makes it not only a powerful tool for developers but also a more environmentally conscious choice in the AI landscape.

4. Gemma by Google

Gemma is a new generation of open models introduced by Google, designed with the core philosophy of responsible AI development. Developed by Google DeepMind along with other teams at Google, Gemma leverages the foundational research and technology that also gave rise to the Gemini models.

Technical Details and Availability

Gemma models are designed to be lightweight while still delivering strong performance for their size, so they can run across a range of computing environments, from mobile devices to cloud-based systems.

Google has released two main versions of Gemma: a 2 billion parameter model and a 7 billion parameter model. Each of these comes in both pre-trained and instruction-tuned variants to cater to different developer needs and application scenarios.
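To see why these sizes matter for deployment, here is a rough back-of-the-envelope estimate of the memory needed just to hold the weights at different numeric precisions. It is a sketch under simplifying assumptions: it uses the nominal 2 billion and 7 billion parameter counts, ignores activations and the KV cache, and the helper name `weight_memory_gb` is made up for illustration.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gb(params_billions, precision):
    """Approximate memory for weights only, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * BYTES_PER_PARAM[precision] / 1e9

for name, size in [("Gemma 2B", 2), ("Gemma 7B", 7)]:
    for precision in ("fp16", "int4"):
        gb = weight_memory_gb(size, precision)
        print(f"{name} @ {precision}: ~{gb:.1f} GB")
```

Actual requirements are higher once runtime overhead is included, but the estimate shows why a quantized 2B model is plausible on a phone while larger models generally are not.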

Gemma models are freely available and supported by tools that encourage innovation, collaboration, and responsible usage.

Why Does Gemma Matter?

Gemma models are significant not just for their technical robustness but for their role in democratizing AI technology. By providing state-of-the-art capabilities in an open model format, Google facilitates broader adoption of and innovation in AI, allowing developers and researchers worldwide to build advanced applications without the high costs typically associated with large models.

Moreover, Gemma models are designed to be adaptable, allowing users to tune them for specialized tasks, which can lead to more efficient and targeted AI solutions.

5. OpenELM Family by Apple

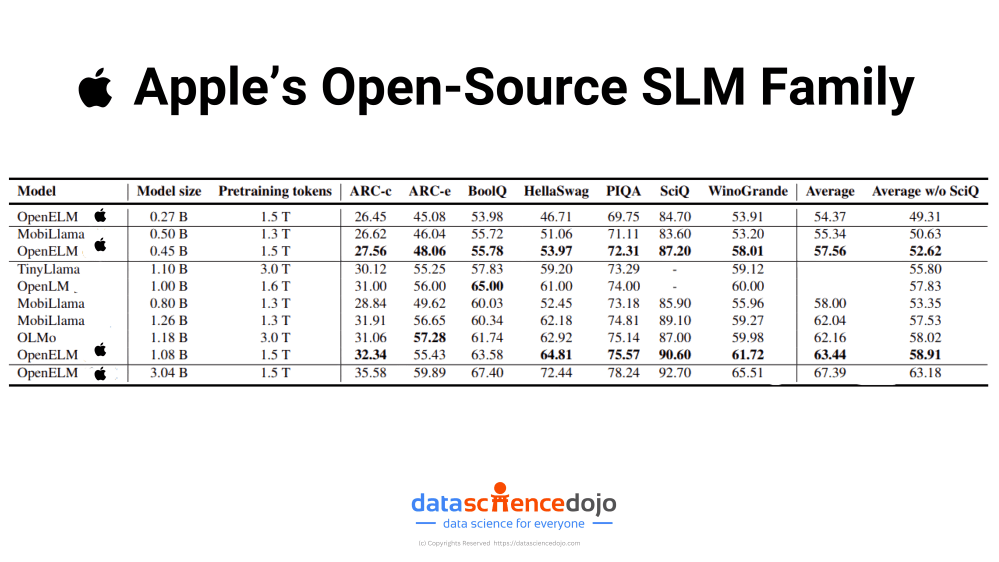

OpenELM is a family of small language models developed by Apple. OpenELM models are particularly appealing for applications where resource efficiency is critical. OpenELM is open-source, offering transparency and the opportunity for the wider research community to modify and adapt the models as needed.

Performance and Capabilities

OpenELM models are small and open-source, but they do not necessarily match the top-tier performance of larger, closed-source models. They achieve moderate accuracy across various benchmarks and may lag behind on more complex or nuanced tasks. For example, OpenELM is more accurate than comparable open models such as OLMo, but the margin is modest: Apple reports a 2.36% accuracy improvement at roughly one billion parameters.

Why Does OpenELM Matter?

OpenELM represents a strategic move by Apple to integrate state-of-the-art generative AI directly into its hardware ecosystem, including laptops and smartphones.

By embedding these efficient models into devices, Apple can potentially offer enhanced on-device AI capabilities without the need to constantly connect to the cloud.

This not only improves functionality in areas with poor connectivity but also aligns with increasing consumer demands for privacy and data security, as processing data locally minimizes the risk of exposure over networks.

Furthermore, embedding OpenELM into Apple’s products could give the company a significant competitive advantage by making its devices smarter and more capable of handling complex AI tasks independently of the cloud.

This can transform user experiences, offering more responsive and personalized AI interactions directly on their devices. The move could set a new standard for privacy in AI, appealing to privacy-conscious consumers and potentially reshaping consumer expectations in the tech industry.

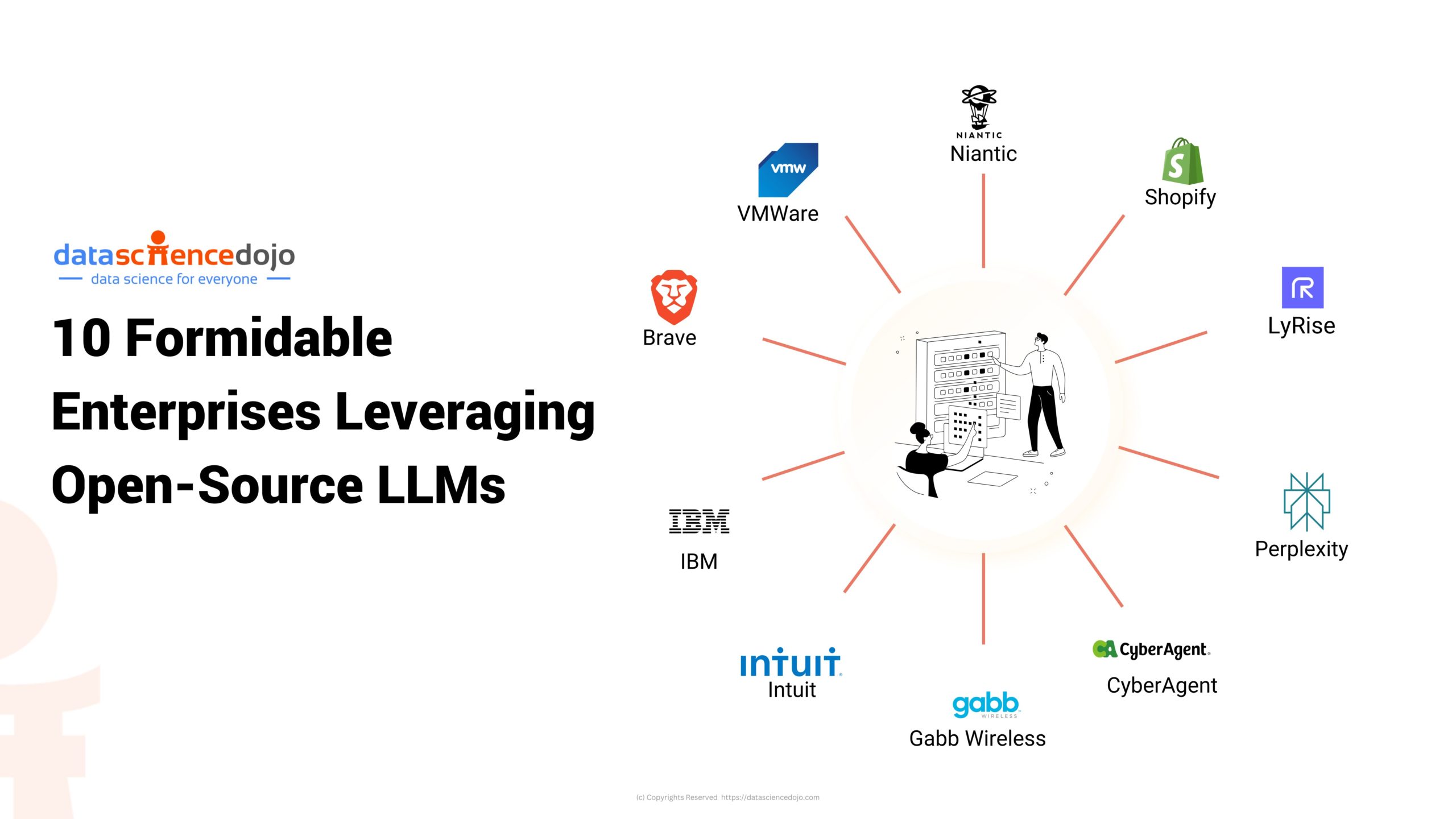

The Future of Small Language Models

As we dive deeper into the capabilities and strategic implementations of small language models, it’s clear that the evolution of AI is leaning heavily towards efficiency and integration. Companies like Apple, Microsoft, and Google are pioneering this shift by embedding advanced AI directly into everyday devices, enhancing user experience while upholding stringent privacy standards.

This approach not only meets the growing consumer demand for powerful, yet private technology solutions but also sets a new paradigm in the competitive landscape of tech companies.