Neural networks have emerged as a transformative force across various sectors, revolutionizing industries such as healthcare, finance, and automotive technology.

Artificial neural networks (ANNs) are computational models loosely inspired by the human brain. They solve complex problems and perform tasks that were once thought to require human intelligence.

The effectiveness of neural networks largely hinges on the quality and quantity of data used for training, underlining the significance of robust datasets in achieving high-performing models.

Ongoing research and development signal a future where neural network applications could expand even further, potentially uncovering new ways to address global challenges and drive progress in the digital age.

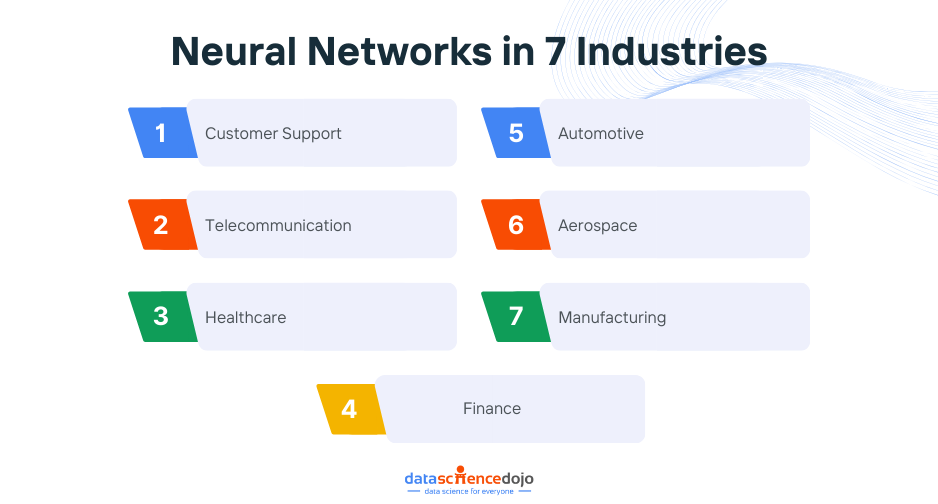

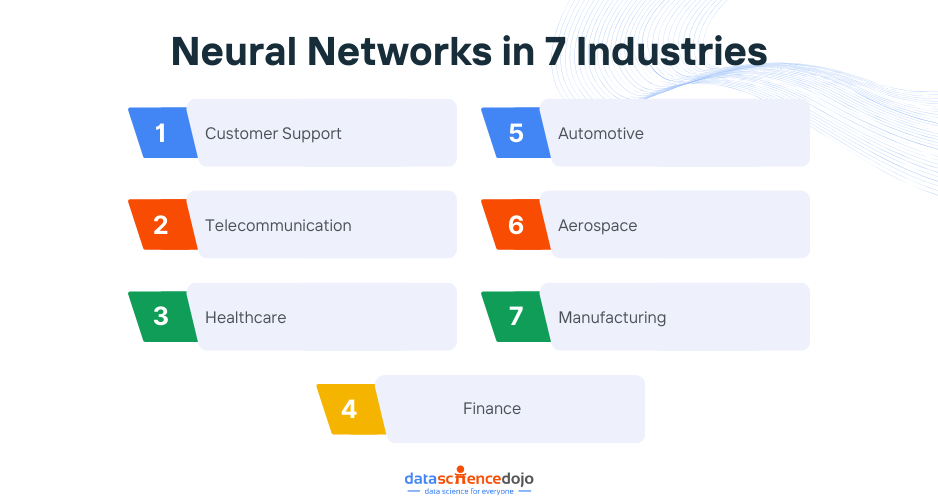

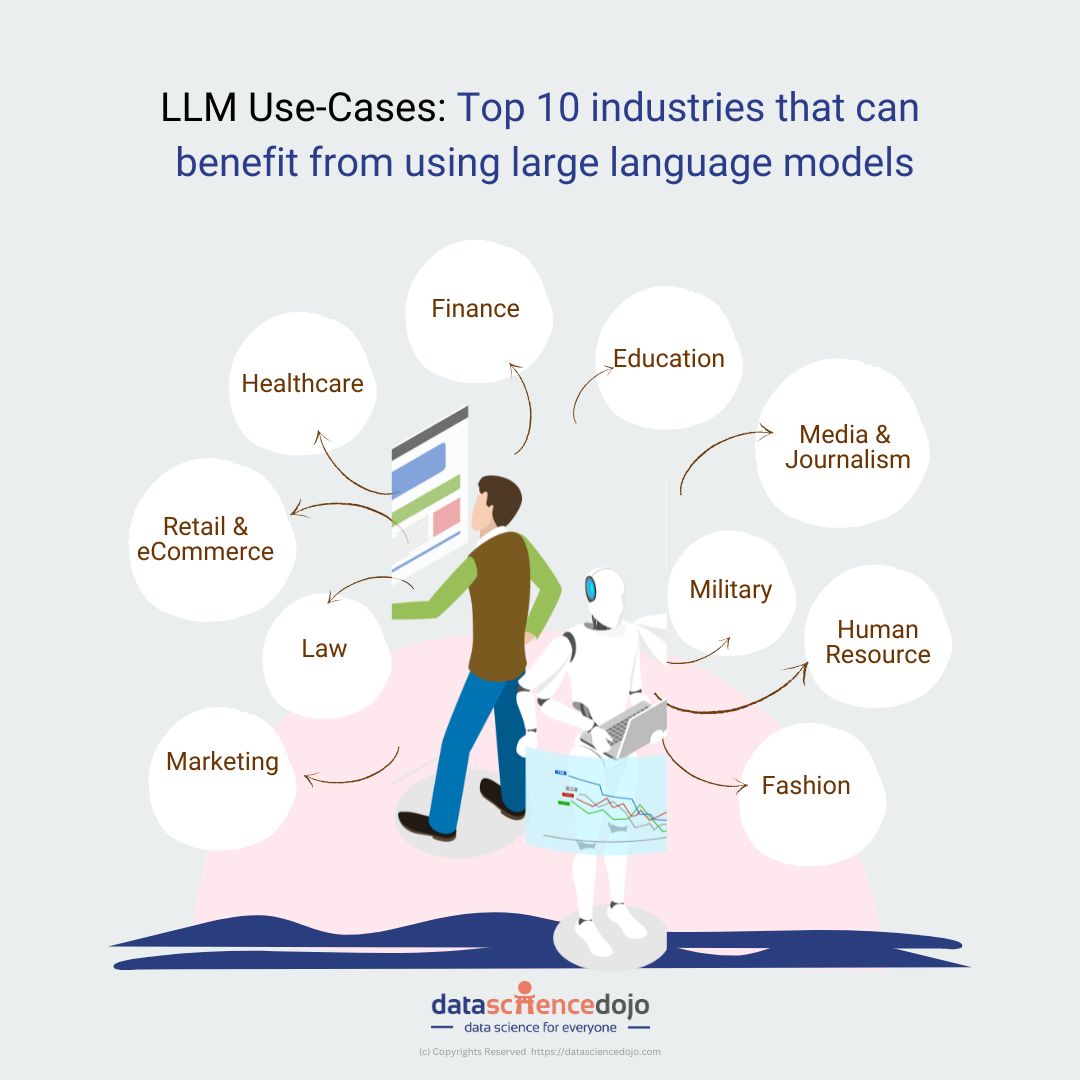

7 Industry Applications of Neural Networks

In this blog, we explore current applications of neural networks across 7 industries and the aspects of each that they enhance.

Customer Support

Chat Systems

Neural networks have transformed customer support through chat systems. By analyzing customer queries and past conversations, they pick up on context and return relevant, accurate responses.

This technology, known as natural language processing (NLP), enables chatbots to interact with customers in a conversational manner, providing instant and personalized support.
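To make the idea concrete, here is a minimal sketch of routing a customer message to the right queue. It assumes a single perceptron over bag-of-words features, which is far simpler than the language models behind production chatbots. The example messages, the labels `billing` and `technical`, and the function names are all made up for illustration.

```python
def tokenize(text):
    """Lowercase a message and split it on whitespace."""
    return text.lower().split()

def train_perceptron(examples, epochs=20):
    """Learn one weight per word; +1 means billing, -1 means technical."""
    weights, bias = {}, 0
    for _ in range(epochs):
        mistakes = 0
        for text, label in examples:
            target = 1 if label == "billing" else -1
            words = set(tokenize(text))
            score = bias + sum(weights.get(w, 0) for w in words)
            predicted = 1 if score > 0 else -1
            if predicted != target:
                # Perceptron rule: nudge the weights toward the correct label.
                for w in words:
                    weights[w] = weights.get(w, 0) + target
                bias += target
                mistakes += 1
        if mistakes == 0:
            break  # every training message is classified correctly
    return weights, bias

def classify(text, weights, bias):
    """Return the predicted queue for a new message."""
    score = bias + sum(weights.get(w, 0) for w in set(tokenize(text)))
    return "billing" if score > 0 else "technical"

# Hypothetical past conversations, invented for this sketch.
history = [
    ("I was charged twice on my invoice", "billing"),
    ("please refund my payment", "billing"),
    ("my invoice shows the wrong amount", "billing"),
    ("the app crashes on login", "technical"),
    ("I cannot reset my password", "technical"),
    ("error message when I open the app", "technical"),
]

weights, bias = train_perceptron(history)
print(classify("refund for my invoice", weights, bias))            # billing
print(classify("the app shows an error on login", weights, bias))  # technical
```

A real chat system would replace the word counts with learned embeddings and a much larger network, but the loop is the same: predict, compare against past interactions, and adjust the weights.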

Continuous Improvement

These systems learn from extensive datasets of customer interactions, empowering businesses to address inquiries efficiently, from basic FAQs to complex troubleshooting. Companies like Talla use neural networks to enhance their AI-driven customer support solutions.

Proactive Support

Neural networks anticipate potential issues based on historical interactions, improving the overall customer experience and reducing churn rates. This proactive approach ensures that businesses can address problems before they escalate, maintaining customer satisfaction.

Telecommunication

Network Operations

Neural networks boost telecommunications performance, reliability, and service offerings by managing and controlling network operations. They also power intelligent customer service solutions like voice recognition systems.

Data Compression and Optimization

Neural networks optimize network functionality by compressing data for efficient transmission and acting as equalizers for clear signals. This improves communication experiences for users and ensures optimal network performance even under heavy load conditions.
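One classic form of equalizer is a single linear neuron trained with the least-mean-squares (LMS) rule. The sketch below assumes that setup. The channel model (each symbol blurred by half of the previous one), the tap count, and the step size are illustrative assumptions, not values from any real network.

```python
import random

def lms_equalizer(received, desired, taps=5, mu=0.05):
    """Train a single linear neuron (an FIR filter) with the LMS rule.

    Returns the final weights and the squared error at each step.
    """
    weights = [0.0] * taps
    window = [0.0] * taps  # most recent received samples, newest first
    sq_errors = []
    for r, d in zip(received, desired):
        window = [r] + window[:-1]
        output = sum(w * s for w, s in zip(weights, window))
        error = d - output
        # LMS update: move each weight along the error-weighted input.
        weights = [w + mu * error * s for w, s in zip(weights, window)]
        sq_errors.append(error * error)
    return weights, sq_errors

rng = random.Random(0)  # fixed seed so the run is repeatable
symbols = [rng.choice((-1.0, 1.0)) for _ in range(2000)]

# Hypothetical channel: each symbol is smeared by half of the previous one.
received = [s + 0.5 * prev for s, prev in zip(symbols, [0.0] + symbols[:-1])]

weights, sq_errors = lms_equalizer(received, symbols)
early = sum(sq_errors[:100]) / 100
late = sum(sq_errors[-100:]) / 100
print(f"mean squared error, first 100 symbols: {early:.3f}")
print(f"mean squared error, last 100 symbols:  {late:.4f}")
```

The error shrinks as the neuron learns to undo the smearing, which is the sense in which a trained network can "clean up" a signal.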

Enhanced Communication

Neural networks enable real-time translation of spoken languages. For example, Google Translate uses neural networks to deliver translations almost instantly.

The same technology excels at pattern recognition tasks such as facial, speech, and handwriting recognition, which helps make communication smoother across languages and regions.

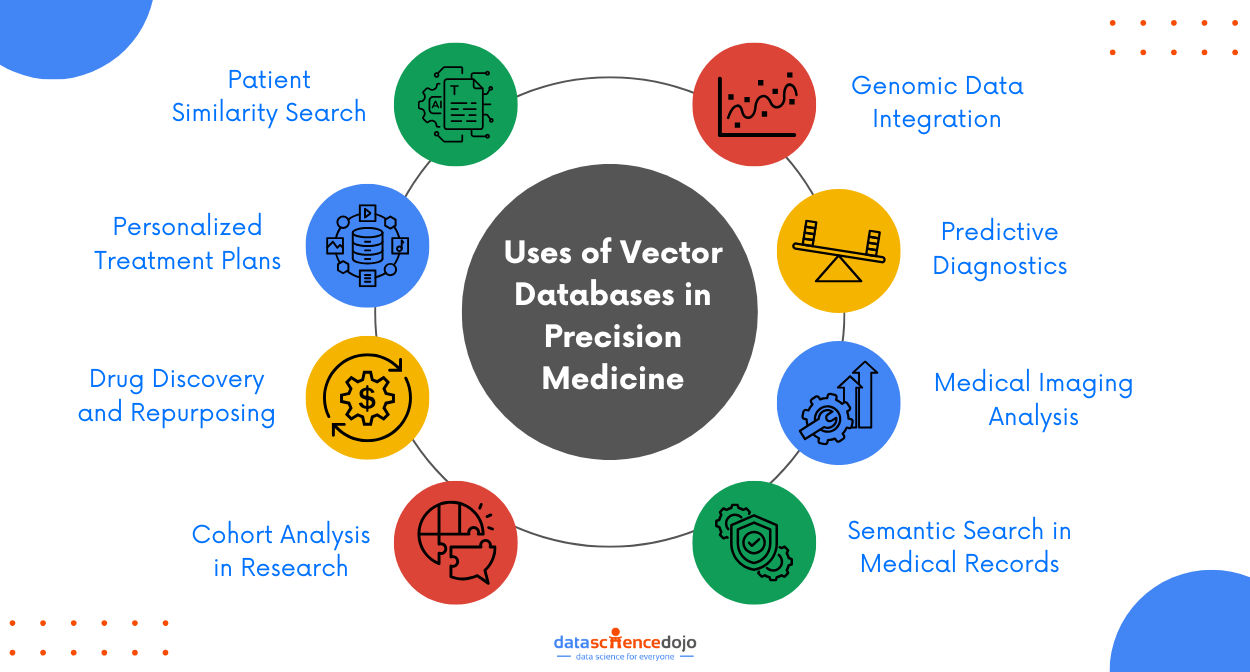

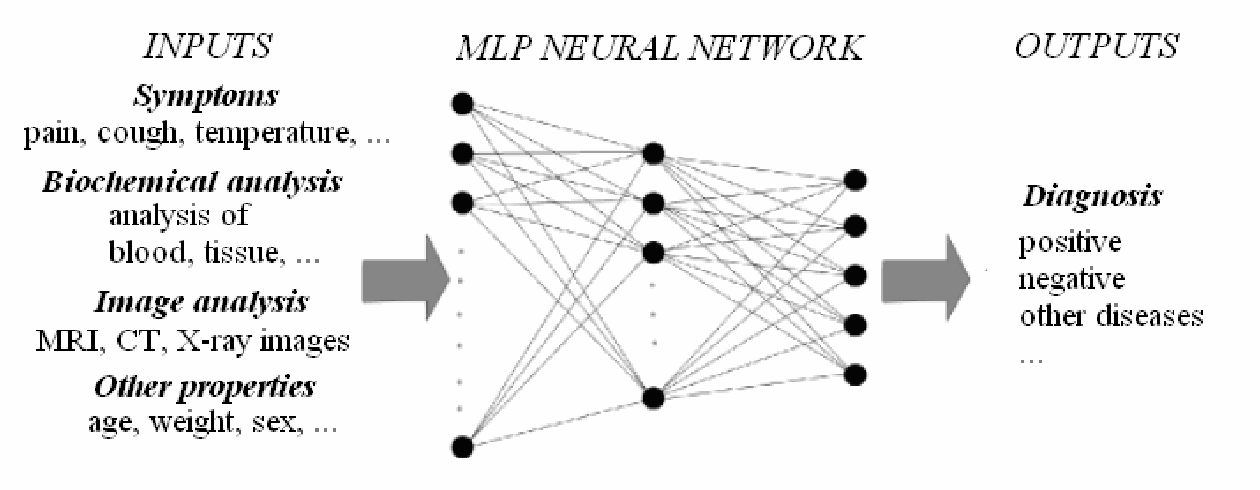

Healthcare

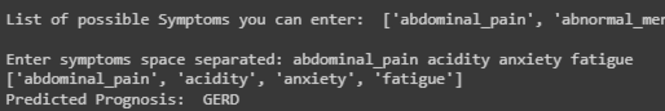

Medical Diagnosis

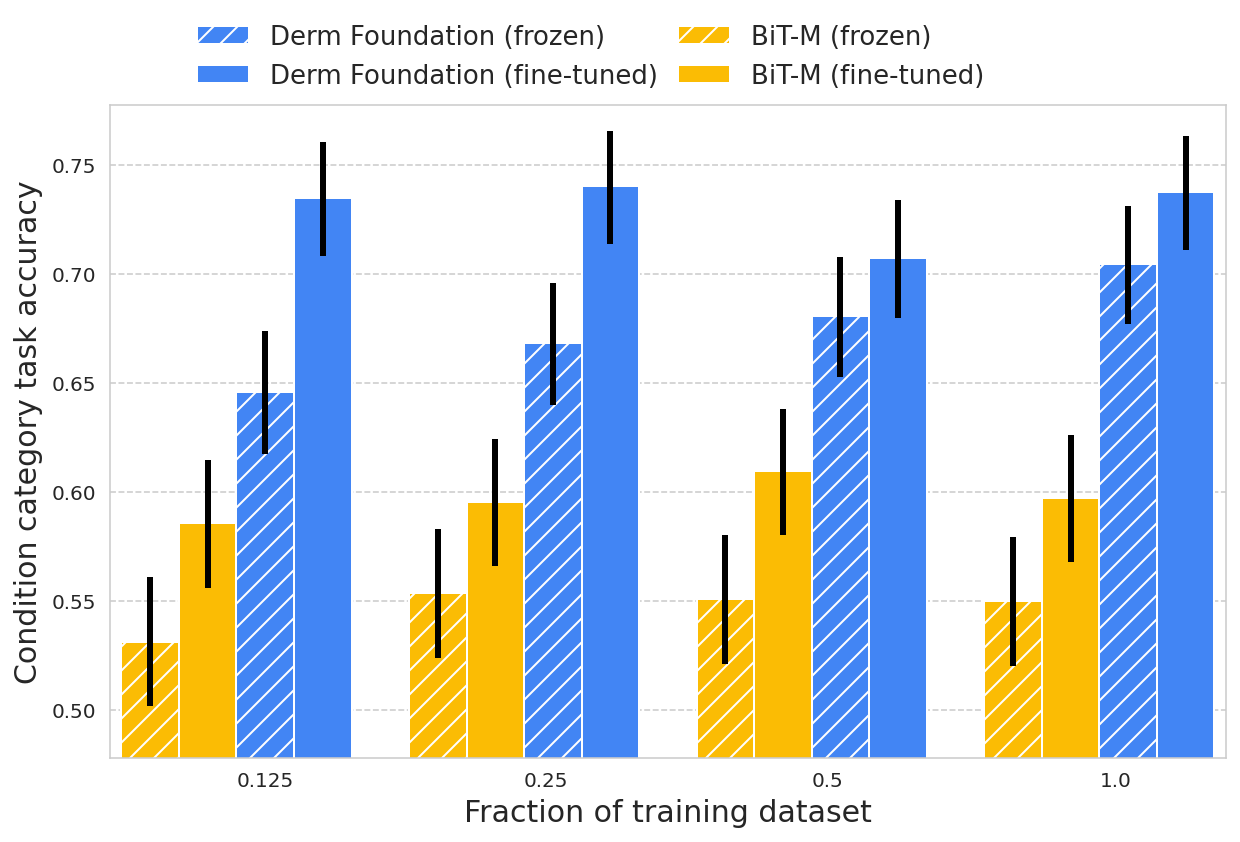

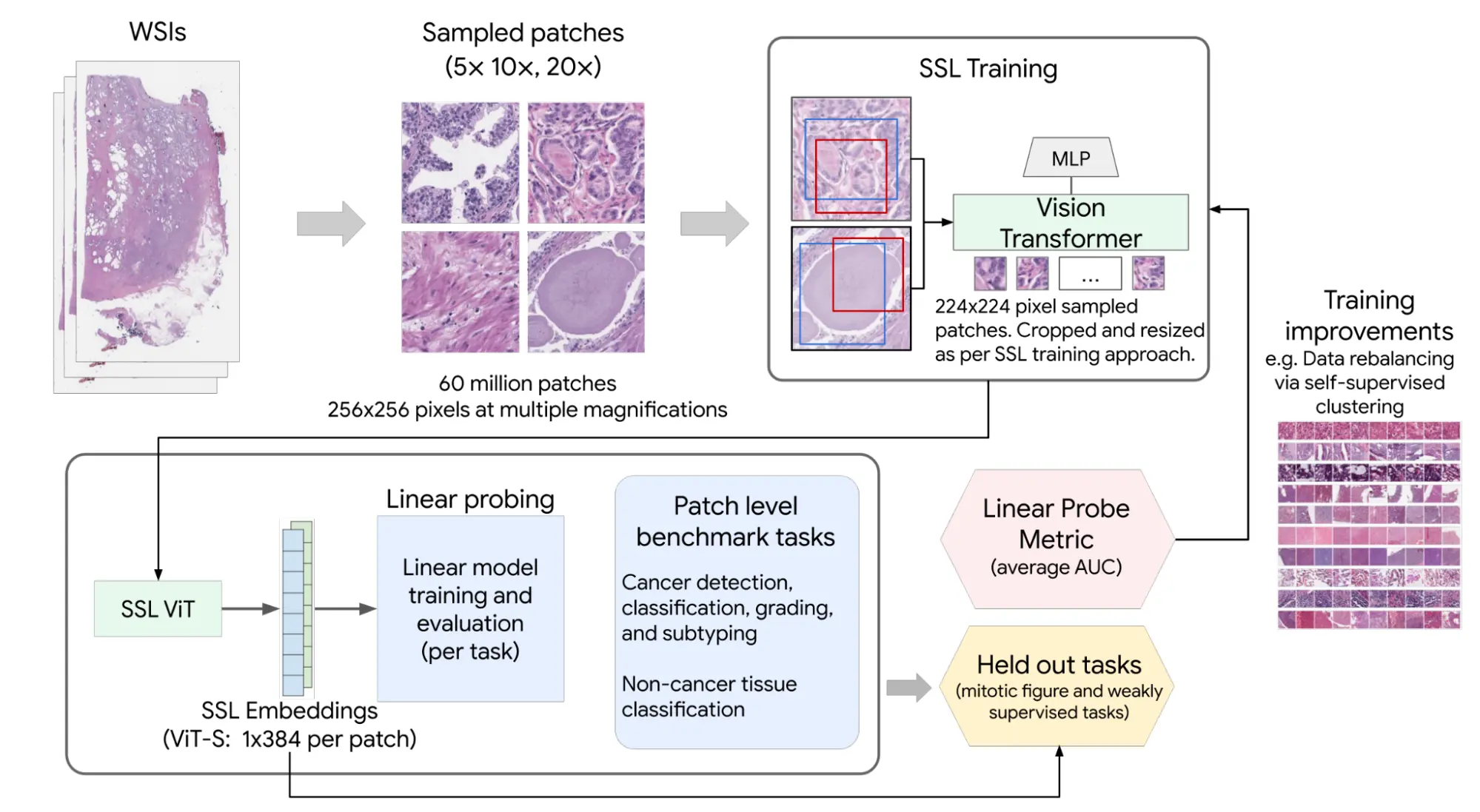

Neural networks drive advancements in medical diagnosis and treatment, such as skin cancer detection. Advanced algorithms can distinguish tissue growth patterns, enabling early and accurate detection of skin cancer.

For instance, SkinVision, an app that uses neural networks for skin cancer detection, has a reported sensitivity of 94% and specificity of 80%, a detection rate described as higher than that of most dermatologists.
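The two figures quoted above come from a confusion matrix: sensitivity is the share of actual cancers the model catches, and specificity is the share of benign cases it correctly clears. The short sketch below shows the arithmetic. The counts are hypothetical, chosen only so that they reproduce the quoted percentages; they are not SkinVision's data.

```python
def sensitivity_specificity(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # actual positives that were flagged
    specificity = tn / (tn + fp)  # actual negatives that were cleared
    return sensitivity, specificity

# Hypothetical counts: 100 malignant lesions (94 caught, 6 missed)
# and 100 benign lesions (80 cleared, 20 false alarms).
sens, spec = sensitivity_specificity(tp=94, fn=6, tn=80, fp=20)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
# prints: sensitivity=0.94, specificity=0.80
```

High sensitivity matters most in screening, because a missed cancer is costlier than a false alarm that a dermatologist can rule out.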

Personalized Medicine

Neural networks analyze genetic information and patient data to forecast treatment responses, enhancing treatment effectiveness and minimizing adverse effects. IBM Watson is one example: it uses neural networks to analyze cancer patient data and suggest personalized treatment plans, tailoring interventions to individual patient needs.

Medical Imaging

Neural networks analyze data from MRI and CT scans to identify abnormalities like tumors with high precision, speeding up diagnosis and treatment planning. This capability reduces the time required for medical evaluations and increases the accuracy of diagnoses.

Drug Discovery

Neural networks predict interactions between chemical compounds and biological targets, reducing the time and costs associated with bringing new drugs to market. This accelerates the development of new treatments and helps researchers focus on candidates likely to be both effective and safe.

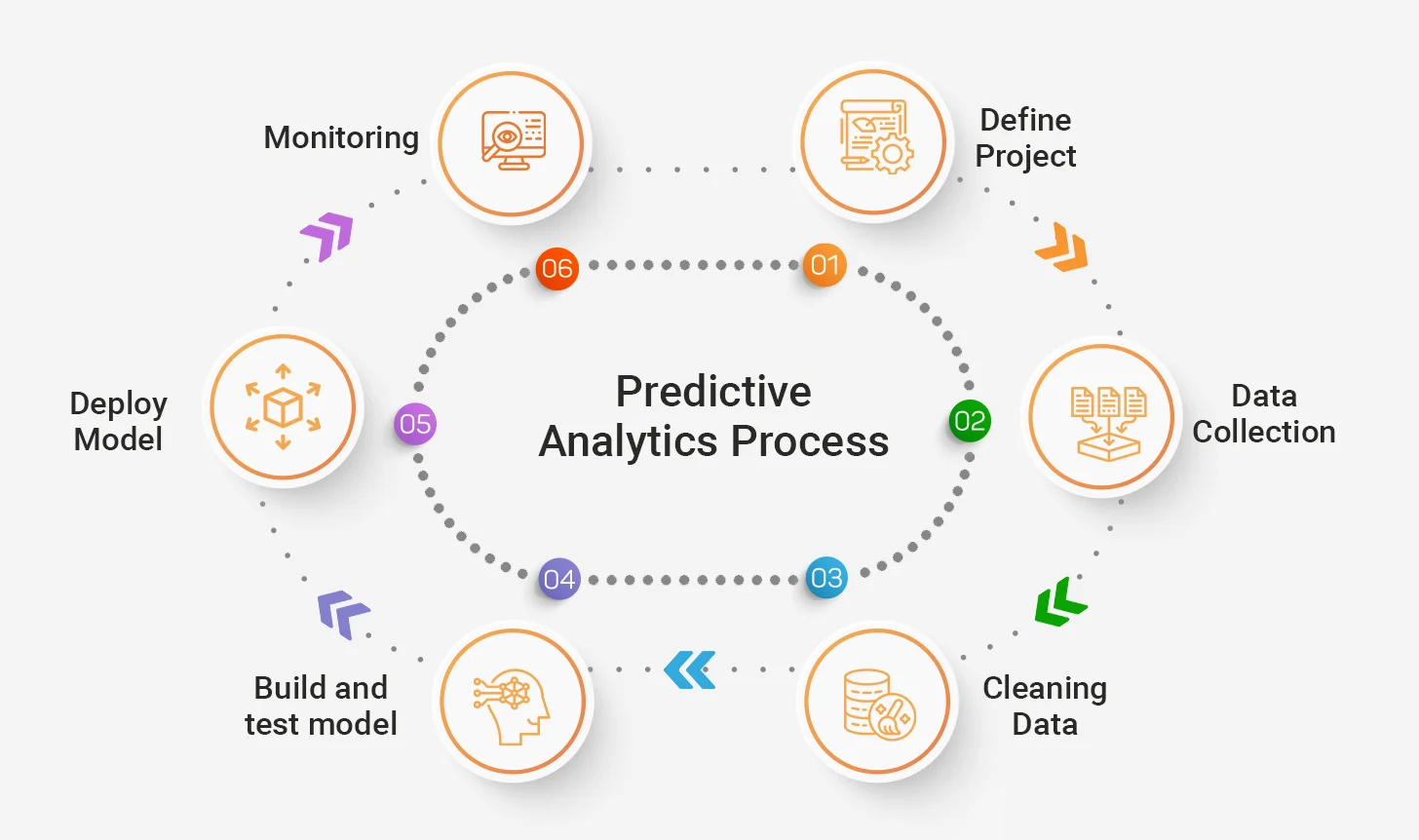

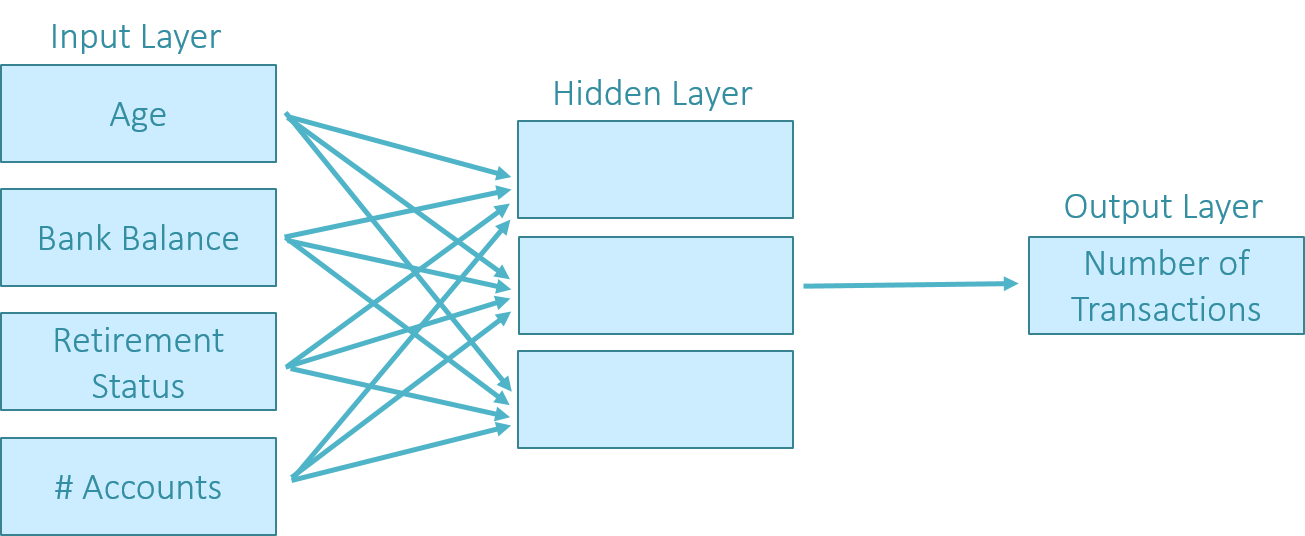

Finance

Stock Market Prediction

Deep neural networks analyze historical stock data to forecast market trends, helping investors make informed decisions. Hedge funds use neural networks to predict stock performance and optimize investment strategies.

Fraud Detection

Neural networks scrutinize transaction data in real time to flag suspicious activities, safeguarding financial institutions from potential losses. Companies like MasterCard and PayPal use neural networks to detect and prevent fraudulent transactions.

Risk Assessment

Neural networks evaluate factors such as credit history and income levels to predict the likelihood of default, helping lenders make sound loan approval decisions. This capability ensures that financial institutions can manage risk effectively while providing services to eligible customers.
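As a rough illustration of the scoring step, the sketch below trains a single sigmoid neuron (the smallest possible neural network, equivalent to logistic regression) to estimate default probability. The applicant data, the 0 to 1 feature scaling, and the function names are invented for this example; a real lender would use far more data and features.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_neuron(samples, labels, lr=0.5, epochs=2000):
    """Fit a single sigmoid neuron with batch gradient descent on log loss."""
    n_features = len(samples[0])
    weights = [0.0] * n_features
    bias = 0.0
    m = len(samples)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(samples, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            err = p - y  # gradient of log loss w.r.t. the pre-activation
            for i, xi in enumerate(x):
                grad_w[i] += err * xi
            grad_b += err
        weights = [w - lr * g / m for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / m
    return weights, bias

def default_probability(x, weights, bias):
    """Estimated probability that an applicant defaults."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

# Toy, made-up applicants: (credit score scaled to 0-1, income scaled to 0-1).
applicants = [(0.9, 0.8), (0.8, 0.6), (0.7, 0.9),
              (0.3, 0.2), (0.2, 0.4), (0.4, 0.1)]
defaulted = [0, 0, 0, 1, 1, 1]  # 1 = the applicant defaulted

weights, bias = train_neuron(applicants, defaulted)
print("strong applicant:", round(default_probability((0.85, 0.7), weights, bias), 3))
print("weak applicant:  ", round(default_probability((0.25, 0.3), weights, bias), 3))
```

The lender would then compare the predicted probability against a risk threshold of its choosing; the neuron only supplies the estimate.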

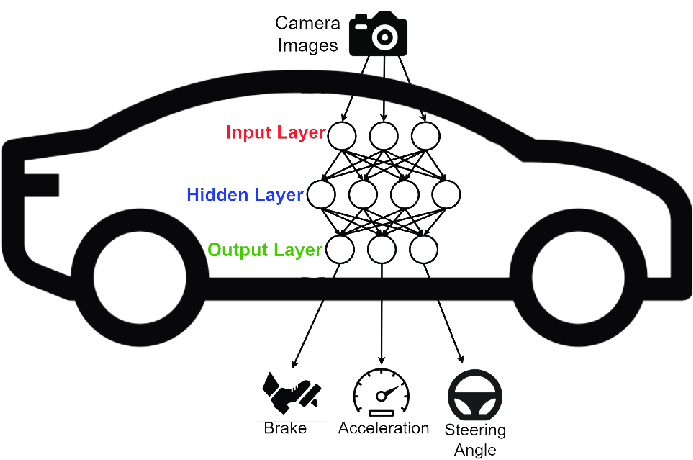

Automotive

Autonomous Vehicles

The automotive industry uses neural networks in autonomous vehicles. These networks interpret sensor data to make real-time driving decisions, supporting safe and efficient navigation. Tesla and Waymo are examples of companies using neural networks in autonomous driving technologies.

Traffic Management

Neural networks help manage traffic and prevent accidents by predicting congestion, optimizing signal timings, and providing real-time hazard information. This leads to smoother traffic flow and reduces the likelihood of traffic-related incidents.

Vehicle Maintenance

Neural networks predict mechanical failures before they occur, facilitating timely repairs and prolonging vehicle lifespan. This proactive approach helps manufacturers like BMW maintain vehicle reliability and performance.

Aerospace

Fault Detection

Neural networks detect faults in aircraft components before they become problems, minimizing the risk of in-flight failures. This enhances the safety and reliability of air travel by ensuring that potential issues are addressed promptly.

Autopilot Systems

Neural networks also enhance autopilot systems by continually learning and adapting, contributing to smoother and more efficient flight. This reduces the workload on human pilots and improves flight stability and safety.

Flight Path Optimization

Neural networks simulate various flight paths, allowing engineers to test and optimize routes for maximum safety and fuel efficiency. This capability helps in planning efficient flight operations and reducing operational costs.

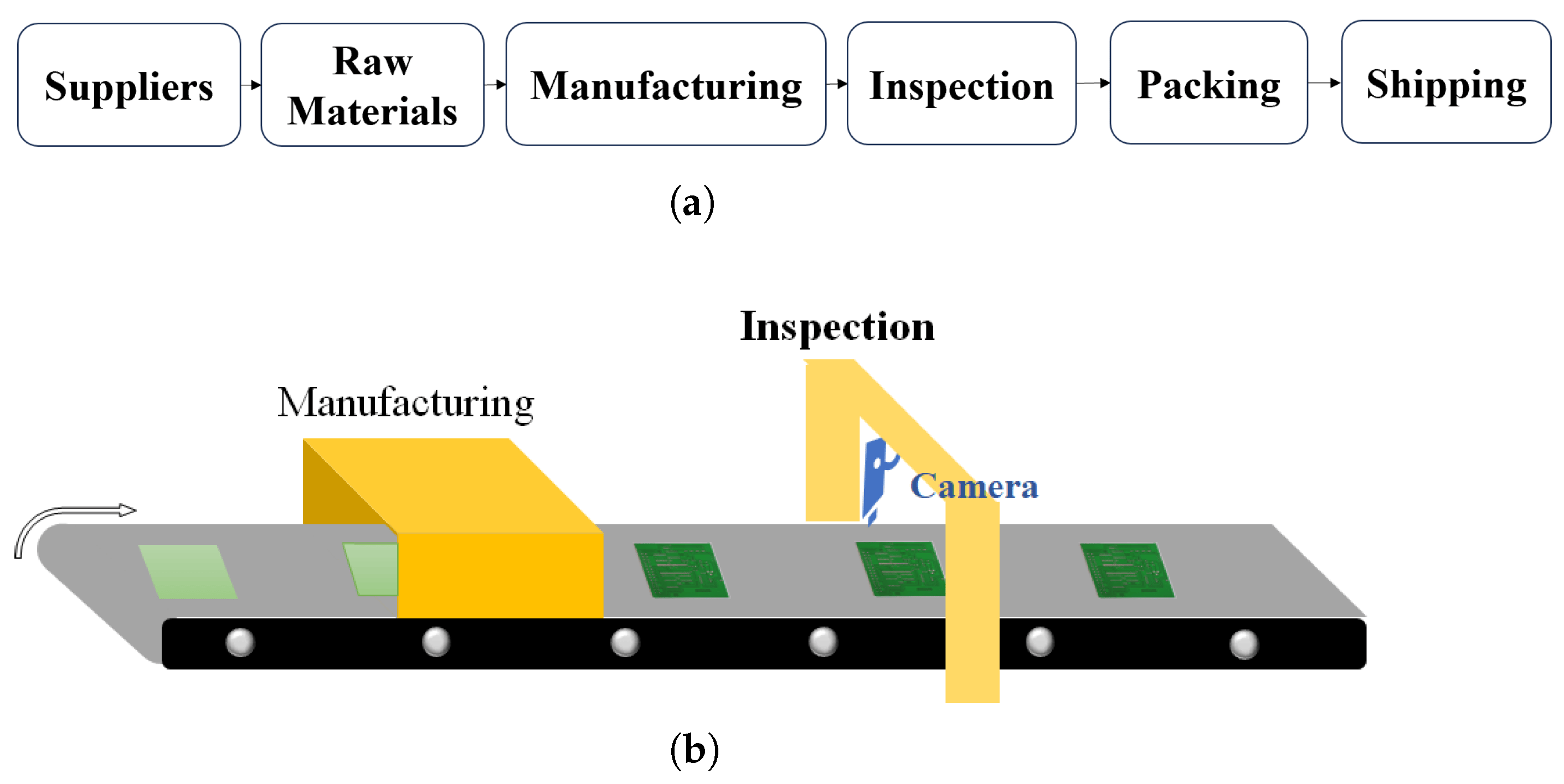

Manufacturing

Process Optimization

Neural networks help design new chemicals, optimize production processes, and predict the quality of finished products. This leads to better product design and fewer defects. Companies like General Electric use neural networks to enhance their manufacturing processes.

Predictive Maintenance

Neural networks can also identify potential equipment problems before they cause costly downtime, allowing for proactive maintenance and saving time and money. Companies like Unilever use this application to maintain operational efficiency.

Quality Inspection

Neural networks monitor production in real time, ensuring consistent quality. They can even inspect visual aspects like welds, freeing up human workers for more complex tasks. This technology is widely used in the automotive and electronics industries.

What are the Future Applications of Neural Networks?

Integration with AI and Robotics

Combining neural networks with other AI techniques and robotics creates advanced autonomous systems capable of performing intricate tasks with human-like intelligence. This integration enhances productivity by allowing robots to adapt to new situations and learn from their environment.

Such systems can perform complex operations in manufacturing, healthcare, and defense, significantly improving efficiency and accuracy.

Virtual Reality

Integration with virtual reality (VR) technologies fosters more immersive and interactive experiences in fields such as entertainment and education. By leveraging neural networks, VR systems can create realistic simulations and responsive environments, providing users with a deeper sense of presence.

This technology is also being used in professional training programs to simulate real-world scenarios, enhancing learning outcomes.

Environmental Monitoring

Neural networks analyze data from sensors and satellites to predict natural disasters, monitor deforestation, and track climate change patterns. By giving decision-makers accurate and timely information, they help mitigate environmental impacts and preserve ecosystems.

As neural networks continue to expand into new domains, they offer innovative solutions to pressing challenges, shaping the future and creating new opportunities for growth.