In the rapidly evolving landscape of artificial intelligence, Large Language Models (LLMs) have emerged as powerful tools for tasks like natural language understanding, question answering, and text generation.

However, harnessing their full potential can be complex and challenging. This is where orchestration frameworks come into play. These frameworks simplify the development and deployment of LLM-based applications, enhancing their performance and reliability.

In this blog, we’ll explore two prominent orchestration frameworks, LangChain and LlamaIndex, and discuss how they can streamline your AI projects.

LangChain and Orchestration Frameworks

LangChain is an open-source framework for building applications on top of LLMs. Rather than hosting or scaling the models themselves, it coordinates the calls an application makes to them. Its main features include:

- A common interface for interacting with different LLM providers

- Chains that combine prompts, model calls, and output parsing into multi-step pipelines

- Agents, tools, and memory for calling external APIs and keeping context across multiple LLM calls

LlamaIndex is another open-source framework for building LLM applications, with a focus on connecting LLMs to your own data. It overlaps with LangChain in several areas, such as:

- A simple API

- Integrations with multiple LLM providers

- Query and chat interfaces for LLM-powered applications

However, LlamaIndex also has some features that make it well suited to data-heavy applications, such as:

- Data connectors that ingest documents from many different sources

- Indexes built over your data, so that the passages relevant to a query can be retrieved efficiently and passed to the LLM

Both LangChain and LlamaIndex are powerful orchestration frameworks for building LLM applications. The best framework for a particular application will depend on the specific requirements of that application.

In addition to LangChain and LlamaIndex, there are other LLM orchestration frameworks available, such as Haystack and Semantic Kernel. Names like Bard, Megatron, Megatron-Turing NLG, and OpenAI Five sometimes appear in lists like this, but they are models or AI systems rather than orchestration frameworks. Frameworks differ in their features and capabilities, so it is important to choose the one that best meets the needs of your application.

LlamaIndex and LangChain: Orchestrating LLMs

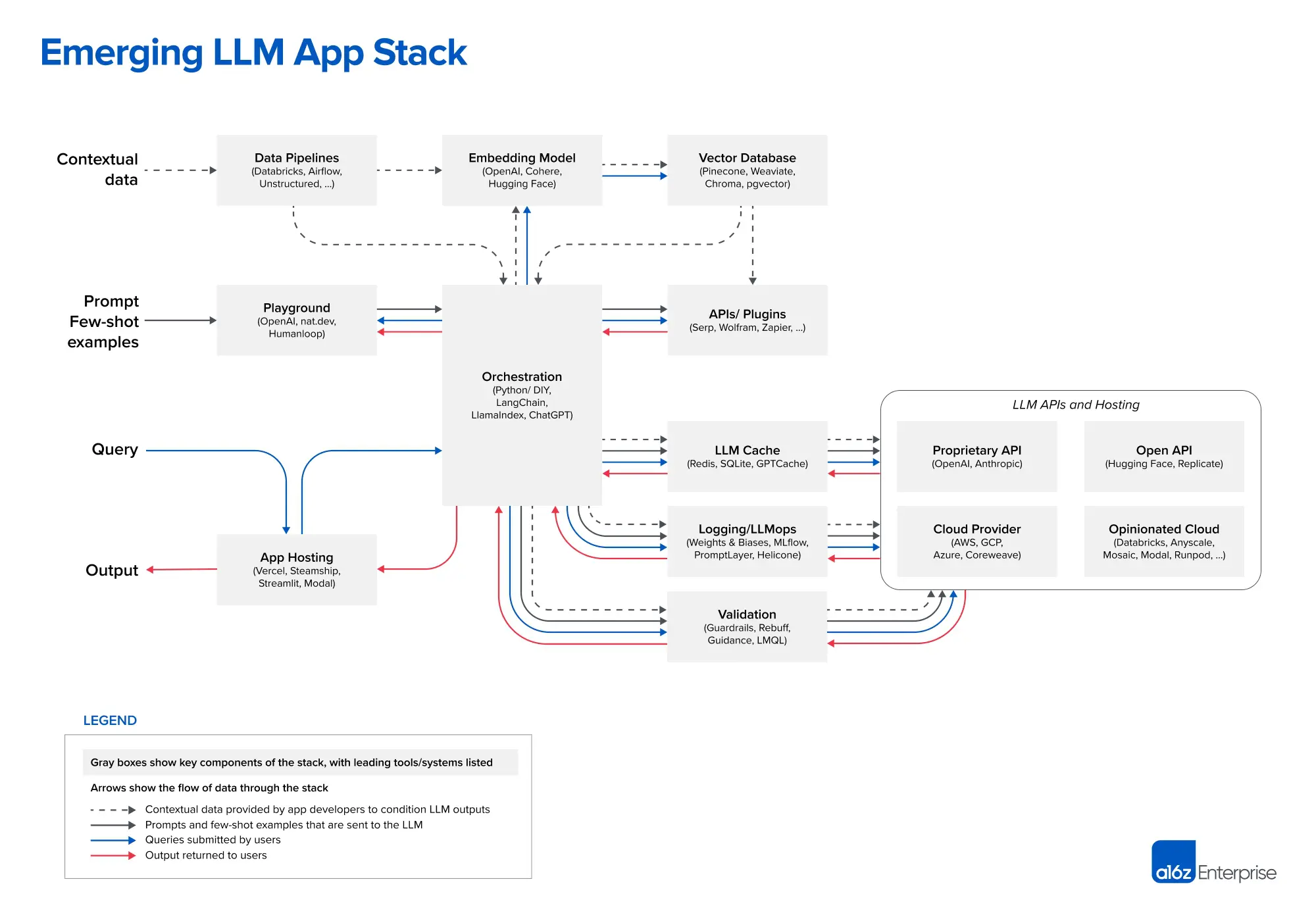

The venture capital firm Andreessen Horowitz (a16z) identifies both LlamaIndex and LangChain as orchestration frameworks that abstract away the complexities of prompt chaining, enabling seamless data querying and management between applications and LLMs. This orchestration process encompasses interactions with external APIs, retrieval of contextual data from vector databases, and maintaining memory across multiple LLM calls.
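The three responsibilities named above (retrieving context, building the prompt, and keeping memory across calls) can be illustrated with a small sketch. This is not LangChain's or LlamaIndex's API: `echo_llm`, `lookup`, and `Orchestrator` are hypothetical names, the model call is a stand-in that only reports how many lines it received, and the retriever is a dictionary lookup where a real system would query a vector database.

```python
from typing import Callable, List


def echo_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: reports the prompt size."""
    return f"(model saw {len(prompt.splitlines())} lines)"


def lookup(question: str) -> List[str]:
    """Hypothetical retriever; a real one would query a vector database."""
    facts = {"refund": "Refunds are issued within 14 days."}
    return [text for key, text in facts.items() if key in question.lower()]


class Orchestrator:
    """Builds each prompt from memory, retrieved context, and the question."""

    def __init__(self, llm: Callable[[str], str],
                 retrieve: Callable[[str], List[str]]) -> None:
        self.llm = llm
        self.retrieve = retrieve
        self.memory: List[str] = []  # earlier turns, oldest first

    def build_prompt(self, question: str) -> str:
        lines = list(self.memory)
        lines += [f"Context: {c}" for c in self.retrieve(question)]
        lines.append(f"User: {question}")
        return "\n".join(lines)

    def ask(self, question: str) -> str:
        answer = self.llm(self.build_prompt(question))
        # Store the turn so the next call can see it.
        self.memory += [f"User: {question}", f"Assistant: {answer}"]
        return answer


bot = Orchestrator(echo_llm, lookup)
first = bot.ask("What is the refund policy?")
second = bot.ask("And for digital goods?")
print(first)
print(second)
```

The second prompt is longer than the first only because the earlier turn is replayed from memory, which is the kind of bookkeeping an orchestration framework takes off your hands.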

LlamaIndex: A Data Framework for the Future

LlamaIndex distinguishes itself by combining custom data with LLMs without the need for fine-tuning: relevant data is retrieved and placed into the prompt at query time. It describes itself as a “simple, flexible data framework for connecting custom data sources to large language models.” It also accommodates a wide range of data types, which makes it suitable for diverse data needs.

Continuous evolution: LlamaIndex 0.7.0

LlamaIndex is a dynamic and evolving framework. Its creator, Jerry Liu, recently released version 0.7.0, which focuses on enhancing modularity and customizability to facilitate the development of LLM applications that leverage your data effectively. This release underscores the commitment to providing developers with tools to architect data structures for LLM applications.

The LlamaIndex Ecosystem: LlamaHub

At the core of LlamaIndex lies LlamaHub, a data ingestion platform that plays a pivotal role in getting started with the framework. LlamaHub offers a library of data loaders and readers, making data ingestion a seamless process. Notably, LlamaHub is not exclusive to LlamaIndex; it can also be integrated with LangChain, expanding its utility.

Navigating the LlamaIndex Workflow

Users of LlamaIndex typically follow a structured workflow:

- Parsing Documents into Nodes

- Constructing an Index (from Nodes or Documents)

- Optional Advanced Step: Building Indices on Top of Other Indices

- Querying the Index

At query time, the “query” is the input. LlamaIndex uses it to retrieve the relevant nodes from the index and passes them, together with the query, to the LLM. The details can be complex, but this step forms the foundation of LlamaIndex’s functionality.

In essence, LlamaIndex empowers users to feed pertinent information into an LLM prompt selectively. Instead of overwhelming the LLM with all custom data, LlamaIndex allows users to extract relevant information for each query, streamlining the process.
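The workflow above (parse documents into nodes, build an index, query it, and pass only the relevant nodes to the LLM) can be sketched with the standard library alone. This is an illustration of the idea, not LlamaIndex's implementation: the function names are hypothetical, nodes are simply paragraphs, and relevance is plain word overlap where a real index would typically use embeddings.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def parse_into_nodes(document: str) -> List[str]:
    """Step 1: split a document into nodes (here, one per paragraph)."""
    return [p.strip() for p in document.split("\n\n") if p.strip()]


def build_index(nodes: List[str]) -> Dict[str, Set[int]]:
    """Step 2: map each word to the ids of the nodes that contain it."""
    index: Dict[str, Set[int]] = defaultdict(set)
    for node_id, node in enumerate(nodes):
        for word in tokenize(node):
            index[word].add(node_id)
    return index


def query(index: Dict[str, Set[int]], nodes: List[str],
          question: str, top_k: int = 1) -> List[str]:
    """Step 3: rank nodes by how many words they share with the question."""
    scores: Dict[int, int] = defaultdict(int)
    for word in tokenize(question):
        for node_id in index.get(word, ()):
            scores[node_id] += 1
    best = sorted(scores, key=lambda i: (-scores[i], i))[:top_k]
    return [nodes[i] for i in best]


document = (
    "Invoices are sent on the first day of each month.\n\n"
    "Support is available by email on weekdays.\n\n"
    "Passwords must be rotated every ninety days."
)
question = "How often must passwords be rotated?"

nodes = parse_into_nodes(document)
index = build_index(nodes)
relevant = query(index, nodes, question)

# Only the selected node goes into the prompt, not the whole document.
prompt = "Context:\n" + "\n".join(relevant) + f"\n\nQuestion: {question}"
print(prompt)
```

Because only the top-ranked node is placed in the prompt, the unrelated paragraphs about invoices and support never reach the model.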

Power of LlamaIndex and LangChain

LlamaIndex seamlessly integrates with LangChain, offering users flexibility in data retrieval and query management. It extends the functionality of data loaders by treating them as LangChain Tools and providing Tool abstractions to use LlamaIndex’s query engine alongside a LangChain agent.
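To make the "query engine as a tool" idea concrete, here is a minimal sketch of a tool abstraction. It does not use LangChain's or LlamaIndex's actual classes: `Tool`, `docs_query_engine`, `calculator`, and `choose_tool` are hypothetical, and the routing rule is a toy. A real agent would ask the LLM to choose a tool based on the tool descriptions.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Tool:
    name: str
    description: str  # what a real agent would show the LLM when choosing
    run: Callable[[str], str]


def docs_query_engine(question: str) -> str:
    """Hypothetical stand-in for an index-backed query engine."""
    return f"docs answer for: {question}"


def calculator(expression: str) -> str:
    """Hypothetical second tool: adds whitespace-separated integers."""
    return str(sum(int(part) for part in expression.split()))


def choose_tool(tools: List[Tool], request: str) -> Tool:
    """Toy routing rule: digits-only requests go to the calculator."""
    wants_math = all(part.isdigit() for part in request.split())
    wanted = "calculator" if wants_math else "docs"
    return next(tool for tool in tools if tool.name == wanted)


tools = [
    Tool("docs", "Answers questions about company documents", docs_query_engine),
    Tool("calculator", "Adds integers", calculator),
]

math_tool = choose_tool(tools, "2 40")
docs_tool = choose_tool(tools, "What is our refund policy?")
print(math_tool.name, math_tool.run("2 40"))
print(docs_tool.name, docs_tool.run("What is our refund policy?"))
```

Wrapping the query engine behind the same interface as every other tool means the agent does not need to know that one of its tools is backed by an index.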

Real-World Applications: Context-Augmented Chatbots

LlamaIndex and LangChain can be combined to build context-rich chatbots: LlamaIndex retrieves the relevant passages from your data, while LangChain manages the conversation and the calls to the LLM, so the chatbot can give responses grounded in that context.

This comprehensive exploration unveils the potential of LlamaIndex, offering insights into its evolution, features, and practical applications.

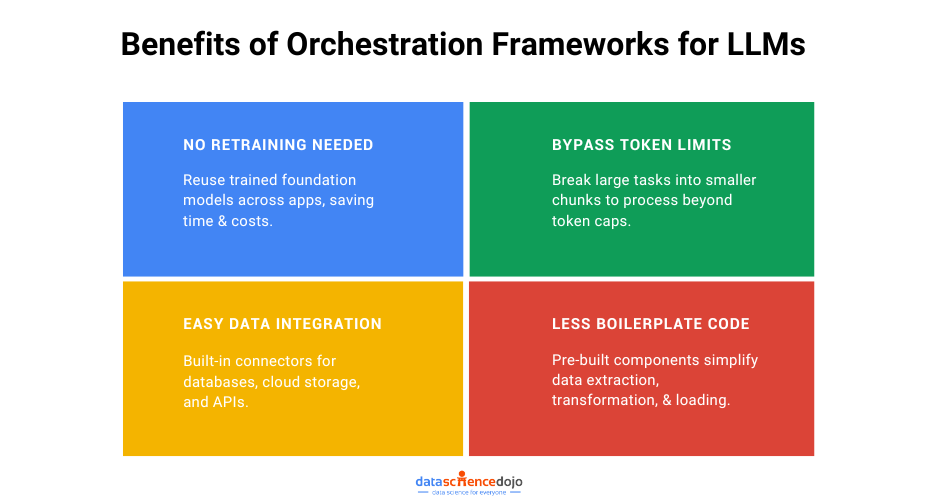

Why are Orchestration Frameworks Needed?

Data orchestration frameworks are essential for building applications on enterprise data because they help to:

- Eliminate the need for foundation model retraining: Foundation models are large models trained on massive datasets of text and code. They can perform a variety of tasks, such as generating text, translating languages, and answering questions. However, they are expensive to train and retrain. Orchestration frameworks reduce the need for retraining by supplying your own data to an already-trained model at query time, so the same model can be reused across multiple applications.

- Overcome token limits: Foundation models have token limits, which restrict how much text can be processed in a single request. Orchestration frameworks help you work within these limits by splitting large documents into smaller chunks and sending only the relevant ones, or by breaking large tasks into smaller subtasks that are processed separately.

- Provide connectors for data sources: Orchestration frameworks typically provide connectors for a variety of data sources, such as databases, cloud storage, and APIs. This makes it easy to connect your data pipeline to the data sources that you need.

- Reduce boilerplate code: Orchestration frameworks can help to reduce boilerplate code by providing a variety of pre-built components for common tasks, such as data extraction, transformation, and loading. This allows you to focus on the business logic of your application.
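As an illustration of the token-limit point in the list above, the sketch below splits a long text into chunks that each fit a budget, with an optional overlap so that content spanning a boundary appears in both neighbouring chunks. The function name `chunk_by_tokens` and its parameters are assumptions made for this example, and whitespace-separated words only approximate model tokens. A real pipeline would count with the model's own tokenizer.

```python
from typing import List


def chunk_by_tokens(text: str, max_tokens: int, overlap: int = 0) -> List[str]:
    """Split text into chunks of at most max_tokens whitespace-separated words.

    Consecutive chunks share `overlap` words.
    """
    if max_tokens <= 0 or not 0 <= overlap < max_tokens:
        raise ValueError("need max_tokens > 0 and 0 <= overlap < max_tokens")
    words = text.split()
    step = max_tokens - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_tokens]))
        if start + max_tokens >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


text = " ".join(f"w{i}" for i in range(10))
chunks = chunk_by_tokens(text, max_tokens=4, overlap=1)
for chunk in chunks:
    print(chunk)
```

Each chunk can then be processed in its own request, or indexed so that only the chunks relevant to a query are sent to the model.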

Popular Orchestration Frameworks

Beyond LLM-specific tools, there are a number of popular general-purpose workflow orchestration frameworks, which schedule and monitor data pipelines rather than LLM calls, including:

- Prefect is an open-source orchestration framework that is written in Python. It is known for its ease of use and flexibility.

- Airflow is an open-source orchestration framework that is written in Python. It is widely used in the enterprise and is known for its scalability and reliability.

- Luigi is an open-source orchestration framework that is written in Python. It is known for its simplicity and performance.

- Dagster is an open-source orchestration framework that is written in Python. It is known for its extensibility and modularity.

Choosing the Right Orchestration Framework

When choosing an orchestration framework, there are a number of factors to consider, such as:

- Ease of use: The framework should be easy to use and learn, even for users with no prior experience with orchestration.

- Flexibility: The framework should be flexible enough to support a wide range of data pipelines and workflows.

- Scalability: The framework should be able to scale to meet the needs of your organization, even as your data volumes and processing requirements grow.

- Reliability: The framework should be reliable and stable, with minimal downtime.

- Community support: The framework should have a large and active community of users and contributors.

Conclusion

Orchestration frameworks are essential for building applications on enterprise data. They can help to eliminate the need for foundation model retraining, overcome token limits, connect to data sources, and reduce boilerplate code. When choosing an orchestration framework, consider factors such as ease of use, flexibility, scalability, reliability, and community support.