Data engineering tools are specialized software applications or frameworks designed to simplify and optimize the process of managing, processing, and transforming large volumes of data. These tools provide data engineers with the necessary capabilities to efficiently extract, transform, and load (ETL) data, build scalable data pipelines, and prepare data for further analysis and consumption by other applications.

By offering a wide range of features, such as data integration, transformation, and quality management, data engineering tools help ensure that data is structured, reliable, and ready for decision-making.

Data engineering tools also enable workflow orchestration, automate tasks, and provide data visualization capabilities, making it easier for teams to manage complex data processes. In today’s data-driven world, these tools are essential for building efficient, effective data pipelines that support business intelligence, analytics, and overall data strategy.

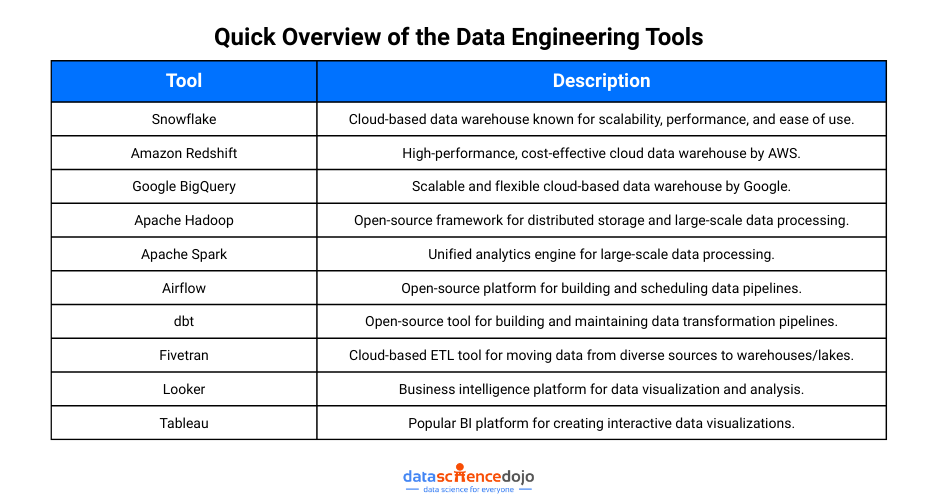

Top 10 data engineering tools

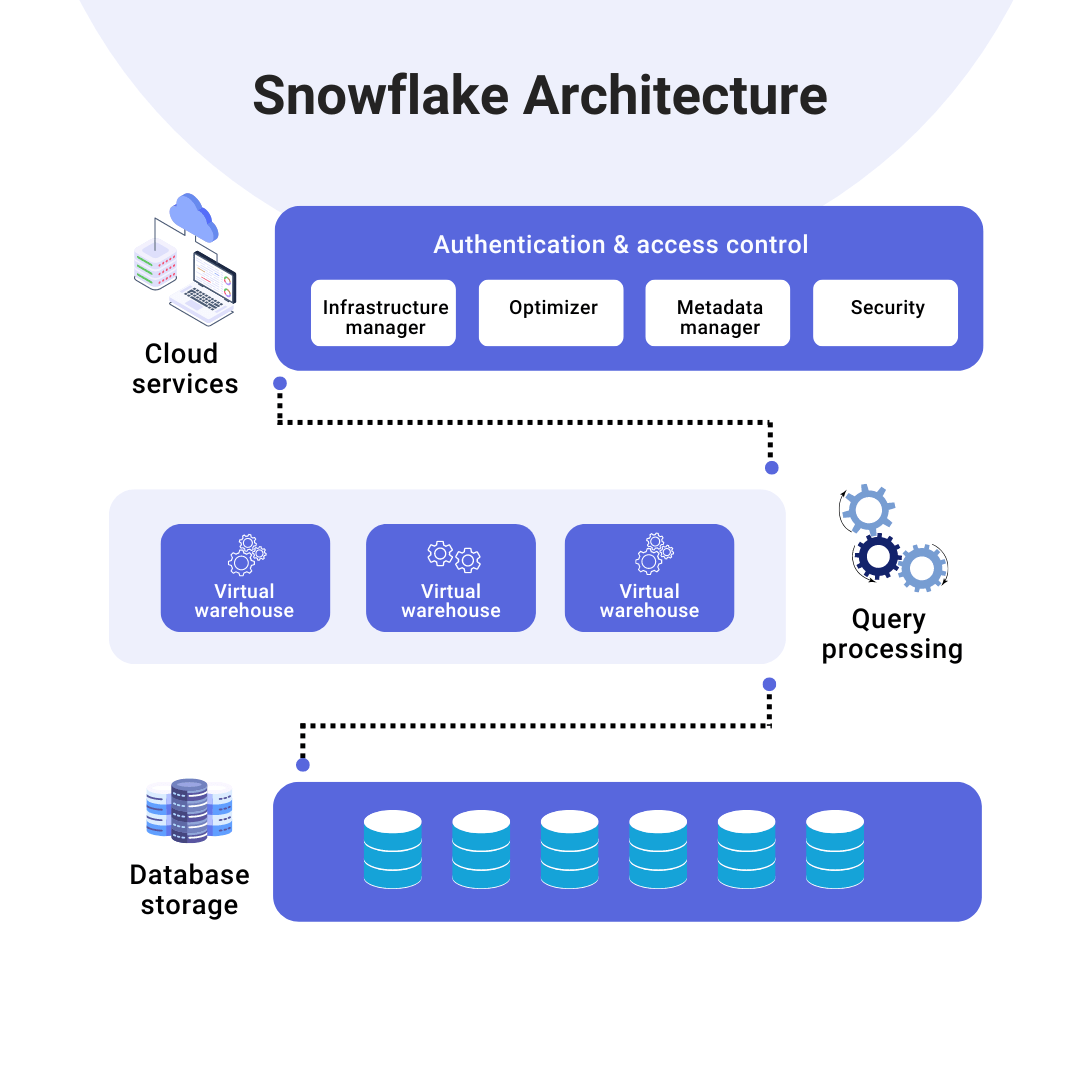

1. Snowflake

Snowflake is a cloud-based data warehouse platform that offers scalability, performance, and ease of use. Its architecture separates storage and compute, allowing for flexible scaling. It supports various data types and features advanced capabilities like multi-cluster warehouses and data sharing, making it ideal for large-scale data analysis. Snowflake’s ability to support structured and semi-structured data (like JSON) makes it versatile for various business use cases.

In addition, Snowflake provides a secure and collaborative environment with features like real-time data sharing and automatic scaling. Its native support for data sharing across organizations allows users to securely share data between departments or with external partners. Snowflake’s fully managed service eliminates the need for infrastructure management, allowing organizations to focus more on data analysis.

2. Amazon Redshift

Amazon Redshift is a powerful cloud data warehouse service known for its high performance and cost-effectiveness. It uses massively parallel processing (MPP) for fast query execution and integrates seamlessly with AWS services. Redshift supports various data workflows, enabling efficient data analysis. Its architecture is designed to scale for petabytes of data, ensuring optimal performance even with large datasets.

Amazon Redshift also offers robust security features, such as encryption at rest and in transit, to ensure the protection of sensitive data. Additionally, its integration with other AWS tools like S3 and Lambda makes it easier for data engineers to create end-to-end data processing pipelines. Redshift’s advanced compression capabilities also help reduce storage costs while enhancing data retrieval speed.

3. Google BigQuery

Google BigQuery is a serverless cloud-based data warehouse designed for big data analytics. It offers scalable storage and compute capabilities with fast query performance. BigQuery integrates with Google Cloud services, making it an excellent choice for data engineers working on large datasets and advanced analytics. It supports a fully managed environment, reducing the need for manual infrastructure management.

One of BigQuery’s key strengths is its ability to run SQL queries over very large datasets quickly. It also offers BigQuery ML, which lets users build and train machine learning models with SQL directly in the platform, without exporting data. This makes BigQuery useful for both data storage and predictive analytics.

4. Apache Hadoop

Apache Hadoop is an open-source framework for distributed storage and processing of large datasets. With its Hadoop Distributed File System (HDFS) and MapReduce, it enables fault-tolerant and scalable data processing. Hadoop is ideal for batch processing and handling large, unstructured data. It is widely used for processing log files, social media feeds, and large data dumps.

Beyond HDFS and MapReduce, Hadoop has a rich ecosystem that includes tools like Hive for querying large datasets and Pig for data transformation. It also integrates with Apache HBase, a NoSQL database for real-time data storage, enhancing its capabilities for large-scale data applications. Hadoop is a go-to solution for enterprises dealing with vast amounts of unstructured data from a variety of sources.
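To make the MapReduce model concrete, here is a minimal single-process sketch in plain Python. It is not Hadoop's API and it does not distribute any work. It only imitates the three stages (map, shuffle, reduce) on a few in-memory log lines. The function names and the sample data are assumptions chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group emitted values by key, the step that sits between map and reduce."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Collapse all values seen for one key into a single count."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over the input lines."""
    mapped = (pair for line in lines for pair in map_phase(line))
    return dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())


if __name__ == "__main__":
    # Hypothetical log lines standing in for a much larger dataset.
    logs = ["error disk full", "warn disk slow", "error network down"]
    print(word_count(logs))
```

In a real cluster the map and reduce functions run in parallel on many machines, and the framework handles the shuffle, retries, and storage. The shape of the computation is the same.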

5. Apache Spark

Apache Spark is a fast, open-source analytics engine for big data processing. It provides in-memory processing and supports multiple programming languages, including Python, Java, and Scala. Spark handles both batch and streaming workloads, with built-in libraries for machine learning and graph processing. Because it can keep intermediate data in memory, Spark is often faster than disk-based engines such as Hadoop MapReduce.

Spark also integrates well with other big data technologies, such as Hadoop, and can run on multiple platforms, from standalone clusters to cloud environments. Its unified framework means that users can execute SQL queries, run machine learning algorithms, and perform data analytics all within the same environment, making it an essential tool for modern data engineering workflows.

6. Apache Airflow

Apache Airflow is an open-source platform for orchestrating and managing data workflows. Using Directed Acyclic Graphs (DAGs), Airflow enables scheduling and dependency management of data tasks. It integrates with other tools, providing flexibility to automate complex data pipelines. Airflow also supports real-time monitoring and logging, which helps data engineers track the status and health of workflows.

Airflow’s extensibility is another significant advantage, as it allows users to create custom operators, hooks, and sensors to interact with different data sources or services. It has a strong community and ecosystem, which continuously contributes to its development and improvement. With its ability to automate and manage workflows across multiple systems, Airflow has become a key tool in modern data engineering environments.
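The idea behind DAG-based scheduling can be sketched without Airflow itself. The example below uses Python's standard `graphlib` module, not Airflow's API, to work out which tasks can run and in what order. The task names and the `dependencies` mapping are hypothetical, and the code is only meant to illustrate dependency resolution, not a real scheduler.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Set

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies: Dict[str, Set[str]] = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join_tables": {"extract_orders", "extract_customers"},
    "load_warehouse": {"join_tables"},
    "refresh_dashboard": {"load_warehouse"},
}


def run_order(graph: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into waves; every task in a wave has all dependencies done."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises CycleError if the graph contains a cycle
    waves: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished so dependents unlock
    return waves


def is_valid_dag(graph: Dict[str, Set[str]]) -> bool:
    """Return False when the dependencies loop back on themselves."""
    try:
        run_order(graph)
        return True
    except CycleError:
        return False


if __name__ == "__main__":
    for number, wave in enumerate(run_order(dependencies), start=1):
        print(f"wave {number}: {wave}")
```

Tasks in the same wave have no dependencies on each other, so an orchestrator is free to run them in parallel. The "acyclic" requirement matters because a cycle would leave no valid starting point.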

7. dbt (Data Build Tool)

dbt is an open-source tool for transforming raw data into structured, analytics-ready datasets. It allows for SQL-based transformations, dependency management, and automated testing. dbt is crucial for maintaining data quality and building efficient data pipelines. With dbt, data engineers can write modular SQL queries, ensuring a clear and maintainable transformation process.

Another strength of dbt is how well it fits into version control. A dbt project is a set of plain-text SQL and configuration files, so it can live in Git, where teams collaborate on data models and track changes over time. This keeps the transformation process transparent and reproducible. Additionally, dbt’s testing framework helps data engineers detect issues early, improving the quality and integrity of data pipelines.
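The combination of modular SQL, dependency ordering, and automated tests can be sketched with Python's built-in `sqlite3` module. This is not dbt's API or project layout. The `MODELS` and `TESTS` dictionaries, the table names, and the sample rows are all hypothetical. The sketch follows one convention that dbt also uses: a test is a query that selects the offending rows, so it passes when it returns nothing.

```python
import sqlite3
from typing import Dict, List, Tuple

# Hypothetical models: name -> (models it depends on, SELECT that defines it).
# The downstream model is listed first on purpose, to show ordering by dependency.
MODELS: Dict[str, Tuple[List[str], str]] = {
    "customer_totals": (
        ["stg_orders"],
        "SELECT customer_id, SUM(amount) AS total FROM stg_orders GROUP BY customer_id",
    ),
    "stg_orders": (
        [],
        "SELECT id, customer_id, amount FROM raw_orders WHERE amount IS NOT NULL",
    ),
}

# Hypothetical tests: each query returns the rows that break a rule.
TESTS: Dict[str, str] = {
    "customer_id is unique": (
        "SELECT customer_id FROM customer_totals "
        "GROUP BY customer_id HAVING COUNT(*) > 1"
    ),
    "total is not null": "SELECT customer_id FROM customer_totals WHERE total IS NULL",
}


def build(conn: sqlite3.Connection, models: Dict[str, Tuple[List[str], str]]) -> List[str]:
    """Materialize every model as a table, building dependencies first."""
    built: List[str] = []

    def build_one(name: str) -> None:
        if name in built:
            return
        deps, sql = models[name]
        for dep in deps:
            build_one(dep)
        conn.execute(f"CREATE TABLE {name} AS {sql}")
        built.append(name)

    for name in models:
        build_one(name)
    return built


def run_tests(conn: sqlite3.Connection, tests: Dict[str, str]) -> Dict[str, int]:
    """Return the number of failing rows per test; zero means the test passed."""
    return {name: len(conn.execute(sql).fetchall()) for name, sql in tests.items()}


def demo() -> Tuple[List[str], list, Dict[str, int]]:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE raw_orders (id INTEGER, customer_id INTEGER, amount REAL)")
    conn.executemany(
        "INSERT INTO raw_orders VALUES (?, ?, ?)",
        [(1, 10, 25.0), (2, 10, 5.0), (3, 11, None), (4, 12, 40.0)],
    )
    order = build(conn, MODELS)
    rows = conn.execute(
        "SELECT customer_id, total FROM customer_totals ORDER BY customer_id"
    ).fetchall()
    return order, rows, run_tests(conn, TESTS)


if __name__ == "__main__":
    print(demo())
```

Keeping each transformation as its own small SELECT is what makes the pipeline modular: a change to `stg_orders` flows into `customer_totals` on the next build, and the tests flag it if the result breaks an expectation.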

8. Fivetran

Fivetran is a cloud-based data integration platform that automates the extraction and loading of data, an approach often described as ELT because transformation happens after the data lands in the warehouse. It offers pre-built connectors for various data sources, simplifying the process of loading data into data warehouses, and keeps that data up to date with minimal setup. It also handles schema changes automatically, allowing data engineers to focus on higher-level tasks without worrying about manual updates.

Fivetran’s fully managed service means that users don’t need to deal with the complexity of building and maintaining their own ETL infrastructure. It integrates with major data warehouses like Snowflake and Redshift, ensuring seamless data movement between systems. This ease of integration and automation makes Fivetran a highly efficient tool for modern data engineering workflows.

9. Looker

Looker is a business intelligence platform that lets data teams build interactive dashboards and reports. Its modeling language, LookML, is used to define relationships and metrics in one shared place, which promotes collaboration. Looker integrates with various data platforms and queries data where it is stored in the warehouse, making it a valuable tool for data exploration, visualization, and decision-making.

Additionally, Looker’s semantic modeling layer helps ensure that everyone in the organization uses consistent definitions for metrics and KPIs. This reduces confusion and promotes data-driven decision-making across teams. With its scalable architecture, Looker can handle growing datasets, making it a long-term solution for business intelligence needs.

10. Tableau

Tableau is a popular business intelligence and data visualization tool. It allows users to create interactive, visually engaging dashboards and reports, and its drag-and-drop interface makes it easy to explore and analyze data without writing code. It connects to various data sources, including data warehouses, spreadsheets, and cloud services.

Tableau’s advanced analytics capabilities, such as trend analysis, forecasting, and predictive modeling, make it more than just a visualization tool. It also supports real-time data updates, ensuring that reports and dashboards always reflect the latest information. With its powerful sharing and collaboration features, Tableau allows teams to make data-driven decisions quickly and effectively.

Benefits of Data Engineering Tools

- Efficient Data Management: Easily extract, consolidate, and store large volumes of data while enhancing data quality, consistency, and accessibility.
- Streamlined Data Transformation: Automate the process of converting raw data into structured, usable formats, applying business logic at scale.
- Workflow Orchestration: Schedule, monitor, and manage data pipelines to ensure seamless and automated data workflows.
- Scalability and Performance: Efficiently process growing data volumes with high-speed performance and resource optimization.
- Seamless Data Integration: Connect diverse data sources (cloud, on-premises, or third-party) with minimal effort and configuration.
- Data Governance and Security: Maintain compliance, enforce access controls, and safeguard sensitive information throughout the data lifecycle.
- Collaborative Workflows: Support teamwork by enabling version control, documentation, and structured project organization across teams.

Wrapping up

In summary, data engineering tools are vital for managing, processing, and transforming data efficiently. They streamline workflows, handle big data challenges, and ensure the availability of high-quality data for analysis. These tools enhance scalability, optimize performance, and support seamless integration, making data accessible and reliable for decision-making.

Ultimately, data engineering tools enable organizations to build effective data pipelines and maintain data security, unlocking valuable insights across teams.