Large language models (LLMs) are trained on massive amounts of text to generate creative and contextually relevant content. As enterprises adopt LLMs to handle information effectively, they need to understand the structure behind these powerful tools and the challenges that come with them.

One component that deserves attention is the LLM context window. It plays a crucial role in the development and evolution of LLM technology and shapes the way users interact with information.

In this blog, we will navigate the paradox around LLM context windows and explore possible solutions to overcome the challenges associated with large context windows. However, before we dig deeper into the topic, it’s essential to understand what LLM context windows are and their importance in the world of language models.

What Are LLM Context Windows?

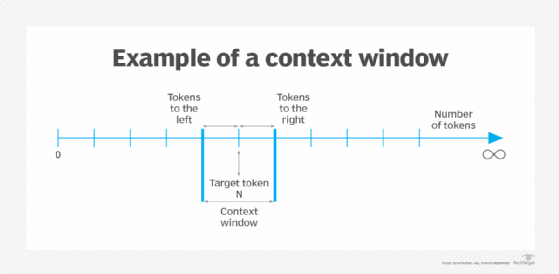

An LLM context window acts like a lens that gives a large language model its perspective: it is the span of text, measured in tokens, that the model can take into account at one time. As a conversation grows, the window shifts so that the most recent prompts and inputs stay in view. In effect, it serves as the LLM’s short-term memory when generating outputs.

The functionality of a context window can be summarized through the following three aspects:

- Focal word – Focuses on a particular word and the surrounding text, usually including a few nearby sentences in the data

- Contextual information – Interprets the meaning and relationship between words to understand the context and provide relevant output for the users

- Window size – Determines the amount of data and contextual information that is quickly accessible to the LLM when generating a response

Thus, context windows base their function on the above aspects to assist LLMs in creating relevant and accurate outputs. These aspects also lay the basis for the context window paradox that we aim to explore here.
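
To make the window size aspect concrete, here is a minimal sketch of checking whether a prompt fits in a window. It assumes that whitespace-separated words stand in for tokens; real models use subword tokenizers, so actual counts will differ. The function names and the 12-token window are hypothetical and chosen only for illustration.

```python
def count_tokens(text: str) -> int:
    # Assumption: one whitespace-separated word counts as one token.
    return len(text.split())


def fits_in_window(prompt: str, window_size: int, reserved_for_output: int = 0) -> bool:
    # The prompt and the space reserved for the reply share the same window.
    return count_tokens(prompt) + reserved_for_output <= window_size


prompt = "Summarize the quarterly report in three short bullet points"
print(count_tokens(prompt))                                             # 9
print(fits_in_window(prompt, window_size=12, reserved_for_output=2))    # True
print(fits_in_window(prompt, window_size=12, reserved_for_output=5))    # False
```

Reserving part of the window for the response is a design choice in this sketch: a prompt that fills the whole window leaves no room for the model to answer.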

What Is the Context Window Paradox?

The context window paradox is a dilemma about the size of context windows. It seems only logical to expect larger context windows to be beneficial, but there are two sides to this argument.

Side One

The first side concerns the benefits of large context windows. With a wider lens, an LLM gets access to more text. It can study more data, form better connections between words, and draw on richer contextual information.

As a result, the LLM generates outputs with better understanding and a more coherent flow of information. A larger window also helps language models handle complex tasks more efficiently.

Side Two

While larger windows give access to more contextual information, they also increase the amount of data an LLM has to process. It becomes harder to separate useful knowledge from irrelevant details, which can overwhelm the LLM and degrade its performance.

This makes the size of the context window a paradoxical matter: users have to find the right trade-off between richer contextual information and high LLM performance. The question becomes how much information is the right amount for an efficient LLM.

Before we elaborate further on the paradox, let’s understand the role and importance of context windows in LLMs.

Why Do Context Windows Matter in LLMs?

LLM context windows are important in ensuring the efficient working of LLMs. Their multifaceted role is described below.

Understanding Language Nuances

The focused perspective of a context window supplies the surrounding information in the data, enabling LLMs to better understand the nuances of language. This helps the model grasp the meaning and intent behind words. It empowers an LLM to perform the following tasks:

Machine Translation

An LLM uses a context window to identify the nuances of language and the contextual information needed to create the most appropriate translation. Taking the whole sentence or paragraph into account, rather than isolated words, leads to more accurate machine translation.

Question Answering

Understanding contextual information is crucial when answering questions. With relevant information on the situation and setting, it is easier to generate an informative answer. Using a context window, LLMs can identify the relevant parts of the conversation and avoid irrelevant tangents.

Coherent Text Generation

LLMs use context windows to generate text that aligns with the preceding information. By analyzing the context, the model can maintain coherence, tone, and overall theme in its response. This is important for tasks like:

Chatbots

Conversational engagement relies on a high level of coherence. This matters particularly in chatbots, where the model needs to remember past interactions within a conversation. With a context window, a chatbot can hold a more natural and engaging conversation.
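
One common way a chatbot keeps past interactions inside its window is to drop the oldest turns once the conversation outgrows the budget. The sketch below illustrates that idea under stated assumptions: words approximate tokens, and `trim_history` and the message format are hypothetical rather than part of any particular chatbot framework.

```python
from collections import deque


def trim_history(messages, max_tokens):
    """Keep the newest messages whose combined size fits the token budget."""
    kept = deque()
    used = 0
    # Walk backwards from the latest message so recent turns are kept first.
    for role, text in reversed(messages):
        cost = len(text.split())  # assumption: one word ~ one token
        if used + cost > max_tokens:
            break
        kept.appendleft((role, text))
        used += cost
    return list(kept)


history = [
    ("user", "hello there"),
    ("assistant", "hi how can I help"),
    ("user", "tell me a joke"),
]
# With a budget of 9 tokens, the oldest message no longer fits.
print(trim_history(history, max_tokens=9))
```

The trade-off is the one the paradox describes: a larger budget keeps more of the conversation, but everything kept must be processed on every turn.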

Creative Textual Responses

LLMs can produce creative content like poems, essays, and other texts. A context window allows an LLM to pick up the desired style and theme from the prompt and any examples it contains, so the creative response is more relevant and accurate.

Contextual Learning

Context is a crucial element for LLMs, and context windows make it more accessible. Analyzing the relevant data with a focus on the words and text of interest allows an LLM to learn and adapt its responses. This is useful for applications like:

Virtual Assistants

Virtual assistants are designed to help users in real time. A context window enables the assistant to remember past requests and preferences so it can provide a more personalized and helpful service.

Open-Ended Dialogues

In ongoing conversations, the context window allows the LLM to track the flow of the dialogue and tailor its responses accordingly.

Hence, context windows act as a lens through which LLMs view and interpret information. The size and effectiveness of this perspective significantly impact the LLM’s ability to understand and respond to language in a meaningful way. This brings us back to the size of a context window and the associated paradox.

The Context Window Paradox: Is Bigger Not Better?

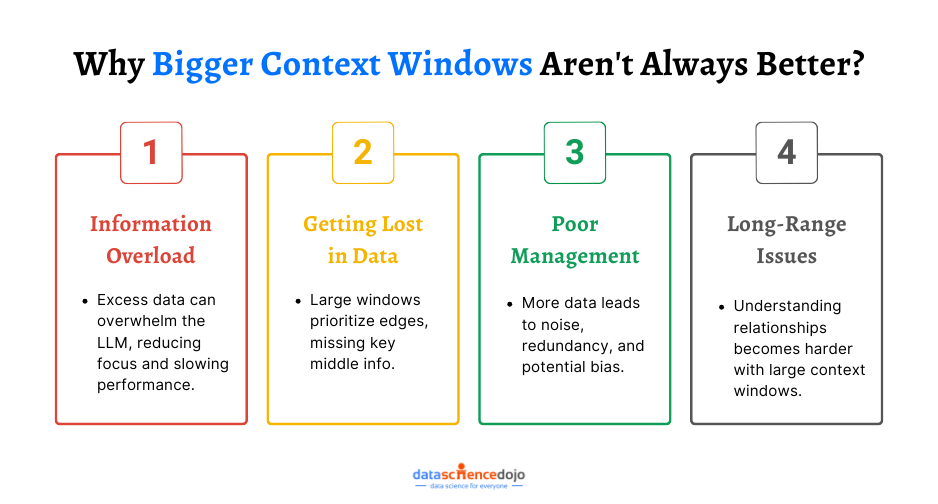

While a bigger context window gives an LLM access to more information and better details for contextual relevance, it comes at a cost. Let’s take a look at some of the drawbacks for LLMs that come with increasing the context window size.

Information Overload

Too much information can overwhelm a language model, just as it can overwhelm a human. A very long input inevitably carries irrelevant material that can distract an LLM.

This makes it harder for the LLM to focus on the key knowledge within the context and to generate effective responses to queries. Moreover, a longer input requires more computational resources, resulting in higher costs and slower LLM performance.
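
A rough piece of arithmetic shows why the computational cost climbs so quickly. The sketch assumes standard dense self-attention, where every token is compared with every other token, so the number of pairwise scores grows with the square of the input length. Models that use sparse or otherwise optimized attention will scale differently, and the token counts below are illustrative only.

```python
def attention_pairs(n_tokens: int) -> int:
    # Dense self-attention scores every token against every token.
    return n_tokens * n_tokens


small, large = 4_000, 32_000
ratio = attention_pairs(large) // attention_pairs(small)
print(f"{large // small}x more tokens -> {ratio}x more pairwise scores")
# 8x more tokens -> 64x more pairwise scores
```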

Getting Lost in Data

Even with a larger window, an LLM can process only a limited amount of information effectively. Across a wide span of data, an LLM tends to focus on the edges: it prioritizes the data at the start and end of the window and misses important information in the middle.
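
One workaround that follows from this behavior is to arrange the input so the most relevant passages sit at the start and end of the window, leaving the least relevant ones in the middle. The sketch below assumes the passages are already ranked from most to least relevant; `reorder_for_edges` is a hypothetical helper, not a library function.

```python
def reorder_for_edges(docs_by_relevance):
    """Place top-ranked items at both ends and low-ranked items in the middle."""
    front, back = [], []
    for i, doc in enumerate(docs_by_relevance):
        # Alternate: ranks 1, 3, 5... fill the front; ranks 2, 4... fill the back.
        if i % 2 == 0:
            front.append(doc)
        else:
            back.append(doc)
    # Reverse the back half so the second-best item ends up last.
    return front + back[::-1]


ranked = ["d1", "d2", "d3", "d4", "d5"]  # d1 is the most relevant
print(reorder_for_edges(ranked))  # ['d1', 'd3', 'd5', 'd4', 'd2']
```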

Moreover, mismanaged truncation to fit a large window size can result in the loss of essential information. As a result, it can compromise the quality of the results produced by the LLM.

Poor Information Management

A wider LLM context window means a larger context that can lead to poor handling and management of information or data. With too much noise in the data, it becomes difficult for an LLM to differentiate between important and unimportant information.

It can create redundancy or contradictions in produced results, harming the credibility and efficiency of a large language model. Moreover, it creates a possibility for bias amplification, leading to misleading outputs.

Long-Range Dependencies

When related concepts are spread far apart in a large context window, it can become challenging for an LLM to understand the relationships between them. This limits the LLM’s ability to perform tasks that require historical analysis or cause-and-effect reasoning.

Thus, large context windows offer advantages but also come with limitations. Finding the right balance between context size, efficiency, and the specific task at hand is crucial for optimal LLM performance.

Techniques to Address Context Window Paradox

Let’s look at some techniques that can assist you in optimizing the use of large context windows. Each one explores ways to find the optimal balance between context size and LLM performance.

Prioritization and Attention Mechanisms

Attention mechanisms can be used to focus on the most crucial and relevant information within a context window. The LLM then does not have to treat the entire flow of information equally and can concentrate on the highlighted parts of the window, which enhances its overall performance.
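
The idea can be illustrated with scaled dot-product attention, the weighting scheme used in transformer models. The sketch below is a toy version in plain Python: the two-dimensional vectors are made-up numbers, and a real model learns its queries, keys, and values and runs this over many heads and layers.

```python
import math


def softmax(scores):
    # Subtract the maximum for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Return the attention weights and the weighted sum of the values."""
    d = len(query)
    # Similarity of the query to each key, scaled by sqrt of the dimension.
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return weights, output


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]  # toy vectors, not learned
values = [[1.0], [2.0], [3.0]]
weights, output = attention(query, keys, values)
print(weights)  # the key most similar to the query gets the largest weight
```

The weights always sum to one, so attention does not discard the rest of the window; it redistributes focus toward the parts that match the query best.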

Strategic Truncation

Since not all the information within a context window is equally important or relevant, truncation can be used to strategically remove unrelated details. The core elements of the text needed for the task are preserved while unnecessary information is removed, which avoids overloading the LLM.
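
As a minimal sketch of the idea, the function below keeps the sentences that share the most words with the query until a word budget is reached, then returns them in their original order. Word overlap is a deliberately crude relevance score used here as an assumption; a production system would more likely rely on embeddings or a trained ranker. `truncate_by_relevance` is a hypothetical name.

```python
import re


def truncate_by_relevance(text, query, max_words):
    """Keep the sentences most related to the query within a word budget."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    query_terms = set(re.findall(r"\w+", query.lower()))
    scored = []
    for idx, sentence in enumerate(sentences):
        terms = set(re.findall(r"\w+", sentence.lower()))
        scored.append((len(terms & query_terms), idx, sentence))
    kept, used = set(), 0
    # Highest overlap first; ties go to the earlier sentence.
    for score, idx, sentence in sorted(scored, key=lambda t: (-t[0], t[1])):
        cost = len(sentence.split())
        if used + cost <= max_words:
            kept.add(idx)
            used += cost
    # Restore the original order so the text still reads naturally.
    return " ".join(s for i, s in enumerate(sentences) if i in kept)


text = (
    "The invoice is due on Friday. The weather was nice. "
    "Payment of the invoice can be made online."
)
print(truncate_by_relevance(text, "invoice payment", max_words=14))
# The invoice is due on Friday. Payment of the invoice can be made online.
```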

Retrieval Augmented Generation (RAG)

This technique pairs an LLM with a retrieval system that searches a vast external knowledge base for information specifically relevant to the current prompt, and places only that information in the context window. The LLM can thus draw on a wider range of information without being overwhelmed by a massive window.
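
The retrieval half of this technique can be sketched in a few lines. The example assumes a tiny in-memory list of documents and ranks them by bag-of-words cosine similarity; real systems usually use embedding models and a vector database instead. The functions and sample documents are hypothetical, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter


def vectorize(text):
    # Bag-of-words term counts; an assumption standing in for embeddings.
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    # Only the retrieved passages enter the context window.
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


docs = [
    "Refunds are processed within five business days.",
    "Our office is closed on public holidays.",
    "Shipping takes two to four days.",
]
print(build_prompt("How long do refunds take?", docs, k=1))
```

However large the knowledge base grows, the prompt stays small, because only the top `k` passages are passed to the model.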

Prompt Engineering

Prompt engineering focuses on crafting clear instructions so the LLM uses its context window efficiently. Clear and focused prompts guide the LLM toward the relevant information within the context.
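
As an illustration, a prompt can separate the instruction, the supporting context, and the question so the model knows where to look. The template below is one possible layout, not a prescribed format; the `<context>` tags and the function name are assumptions made for this sketch.

```python
def build_focused_prompt(task, context, question):
    """Assemble a prompt with a clear task, delimited context, and question."""
    return (
        f"Task: {task}\n"
        "Use only the context between the <context> tags. "
        "If the answer is not there, say you do not know.\n"
        f"<context>\n{context}\n</context>\n"
        f"Question: {question}\n"
        "Answer:"
    )


print(
    build_focused_prompt(
        task="Answer the customer's billing question in one sentence.",
        context="Invoices are issued on the first day of each month.",
        question="When are invoices issued?",
    )
)
```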

Optimizing Training Data

It is useful to organize training data into well-defined sections, summaries, and clear topic shifts, which helps the LLM learn to navigate larger contexts more effectively. Structured information is also easier for an LLM to process within the context window.

These techniques can help us address the context window paradox and leverage the benefits of larger context windows while mitigating their drawbacks.

The Future of Context Windows in LLMs

We have looked at the varying aspects of LLM context windows and the paradox involving their size. With the right approach, technique, and balance, it is possible to choose the optimal context window size for an LLM. The discussion also highlights the need to look at the potential of context windows beyond the paradox around their size.

The future is expected to shift from cramming more information into a context window toward smarter context utilization. Advancements in attention mechanisms and integration with external knowledge bases will also play a role, allowing LLMs to pinpoint truly relevant information regardless of window size.

Ultimately, the goal is for LLMs to become context masters, understanding not just the “what” but also the “why” within the information they process. This will pave the way for LLMs to tackle even more intricate tasks and generate responses that are both informative and human-like.