Every cook knows how to avoid a Type I error: just remove the batteries from the smoke alarm. Let’s also learn how to reduce the chances of Type II errors.

Why Type I and Type II errors matter

A/B testing is an essential component of large-scale online services today. It is so essential that every online business worth mentioning has been doing it for the last 10 years.

A/B testing is also used in email marketing by all major online retailers. The Obama for America data science team received a lot of press coverage for leveraging data science, especially A/B testing, during the presidential campaign.

Here is an interesting article on this topic, along with a data science bootcamp that teaches A/B testing and statistical analysis.

If you have been involved in anything related to A/B testing (online experimentation) on UI, relevance, or email marketing, chances are that you have heard of Type I and Type II errors. These terms are in common use, but a good understanding of them is not.

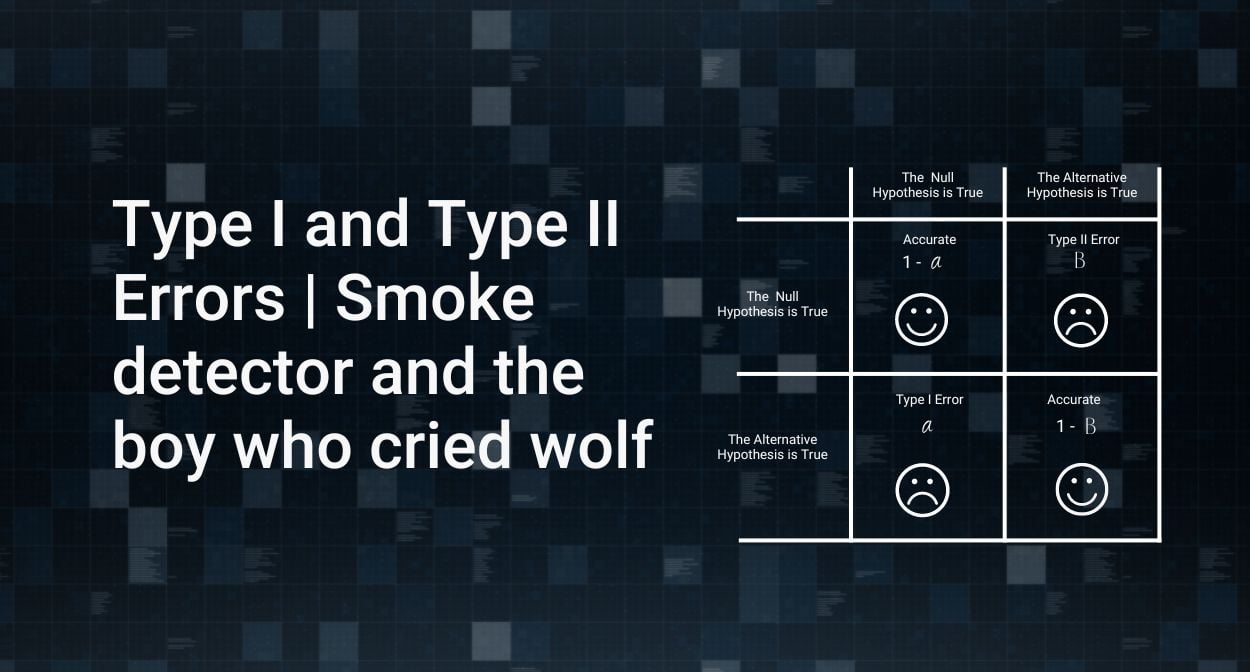

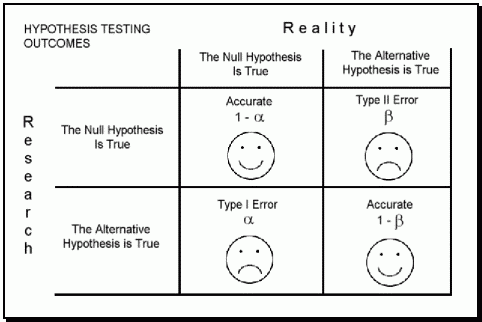

I have seen illustrations as simple as this.

Examples of Type I and Type II errors

Here are two great examples I recently read that will help you remember this important concept in hypothesis testing.

Type I error: An alarm without a fire.

Type II error: A fire without an alarm.

Every cook knows how to avoid a Type I error: just remove the batteries. Unfortunately, this increases the incidence of Type II errors.

Reducing the chances of Type II error would mean making the alarm hypersensitive, which in turn would increase the chances of Type I error.
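The same trade-off can be put into numbers. The sketch below is only an illustration under assumptions of my own, not something from the examples above: it assumes a one-sided z-test and a true effect that sits a fixed number of standard errors away from zero. The function name `type_ii_error_rate` and the parameter `effect_in_se` are made up for this sketch.

```python
from statistics import NormalDist


def type_ii_error_rate(alpha: float, effect_in_se: float) -> float:
    """Probability of missing a real effect (Type II error, beta).

    Assumes a one-sided z-test. `alpha` is the tolerated Type I error
    rate and `effect_in_se` is the true effect measured in standard
    errors. Both names are hypothetical, chosen for this illustration.
    """
    std_normal = NormalDist()  # mean 0, standard deviation 1
    # The alarm "goes off" only when the test statistic exceeds this value.
    critical_value = std_normal.inv_cdf(1 - alpha)
    # With a real effect, the statistic is centered at effect_in_se,
    # so beta is the chance that it still falls below the threshold.
    return std_normal.cdf(critical_value - effect_in_se)


# A stricter alpha (fewer false alarms) leads to a larger beta (more missed fires).
for alpha in (0.10, 0.05, 0.01, 0.001):
    beta = type_ii_error_rate(alpha, effect_in_se=2.0)
    print(f"alpha={alpha:<6} beta={beta:.3f}")
```

With the effect held fixed, each step toward a smaller `alpha` prints a larger `beta`. In this setup, the only ways to lower both at once are a larger sample or a larger true effect, both of which increase `effect_in_se`.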

Another way to remember this is by recalling the story of the Boy Who Cried Wolf.

Null hypothesis: There is no wolf.

Alternative hypothesis: There is a wolf.

Villagers believing the boy when there was no wolf (incorrectly rejecting the null hypothesis): Type I error.

Villagers not believing the boy when there was a wolf (incorrectly failing to reject the null hypothesis): Type II error.
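For readers who think in code, the four possible outcomes of the story can be written as a small lookup. This is only a mnemonic sketch; the function and argument names are invented for the illustration.

```python
def classify_outcome(wolf_present: bool, villagers_believe: bool) -> str:
    """Label one outcome of the Boy Who Cried Wolf story.

    The null hypothesis is "there is no wolf". Believing the boy
    corresponds to rejecting the null hypothesis.
    """
    if villagers_believe and not wolf_present:
        # Null hypothesis is true but was rejected.
        return "Type I error (false alarm)"
    if not villagers_believe and wolf_present:
        # Null hypothesis is false but was not rejected.
        return "Type II error (missed wolf)"
    return "correct decision"


# Walk through all four combinations of truth and belief.
for wolf in (False, True):
    for believe in (False, True):
        label = classify_outcome(wolf, believe)
        print(f"wolf={wolf!s:<5} believe={believe!s:<5} -> {label}")
```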

Tailpiece

The purpose of this post is not to explain Type I and Type II errors. If this is the first time you are hearing about these terms, here is the Wikipedia entry: Type I and Type II Error.