Generative AI is built on a handful of important machine-learning techniques. Machine learning (ML) is a critical component of data science in which algorithms are trained statistically on data.

An ML model learns iteratively to make accurate predictions and take actions. This lets computer programs perform tasks without being explicitly programmed for them. Today’s recommendation engines are among the most innovative products based on machine learning.

Exploring Important Machine-Learning Techniques

The realm of ML is defined by several learning methods, each aiming to improve the overall performance of a model. Technological advancement has resulted in highly sophisticated algorithms that require enhanced strategies for training models.

Let’s look at some of the critical and cutting-edge machine-learning techniques of today.

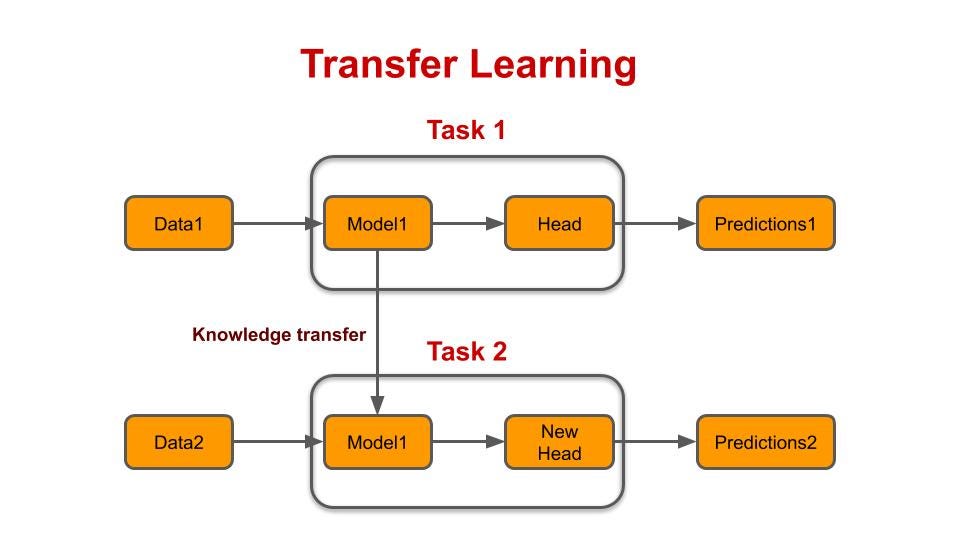

Transfer Learning

This technique trains a neural network on a base (source) task and then reuses what it has learned for a new task of interest. The base task is similar to the task of interest, so the model has already learned the major data patterns it needs.

- Why use transfer learning? It leverages knowledge gained from the first (source) task to improve the performance of the second (target) task. As a result, you can avoid training a model from scratch for related tasks. It is also a useful machine-learning technique when data for the task of interest is limited.

- Pros: Transfer learning enhances the efficiency of computational resources as the model trains on target tasks with pre-learned patterns. Moreover, it offers improved model performance and allows the reusability of features in similar tasks.

- Cons: This machine-learning technique is highly dependent on the similarity of the two tasks. Hence, it is a poor fit for extremely dissimilar tasks. If applied to such tasks, the model risks staying overfitted to the source task, which hurts its performance on the target task.
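As a rough illustration of the idea, the following Python sketch pre-trains a tiny linear model on a source task, freezes it, and trains only a new output head on three target examples. The toy data, the `train_linear` and `frozen_features` helpers, and the learning rate are all assumptions made for illustration. A real project would typically reuse a pre-trained neural network from a deep-learning framework instead.

```python
def predict(w, b, x):
    """Linear model: weighted sum of the inputs plus a bias."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def train_linear(xs, ys, lr=0.05, epochs=500):
    """Fit y ~ w.x + b with batch gradient descent on mean squared error."""
    n, n_inputs = len(xs), len(xs[0])
    w, b = [0.0] * n_inputs, 0.0
    for _ in range(epochs):
        errs = [predict(w, b, x) - y for x, y in zip(xs, ys)]
        grad_w = [sum(2 * e * x[i] for e, x in zip(errs, xs)) / n
                  for i in range(n_inputs)]
        grad_b = sum(2 * e for e in errs) / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


# Source task (hypothetical): plenty of data for y = 2*x1 + 3*x2.
source_x = [[a, c] for a in (-1, 0, 1) for c in (-1, 0, 1)]
source_y = [2 * a + 3 * c for a, c in source_x]
base_w, base_b = train_linear(source_x, source_y)


def frozen_features(x):
    """Pre-trained base model used as a fixed feature extractor."""
    return [predict(base_w, base_b, x)]


# Target task (hypothetical): only three labelled examples of
# y = 5 * (2*x1 + 3*x2) + 1, which depends on the same underlying pattern.
target_x = [[1, 0], [0, 1], [-1, -1]]
target_y = [11.0, 16.0, -24.0]

# Only the small head is trained; the base weights stay untouched.
head_w, head_b = train_linear([frozen_features(x) for x in target_x], target_y)

new_prediction = predict(head_w, head_b, frozen_features([2, 1]))
print(f"head weight={head_w[0]:.3f}, head bias={head_b:.3f}, "
      f"prediction for [2, 1]={new_prediction:.3f}")
```

Because the target task here depends on the same pattern as the source task, three examples are enough to fit the two head parameters. If the tasks were unrelated, the frozen feature would carry little useful signal, which is the limitation described in the cons.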

Learn more about Transfer Learning

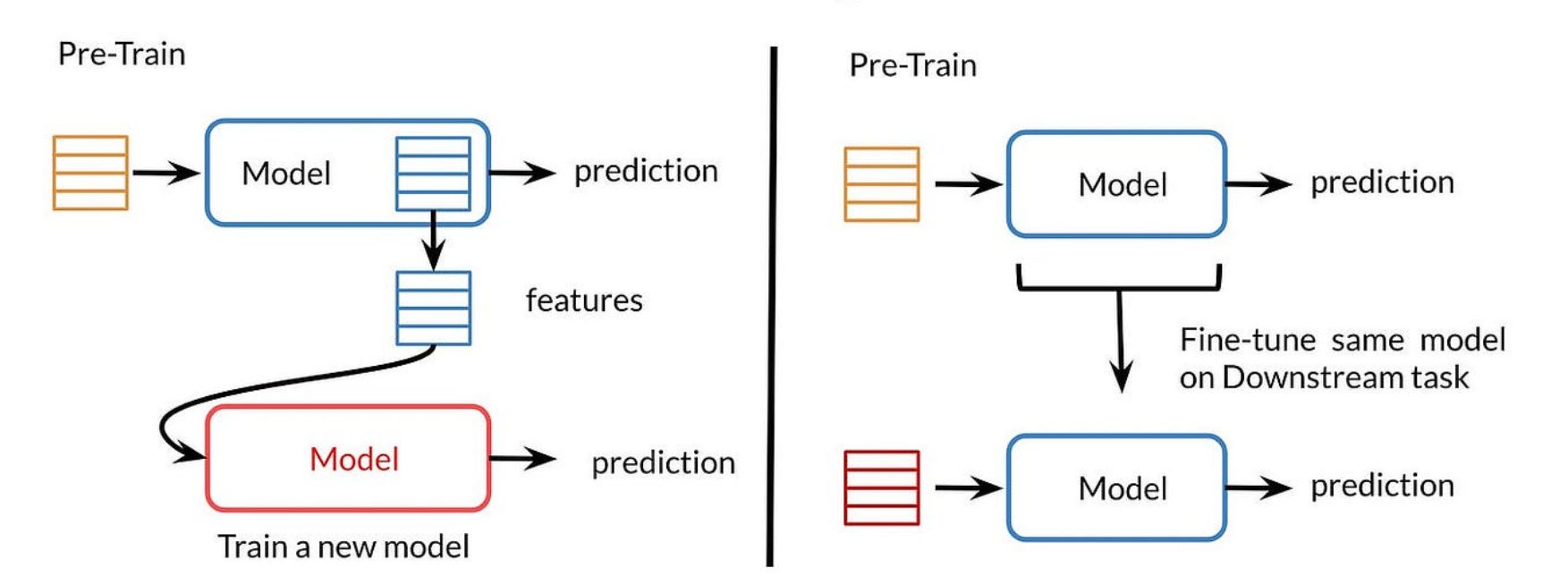

Fine-Tuning

Fine-tuning is a machine-learning technique that supports the process of transfer learning. It updates the weights of a model trained on a source task to enhance its adaptability to the new target task. Rather than replacing all the layers of a pre-trained network, it keeps the network and adjusts some or all of its weights on the target data.

- Why use fine-tuning? It is useful to enhance the adaptability of a pre-trained model on a new task. It enables the ML model to refine its parameters and learn task-specific patterns needed for improved performance on the target task.

- Pros: This machine-learning technique is computationally efficient and offers improved adaptability to an ML model when dealing with transfer learning. The utilization of pre-learned features becomes beneficial when the target task has a limited amount of data.

- Cons: Fine-tuning is sensitive to the choice of hyperparameters, and the optimal settings are rarely found on the first attempt. Finding them requires experimenting with the model training process. It also carries a risk of overfitting, and adaptation is limited when the source and target tasks are highly dissimilar.
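The effect of starting from pre-trained weights can be sketched in a few lines of Python. The example below assumes a one-feature linear model whose "pre-trained" weights (`pretrained_w`, `pretrained_b`) are made-up values standing in for a model trained on a source task. It then runs the same small number of gradient steps on a small target dataset twice, once from the pre-trained weights and once from zero. The data, the learning rate, and the step count are illustrative assumptions, not recommended settings.

```python
def mse(w, b, xs, ys):
    """Mean squared error of the model y = w*x + b."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient_steps(w, b, xs, ys, lr, steps):
    """Update both weights with a few batch gradient-descent steps."""
    n = len(xs)
    for _ in range(steps):
        errs = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = sum(2 * e * x for e, x in zip(errs, xs)) / n
        grad_b = sum(2 * e for e in errs) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical weights from a related source task (roughly y = 2x).
pretrained_w, pretrained_b = 2.0, 0.0

# Small target dataset following y = 2.3x + 0.5 (assumed for illustration).
target_x = [-1.0, 0.0, 1.0, 2.0]
target_y = [-1.8, 0.5, 2.8, 5.1]

# Same training budget in both runs; only the starting point differs.
budget = {"lr": 0.05, "steps": 20}
tuned_w, tuned_b = gradient_steps(pretrained_w, pretrained_b,
                                  target_x, target_y, **budget)
scratch_w, scratch_b = gradient_steps(0.0, 0.0, target_x, target_y, **budget)

loss_pretrained = mse(pretrained_w, pretrained_b, target_x, target_y)
loss_finetuned = mse(tuned_w, tuned_b, target_x, target_y)
loss_scratch = mse(scratch_w, scratch_b, target_x, target_y)

print(f"pre-trained, no tuning: {loss_pretrained:.4f}")
print(f"fine-tuned:             {loss_finetuned:.4f}")
print(f"trained from scratch:   {loss_scratch:.4f}")
```

In this toy setup the fine-tuned model ends with a lower target loss than both the untouched pre-trained model and the model trained from scratch with the same budget. The learning rate and step count are exactly the kind of hyperparameters that usually need experimentation.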

Another interesting read: Hyperparameter tuning for ML models

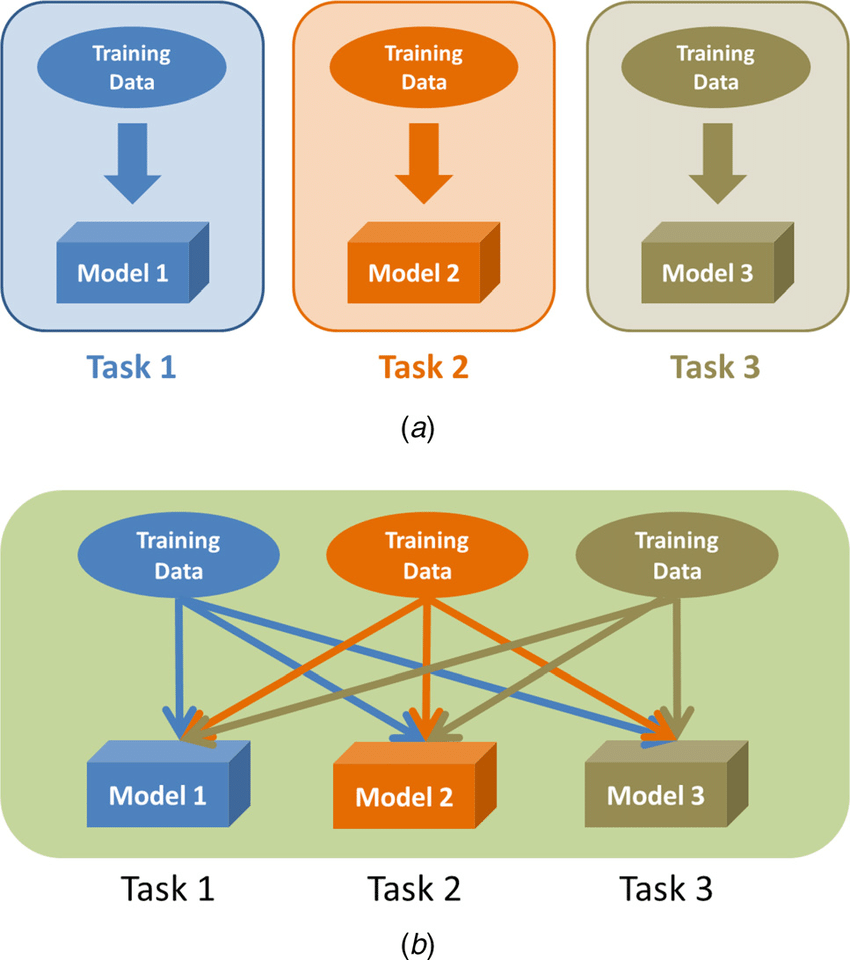

Multitask Learning

As the name indicates, the multitask machine-learning technique unlocks the power of simultaneity. Here, a model is trained to perform multiple tasks at the same time, sharing the knowledge across these tasks.

- Why use multitask learning? It is useful in sharing common representations across multiple tasks, offering improved generalization. You can use it in cases where several related ML tasks can benefit from shared representations.

- Pros: The enhanced generalization capability of models ensures the efficient use of data. Leveraging information shared across tasks improves model performance and acts as a regularizer during training. Hence, it results in more robust models.

- Cons: The increased complexity of this machine-learning technique requires a more advanced architecture and careful weighting of the different tasks. It also depends on the availability of large and diverse datasets for effective results. Moreover, dissimilar tasks can interfere with one another, degrading the model’s performance on other tasks.
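A minimal sketch of this setup, assuming two toy regression tasks that share one slope parameter but each keep their own bias "head", might look as follows. The task data, the per-task loss weights, and the `train_multitask` helper are hypothetical and chosen only to show how a shared parameter receives gradients from every task while task-specific parameters do not.

```python
# Two related toy tasks (assumed for illustration):
#   task_a follows y = 2x + 1, task_b follows y = 2x - 3.
# "weight" is the share of each task in the joint loss.
tasks = {
    "task_a": {"xs": [-1.0, 0.0, 1.0], "ys": [-1.0, 1.0, 3.0], "weight": 0.5},
    "task_b": {"xs": [-2.0, 2.0], "ys": [-7.0, 1.0], "weight": 0.5},
}


def train_multitask(tasks, lr=0.1, epochs=300):
    """Jointly minimise the weighted sum of per-task mean squared errors."""
    shared_w = 0.0                         # shared across all tasks
    heads = {name: 0.0 for name in tasks}  # one task-specific bias each
    for _ in range(epochs):
        grad_shared = 0.0
        grad_heads = {}
        for name, task in tasks.items():
            xs, ys, weight = task["xs"], task["ys"], task["weight"]
            n = len(xs)
            errs = [shared_w * x + heads[name] - y for x, y in zip(xs, ys)]
            # The shared parameter accumulates gradient from every task.
            grad_shared += weight * sum(2 * e * x for e, x in zip(errs, xs)) / n
            # Each head only sees the gradient of its own task.
            grad_heads[name] = weight * sum(2 * e for e in errs) / n
        shared_w -= lr * grad_shared
        for name in heads:
            heads[name] -= lr * grad_heads[name]
    return shared_w, heads


shared_w, heads = train_multitask(tasks)
print(f"shared slope={shared_w:.3f}, task heads={heads}")
```

Changing the `weight` values shifts how strongly each task pulls on the shared parameter, which is where the task weighting mentioned in the cons comes into play.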


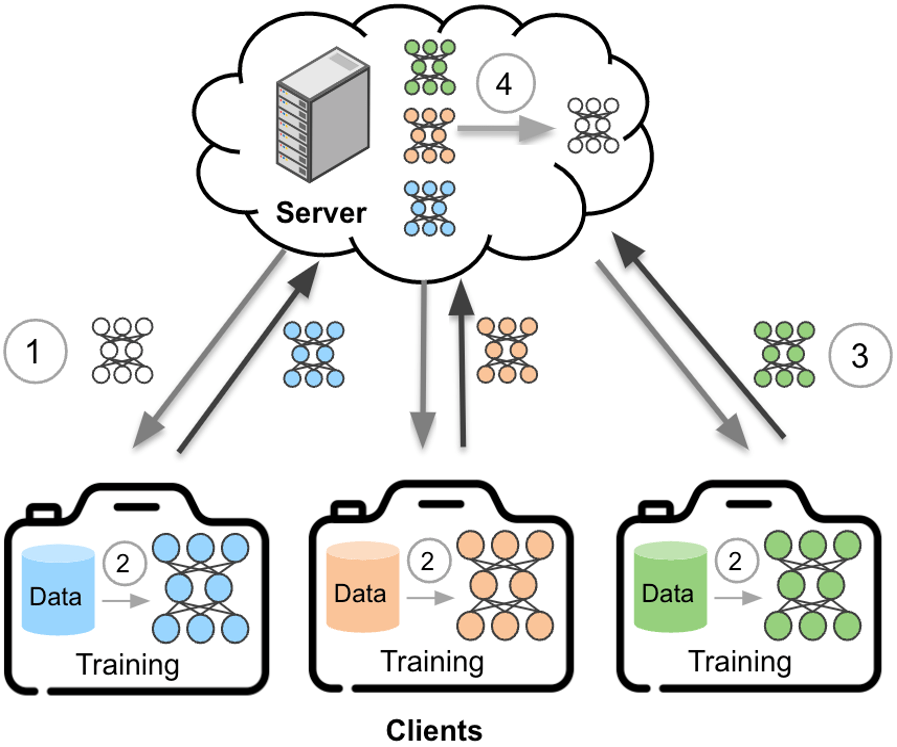

Federated Learning

Federated learning is one of the most advanced machine-learning techniques and focuses on decentralized model training. The data remains on the user-end devices, where the model is trained locally. The devices then collaborate by contributing what they have learned to a shared model.

- Why use federated learning? Federated learning is focused on locally trained models that do not require end-user devices to share their raw data. Devices share only key model parameters, so no sensitive data has to be exchanged.

- Pros: This machine-learning technique addresses the privacy concerns in ML training. The decentralized approach enables increased collaborative learning with reduced reliance on central servers for ML processes. Moreover, this method can be energy-efficient because models are trained locally.

- Cons: It is difficult to implement in resource-constrained environments because of the large communication overhead. Moreover, it requires compatibility between local data and the global model at the central server, limiting its ability to handle heterogeneous datasets.
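One common way to combine locally trained models is to average their parameters, weighted by how much data each device holds (often called federated averaging). The sketch below assumes three simulated clients that each hold a few private points from the same line, y = 3x - 1. The client data and the `local_update` and `federated_average` helpers are hypothetical. It also omits everything a real deployment needs, such as networking, client sampling, and secure aggregation.

```python
def local_update(w, b, xs, ys, lr=0.05, steps=5):
    """Run a few gradient-descent steps on one client's private data."""
    n = len(xs)
    for _ in range(steps):
        errs = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = sum(2 * e * x for e, x in zip(errs, xs)) / n
        grad_b = sum(2 * e for e in errs) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def federated_average(client_data, rounds=50):
    """Server loop: send out the global model, average what comes back."""
    w, b = 0.0, 0.0
    total = sum(len(xs) for xs, _ in client_data)
    for _ in range(rounds):
        # Each client trains locally; only (w, b) leaves the device.
        updates = [(local_update(w, b, xs, ys), len(xs))
                   for xs, ys in client_data]
        # Average the parameters, weighted by each client's sample count.
        w = sum(cw * n for (cw, _), n in updates) / total
        b = sum(cb * n for (_, cb), n in updates) / total
    return w, b


# Simulated private datasets (assumed); raw points never reach the server.
clients = [
    ([-2.0, -1.0], [-7.0, -4.0]),
    ([-1.0, 0.0, 1.0], [-4.0, -1.0, 2.0]),
    ([1.0, 2.0], [2.0, 5.0]),
]

global_w, global_b = federated_average(clients)
print(f"global model: w={global_w:.4f}, b={global_b:.4f}")
```

Each round costs one download and one upload of the model per client, which is the communication overhead described in the cons. In this sketch all clients see data from the same underlying relationship; when local data differs strongly between devices, plain averaging tends to work less well.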

Factors Determining the Best Machine-Learning Technique

While there are numerous machine-learning techniques available for model training today, it is crucial to make the right choice for your business. Below is a list of important factors that you must consider when selecting an ML method for your processes.

Context Matters!

Context refers to the type of problem or task at hand. The requirements and constraints of the model-training process are pivotal in choosing an ML technique. For instance, transfer learning and fine-tuning promote knowledge sharing, multitask learning promotes simultaneity, and federated learning supports decentralization.

Also learn about ML algorithms

Data Availability and Complexity

ML processes require large datasets to develop high-performing models. Hence, the amount and complexity of data determine the choice of method. Multitask learning and the source-task training stage of transfer learning need large amounts of data, whereas fine-tuning is suitable when the target dataset is limited. Moreover, data complexity determines how well knowledge sharing and feature interactions work.

Computational Resources

Large neural networks and complex machine-learning techniques require large computational power. The availability of hardware resources and the time required for training are important considerations when choosing the right ML method.

Data Privacy Considerations

With rapidly advancing technology, ML and AI have emerged as major tools that rely heavily on available datasets. This makes data a highly important part of the process and raises concerns about privacy and the protection of critical information. Hence, your choice of machine-learning technique must fulfill your data privacy demands.


Make an Informed Choice!

In conclusion, it is important to understand the specifics of these four important machine-learning techniques before making a choice. Each method has its own requirements and offers unique benefits. It is crucial to weigh each technique in light of the key considerations discussed above. Hence, make an informed choice for your ML training processes.