Imbalanced data is a common problem in machine learning, where one class has a significantly higher number of observations than the other. This can lead to biased models and poor performance on the minority class. In this blog, we will discuss techniques for handling imbalanced data and improving model performance.

Understanding imbalanced data

Imbalanced data refers to datasets where the distribution of class labels is not equal, with one class having a significantly higher number of observations than the other. This can be a problem for machine learning algorithms, as they can be biased towards the majority class and perform poorly on the minority class.

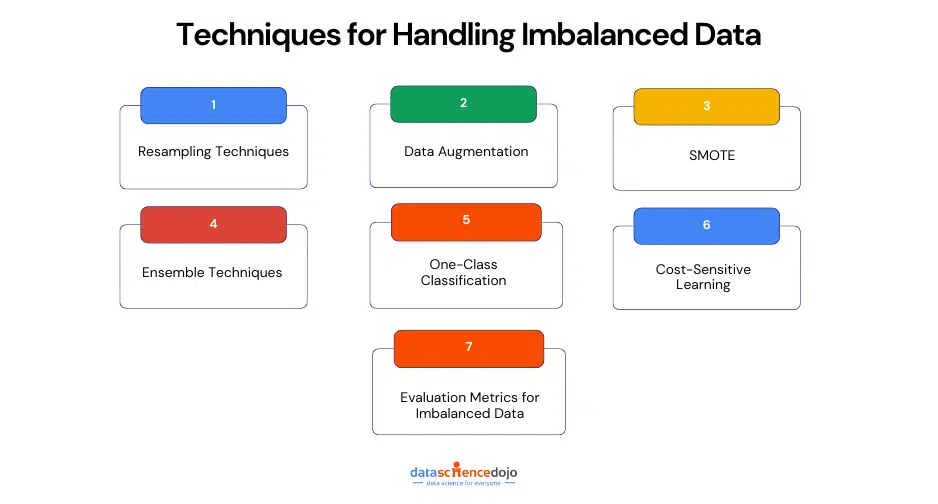

Techniques for handling imbalanced data

Dealing with imbalanced data is a common problem in data science. In classification problems, an uneven distribution of the target class tends to produce models that are biased toward the majority class, resulting in poor performance on the minority class. Various techniques can be used to handle this.

1. Resampling Techniques

Resampling techniques involve modifying the original dataset to balance the class distribution. This can be done by either oversampling the minority class or undersampling the majority class.

Oversampling techniques include random oversampling, the Synthetic Minority Over-sampling Technique (SMOTE), and Adaptive Synthetic sampling (ADASYN). Undersampling techniques include random undersampling, NearMiss, and Tomek links.
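
To make the two simplest options concrete, here is a minimal from-scratch sketch of random oversampling and random undersampling in plain Python. The function names `random_oversample` and `random_undersample` and the toy data are hypothetical, chosen for illustration; libraries such as imbalanced-learn provide production versions (`RandomOverSampler` and `RandomUnderSampler`).

```python
import random
from collections import Counter


def random_oversample(X, y, seed=0):
    """Duplicate randomly chosen rows of each smaller class until every
    class has as many rows as the largest one."""
    rng = random.Random(seed)
    counts = Counter(y)
    target = max(counts.values())
    X_out, y_out = list(X), list(y)
    for label, count in counts.items():
        rows = [x for x, lab in zip(X, y) if lab == label]
        # Sampling WITH replacement: the same row may be copied several times.
        extra = [rng.choice(rows) for _ in range(target - count)]
        X_out.extend(extra)
        y_out.extend([label] * len(extra))
    return X_out, y_out


def random_undersample(X, y, seed=0):
    """Keep a random subset of each larger class so that every class has
    as many rows as the smallest one."""
    rng = random.Random(seed)
    counts = Counter(y)
    target = min(counts.values())
    X_out, y_out = [], []
    for label in counts:
        rows = [x for x, lab in zip(X, y) if lab == label]
        # Sampling WITHOUT replacement: rows are dropped, never duplicated.
        kept = rng.sample(rows, target)
        X_out.extend(kept)
        y_out.extend([label] * target)
    return X_out, y_out


# Toy data: 10 minority rows (label 1) and 90 majority rows (label 0).
X = [[float(i)] for i in range(100)]
y = [1 if i < 10 else 0 for i in range(100)]

X_over, y_over = random_oversample(X, y)
X_under, y_under = random_undersample(X, y)
print(sorted(Counter(y_over).items()))    # [(0, 90), (1, 90)]
print(sorted(Counter(y_under).items()))   # [(0, 10), (1, 10)]
```

Random oversampling keeps every original row but repeats minority rows exactly, which is the overfitting risk that SMOTE tries to reduce; random undersampling avoids duplicates but discards most of the majority class.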

A closely related idea is bootstrap resampling, where you generate new samples by randomly selecting observations from the original dataset with replacement. Bootstrap samples are mainly used to estimate the variability of a statistic or to construct a confidence interval rather than to rebalance classes, but the same sampling-with-replacement mechanism underlies random oversampling.

For instance, if you have a dataset of 100 observations, you can draw 100 new samples of size 100 with replacement from the original dataset. Then, you can compute the mean of each new sample, resulting in 100 new mean values. By examining the distribution of these means, you can estimate the standard error of the mean or the confidence interval of the population mean.
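
The bootstrap procedure above can be sketched with the Python standard library. The data is randomly generated for illustration, `bootstrap_means` is a hypothetical helper name, and the 95% interval uses the simple percentile method.

```python
import random
import statistics


def bootstrap_means(data, n_resamples=100, seed=0):
    """Draw n_resamples samples of size len(data) WITH replacement and
    return the mean of each one."""
    rng = random.Random(seed)
    n = len(data)
    return [statistics.fmean(rng.choices(data, k=n)) for _ in range(n_resamples)]


# 100 synthetic observations standing in for the original dataset.
gen = random.Random(42)
data = [gen.gauss(50, 10) for _ in range(100)]

means = bootstrap_means(data)                # 100 bootstrap means
std_error = statistics.stdev(means)          # bootstrap estimate of the standard error
cuts = statistics.quantiles(means, n=40)     # cut points at 2.5%, 5%, ..., 97.5%
ci_95 = (cuts[0], cuts[-1])                  # percentile 95% confidence interval
print(f"mean={statistics.fmean(data):.2f}, SE={std_error:.2f}, "
      f"95% CI=({ci_95[0]:.2f}, {ci_95[1]:.2f})")
```

With only 100 resamples the interval endpoints are fairly noisy; 1,000 or more resamples usually give a more stable estimate.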

2. Data Augmentation

Data augmentation is a powerful technique for artificially increasing the size and diversity of a dataset by creating new, synthetic samples from existing ones. In the context of imbalanced data, it helps balance the representation of minority classes. Techniques vary depending on the type of data:

- For images, this might include rotating, flipping, zooming, cropping, or adjusting brightness.
- For text, you might perform synonym replacement, random word insertion, or back-translation.
- For tabular data, methods like SMOTE (Synthetic Minority Over-sampling Technique) generate new data points by interpolating between existing ones.
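
As a rough illustration of the image and text transformations listed above, the sketch below treats an image as a list of pixel rows and uses a tiny, hypothetical synonym table. It is a toy under those assumptions, not a substitute for dedicated augmentation libraries.

```python
import random


def hflip(image):
    """Mirror an image (a list of pixel rows) left to right."""
    return [row[::-1] for row in image]


def rotate90(image):
    """Rotate an image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


# Hypothetical, hand-written synonym table; a real pipeline would use a
# thesaurus or an embedding model instead.
SYNONYMS = {"quick": ["fast", "rapid"], "error": ["fault", "failure"]}


def synonym_replace(sentence, rng):
    """Swap each word that has an entry in SYNONYMS for a random synonym."""
    return " ".join(rng.choice(SYNONYMS[w]) if w in SYNONYMS else w
                    for w in sentence.split())


image = [[1, 2],
         [3, 4]]
print(hflip(image))      # [[2, 1], [4, 3]]
print(rotate90(image))   # [[3, 1], [4, 2]]
print(synonym_replace("a quick error report", random.Random(0)))
```

Each transformed copy keeps the label of the original sample, which is what lets augmentation add minority class examples without collecting new data.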

By enriching the dataset in this way, models are less likely to overfit and can generalize better, especially when learning patterns from underrepresented classes.

3. Synthetic Minority Over-Sampling Technique (SMOTE)

SMOTE is an oversampling method for datasets in which one class is significantly underrepresented. Simple random oversampling duplicates minority class instances, which can lead to overfitting; SMOTE instead creates new, synthetic examples that lie between existing minority class instances.

How SMOTE Works

- Identify nearest neighbors: For each minority class instance, SMOTE finds its k nearest neighbors among the other minority class instances (k = 5 in the original paper), using a distance metric such as Euclidean distance.
- Interpolation: One of these neighbors is chosen at random, and a new synthetic point is placed at a random position on the line segment between the original instance x and the chosen neighbor x_nn: x_new = x + λ(x_nn - x), with λ drawn uniformly from [0, 1].
- Repeat: This process is repeated until the desired balance between classes is achieved.

Example Scenario

Imagine you have only 50 positive cases (minority class) and 950 negative ones (majority class). SMOTE generates synthetic examples between neighboring positive cases to bring the number of positive instances closer to 950; fully balancing the classes would require 900 new positives, or 18 per original positive case. This helps the model learn the underlying patterns in the minority class more effectively.
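
The steps above can be sketched in plain Python for numeric features. This is a simplified illustration rather than a reference implementation: `smote` is a hypothetical function name, the minority points are randomly generated to mirror the 50-versus-950 scenario, the base sample is picked at random on each iteration instead of looping over every minority instance in turn, and neighbors are recomputed every time for clarity rather than speed.

```python
import math
import random


def smote(minority, n_synthetic, k=5, seed=0):
    """Create n_synthetic points by interpolating between minority samples
    and their k nearest minority-class neighbors (Euclidean distance)."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_synthetic):
        # Pick a base minority sample at random.
        i = rng.randrange(len(minority))
        x = minority[i]
        # Step 1: its k nearest neighbors among the OTHER minority samples.
        others = [p for j, p in enumerate(minority) if j != i]
        neighbors = sorted(others, key=lambda p: math.dist(x, p))[:k]
        # Step 2: choose one neighbor and a random point on the segment x -> nn,
        # i.e. x_new = x + lam * (nn - x) with lam uniform in [0, 1).
        nn = rng.choice(neighbors)
        lam = rng.random()
        synthetic.append([a + lam * (b - a) for a, b in zip(x, nn)])
    # Step 3: the loop repeats until n_synthetic points exist.
    return synthetic


# Mirror the scenario above: 50 positives (2 numeric features) vs 950 negatives.
gen = random.Random(1)
positives = [[gen.gauss(2.0, 0.5), gen.gauss(2.0, 0.5)] for _ in range(50)]
n_negatives = 950

new_points = smote(positives, n_synthetic=n_negatives - len(positives))
print(len(new_points), len(positives) + len(new_points))   # 900 950
```

Because every synthetic point lies on a segment between two real minority points, it stays inside the region the minority class already occupies. This is also why SMOTE can create ambiguous samples when that region overlaps the majority class.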

Benefits of SMOTE

- Reduces overfitting: Because new samples are not exact copies, the model is less likely to memorize individual minority instances than with random oversampling.
- Improves generalization: The synthetic points help the model learn a broader, more general decision region for the minority class.
- Better minority class representation: It improves the model’s ability to correctly classify minority class instances.

Limitations

- May create ambiguous samples if the minority class overlaps heavily with the majority class.
- Doesn’t consider feature correlations or outliers, which might introduce noise if not handled properly.

4. Ensemble Techniques

Ensemble techniques are powerful strategies in machine learning that combine predictions from multiple models to improve overall performance, especially when dealing with imbalanced datasets. Instead of relying on a single model, ensemble methods leverage the strengths of several models to produce more robust and accurate predictions.

Key Ensemble Methods

- Bagging (Bootstrap Aggregating): This method builds multiple models (usually of the same type, like decision trees) on different bootstrap samples of the data, that is, random samples drawn with replacement. The final prediction is made through majority voting or averaging. Random Forest is a popular bagging-based method that can perform well on imbalanced data when class weights are adjusted.
- Boosting: Boosting builds models sequentially, where each new model focuses on correcting the errors of the previous ones. Techniques like AdaBoost, Gradient Boosting, and XGBoost can be tuned to give more attention to misclassified (often minority class) instances, thereby improving classification performance on the minority class.
- Stacking: This method combines different types of models (e.g., logistic regression, decision trees, SVM) and uses a meta-model to learn how best to blend their predictions. It allows for greater flexibility and can better capture complex patterns in the data.
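
A minimal sketch of bagging adapted to imbalanced data: each base model is trained on a bootstrap sample that draws the same number of rows from every class, and predictions are combined by majority vote. The base learner is a hypothetical nearest-centroid classifier chosen only to keep the example short (in practice you would typically use decision trees), and the data is randomly generated. imbalanced-learn's `BalancedBaggingClassifier` implements a similar scheme.

```python
import math
import random
from collections import Counter


def fit_centroids(X, y):
    """Hypothetical toy base learner: store the mean vector of each class."""
    centroids = {}
    for label in set(y):
        rows = [x for x, lab in zip(X, y) if lab == label]
        centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]
    return centroids


def predict_centroids(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda label: math.dist(x, centroids[label]))


def balanced_bagging(X, y, n_models=11, seed=0):
    """Train n_models base learners, each on a bootstrap sample that draws
    minority-class-sized samples (with replacement) from every class."""
    rng = random.Random(seed)
    by_class = {}
    for x, lab in zip(X, y):
        by_class.setdefault(lab, []).append(x)
    n_per_class = min(len(rows) for rows in by_class.values())
    models = []
    for _ in range(n_models):
        Xb, yb = [], []
        for lab, rows in by_class.items():
            Xb.extend(rng.choices(rows, k=n_per_class))   # bootstrap draw
            yb.extend([lab] * n_per_class)
        models.append(fit_centroids(Xb, yb))
    return models


def predict_vote(models, x):
    """Combine the ensemble by majority vote."""
    votes = Counter(predict_centroids(m, x) for m in models)
    return votes.most_common(1)[0][0]


# Synthetic data: 190 majority points near (0, 0), 10 minority points near (3, 3).
gen = random.Random(0)
X = ([[gen.gauss(0, 1), gen.gauss(0, 1)] for _ in range(190)]
     + [[gen.gauss(3, 1), gen.gauss(3, 1)] for _ in range(10)])
y = [0] * 190 + [1] * 10

models = balanced_bagging(X, y)
print(predict_vote(models, [3.0, 3.0]), predict_vote(models, [0.0, 0.0]))   # 1 0
```

An odd number of models avoids tied votes in a two-class problem, and because every bag is balanced, no single base model sees the majority class dominate its training data.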

Why It Works for Imbalanced Data

Bagging mainly reduces variance and boosting mainly reduces bias, which makes ensembles more resilient to the pitfalls of imbalanced classes. When combined with class weighting, resampling, or cost-sensitive learning, these techniques can substantially improve the detection of minority class instances, often with only a small loss of performance on the majority class.

5. One-Class Classification

One-class classification is a specialized technique particularly useful when data from only one class (typically the majority or “normal” class) is available or reliable. The model is trained solely on this single class to learn its distribution and behavior, and then used to identify instances that deviate significantly from it—flagging them as anomalies or potential members of the minority class.

How It Works

The model essentially creates a profile of what “normal” looks like based on the training data. Any new instance that doesn’t fit this profile is considered an outlier. This is especially helpful in situations where minority class data is too rare, sensitive, or expensive to collect—for example, fraud detection or fault monitoring.
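
The profile idea can be illustrated with a deliberately simple stand-in. The sketch below is not a One-Class SVM, Isolation Forest, or autoencoder: it assumes roughly bell-shaped, non-constant features and flags any point that lies more than three standard deviations from the normal-class mean on any feature. The helper names, the synthetic "normal" data, and the threshold of 3 are illustrative assumptions.

```python
import statistics


def fit_profile(normal_rows):
    """Learn a per-feature (mean, standard deviation) from normal data only."""
    columns = list(zip(*normal_rows))
    return [(statistics.fmean(c), statistics.stdev(c)) for c in columns]


def is_anomaly(profile, x, threshold=3.0):
    """Flag x if any feature lies more than `threshold` standard deviations
    from the normal-class mean (assumes every standard deviation is > 0)."""
    return any(abs(v - mu) / sd > threshold for v, (mu, sd) in zip(x, profile))


# Synthetic "normal" training data: no minority examples are needed.
normal = [[10.0 + 0.1 * (i % 7), 5.0 - 0.2 * (i % 5)] for i in range(200)]
profile = fit_profile(normal)

print(is_anomaly(profile, [10.3, 4.6]))   # False: fits the learned profile
print(is_anomaly(profile, [25.0, 4.6]))   # True: far outside the profile
```

If the normal class has high variability, its standard deviations grow and the profile becomes either too loose or, with a lower threshold, prone to false positives, which mirrors the limitation noted below.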

Common Algorithms

- One-Class SVM (Support Vector Machine): Separates the training data from the origin in feature space with maximum margin, classifying anything outside the learned region as an anomaly.
- Isolation Forest: Isolates observations by recursively splitting the data on randomly chosen features and split values; anomalies are easier to isolate and thus have shorter average path lengths.
- Autoencoders (for deep learning): Neural networks that learn to reconstruct input data; when trained on normal data only, poor reconstruction (a high reconstruction error) indicates an anomaly.

Benefits

- Doesn’t require balanced datasets.
- Effective for anomaly and rare event detection.
- Minimizes risk of overfitting to minority data that may be noisy or inconsistent.

Limitations

- Less effective when the minority class has varied characteristics or when both classes are available in sufficient quality and quantity.
- May produce false positives if the “normal” class has high variability.

6. Cost-Sensitive Learning

Cost-sensitive learning is an effective technique that directly addresses class imbalance by incorporating the cost of misclassification into the learning process. Instead of treating all errors equally, it penalizes mistakes on the minority class more heavily, encouraging the model to give greater attention to those instances.

How It Works

In imbalanced datasets, models tend to favor the majority class because minimizing overall error often means simply predicting the dominant class. Cost-sensitive learning changes this by assigning a higher misclassification cost to the minority class. For example, in a medical diagnosis scenario, missing a rare disease case (false negative) is far more serious than a false positive, so the cost of misclassification is adjusted accordingly.

Implementation Techniques

- Weighted loss functions: Modify the loss function (e.g., cross-entropy) to include class weights, so the model is penalized more for misclassifying minority class instances.
- Class weights in algorithms: Many machine learning libraries (like scikit-learn, XGBoost, LightGBM) offer built-in parameters to assign different weights to classes.
- Custom cost matrices: In some cases, you can define a full cost matrix to dictate specific penalties for each type of misclassification.
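
A minimal sketch of the three implementation techniques above, in plain Python. The weighting formula n_samples / (n_classes * count_c) matches the "balanced" class-weight heuristic used by scikit-learn; the function names, the 95/5 label split, and the 50-to-1 cost matrix are hypothetical values for illustration, not recommendations.

```python
import math
from collections import Counter


def balanced_class_weights(y):
    """weight_c = n_samples / (n_classes * count_c): rarer classes weigh more."""
    counts = Counter(y)
    n, k = len(y), len(counts)
    return {label: n / (k * c) for label, c in counts.items()}


def weighted_log_loss(y_true, p_pred, weights):
    """Binary cross-entropy averaged with each sample weighted by its class weight."""
    eps = 1e-12
    total = 0.0
    for t, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)          # keep log() finite
        loss = -(t * math.log(p) + (1 - t) * math.log(1 - p))
        total += weights[t] * loss
    return total / sum(weights[t] for t in y_true)


def min_cost_prediction(p_positive, cost):
    """Return the label with the lowest expected cost, where
    cost[true_label][predicted_label] comes from a cost matrix."""
    expected = {
        pred: (1 - p_positive) * cost[0][pred] + p_positive * cost[1][pred]
        for pred in (0, 1)
    }
    return min(expected, key=expected.get)


y = [0] * 95 + [1] * 5                   # 5% positives
weights = balanced_class_weights(y)
print(weights[1])                         # 10.0 (the minority class)

cost = [[0, 1],    # true negative: predicting 1 costs 1 (false positive)
        [50, 0]]   # true positive: predicting 0 costs 50 (false negative)
print(min_cost_prediction(0.05, cost))   # 1
print(min_cost_prediction(0.01, cost))   # 0
```

With a false negative costing 50 times as much as a false positive, the decision threshold drops to 1/51 (about 0.02), so even a 5% predicted probability is classified as positive.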

Benefits

- No need to alter the original data distribution.
- Integrates seamlessly into most machine learning algorithms.
- Helps in real-world cases where consequences of different types of errors vary (e.g., fraud detection, medical diagnostics).

Limitations

- Requires domain knowledge to assign appropriate costs.
- May lead to instability if the cost ratios are set too aggressively.

7. Evaluation Metrics for Imbalanced Data

When working with imbalanced data, traditional evaluation metrics like accuracy can be misleading. A model might achieve high accuracy by simply predicting the majority class, while completely ignoring the minority class. Therefore, it’s crucial to use metrics that truly reflect performance on imbalanced datasets.

Key Metrics to Use

- Precision: The fraction of predicted positive instances that are actually positive, TP / (TP + FP), where TP and FP are true and false positives. High precision is important when false positives are costly.
- Recall (Sensitivity): The fraction of actual positive instances that the model correctly identifies, TP / (TP + FN), where FN is the number of false negatives. This is especially critical when detecting rare events in imbalanced data.
- F1 Score: The harmonic mean of precision and recall, 2 × precision × recall / (precision + recall). It provides a single balanced measure, which is especially useful when dealing with imbalanced classes.
- AUC-ROC (Area Under the Receiver Operating Characteristic Curve): Evaluates the model’s ability to rank positive instances above negative ones across all classification thresholds. A higher AUC-ROC indicates better separation, but because the false positive rate is computed over the large majority class, AUC-ROC can look optimistic on heavily imbalanced data.
- AUC-PR (Area Under the Precision-Recall Curve): More informative than AUC-ROC when dealing with severely imbalanced datasets, as it focuses on performance on the minority class.
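
To see why accuracy misleads, the sketch below computes the metrics above from scratch for a hypothetical dataset with 5 positives out of 100. The helper names and predictions are illustrative; scikit-learn's `sklearn.metrics` module provides equivalent functions such as `precision_score`, `recall_score`, `f1_score`, and `roc_auc_score`.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FP, FN, TN) for binary labels where 1 is the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / (tp + fp + fn + tn)


def precision_recall_f1(y_true, y_pred):
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0   # 0.0 if nothing predicted positive
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def roc_auc(y_true, scores):
    """AUC-ROC as the probability that a random positive is scored above a
    random negative (ties count as half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return wins / (len(pos) * len(neg))


y_true = [0] * 95 + [1] * 5                            # 5% positives
always_negative = [0] * 100
model_pred = [1] * 6 + [0] * 89 + [1, 1, 1, 1, 0]      # 6 false alarms, 4 of 5 positives found

print(accuracy(y_true, always_negative))               # 0.95
print(precision_recall_f1(y_true, always_negative))    # (0.0, 0.0, 0.0)
print(accuracy(y_true, model_pred))                    # 0.93
p, r, f1 = precision_recall_f1(y_true, model_pred)
print(round(p, 3), round(r, 3), round(f1, 3))          # 0.4 0.8 0.533

scores = [0.1] * 90 + [0.6] * 5 + [0.7, 0.8, 0.9, 0.95, 0.2]
print(round(roc_auc(y_true, scores), 3))               # 0.989
```

The always-negative model has higher accuracy (0.95) than the model that actually finds 4 of 5 positives (0.93), while precision, recall, and F1 rank them the other way around.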

Why These Metrics Matter

In imbalanced data scenarios, focusing only on overall accuracy can hide poor performance on the minority class. These metrics ensure that the model is evaluated fairly, especially in cases where identifying rare but critical instances (like fraud, disease, or defects) is the main goal.

Choosing the Best Technique for Handling Imbalanced Data

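The techniques discussed above fall into a few broad groups: resampling (undersampling and oversampling, including SMOTE and data augmentation), algorithm-level methods (ensembles, one-class classification, and cost-sensitive learning), and evaluation with imbalance-aware metrics. Feature selection can complement these approaches.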

Undersampling involves reducing the size of the majority class to match that of the minority class, while oversampling involves creating new instances of the minority class to balance the data. Feature selection is the process of selecting only the most relevant features to reduce the noise in the data.

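In conclusion, combining undersampling and oversampling is a common starting point for balancing the data, and oversampling methods such as SMOTE are often effective because they keep all of the original observations. However, no single technique is best for every problem: the choice ultimately depends on the specific characteristics of the dataset and the problem at hand, so candidates should be compared using imbalance-aware metrics such as recall, F1, and AUC-PR.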