Neural networks are computational models that learn to perform tasks from examples, generally without being programmed with task-specific rules. Inspired by the structure of the human brain, they serve as powerful tools for pattern recognition, classification, and prediction tasks.

There are several types of neural networks with vast and transformative applications spread across various sectors. These include image recognition, natural language processing, autonomous vehicles, financial services, healthcare, recommender systems, gaming and entertainment, and speech recognition.

Neural networks play a critical role in modern AI and machine learning due to their ability to model complex patterns and relationships within data. They can learn and improve as they are exposed to more data. As a result, they can solve a wide array of complex, high-dimensional problems that traditional algorithms struggle with.

In this blog, we will discuss the major types of neural networks that are put to practical use across industries. Before we dig deeper into each kind of neural network, let’s look at their two major categories.

Shallow and Deep: Two Categories of Neural Networks

Neural networks are broadly divided into two categories: deep neural networks and shallow neural networks. The distinction is based on the number of hidden layers in their architecture. As the names suggest, deep neural networks have more hidden layers than their shallow counterparts.

Shallow Neural Networks

These refer to a class of artificial neural networks that typically consist of an input layer and an output layer, with at most one hidden layer in between. Their simpler structure makes them easier to understand and implement, and they are typically used for less complex tasks.

Their simpler architecture also requires less computational power and fewer resources, making them quicker to train. However, the same simplicity limits them, as they struggle with complex modeling problems. Moreover, they still carry a risk of overfitting.
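
The difference in size can be made concrete by counting trainable parameters. The sketch below does this for fully connected networks. The function name `count_parameters` and the layer sizes are assumptions chosen for illustration, not figures from any particular model.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of units per layer, from input to output,
    e.g. [4, 8, 1] means 4 inputs -> 8 hidden units -> 1 output.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # one weight per connection
        total += n_out         # one bias per unit in the receiving layer
    return total


# Hypothetical layer sizes, chosen only for illustration.
shallow = [4, 8, 1]        # one hidden layer
deep = [4, 8, 8, 8, 1]     # three hidden layers

print(count_parameters(shallow))  # 49
print(count_parameters(deep))     # 193
```

Even in this tiny case, adding two hidden layers roughly quadruples the number of values that training has to adjust.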

Despite these limitations, shallow neural networks are a useful resource. Some of their practical applications in the world of data analysis include:

- Linear Regression: Shallow neural networks are often used in linear regression problems where the relationship between the input and output variables is relatively simple

- Classification Tasks: They are effective for simple classification tasks where the input data can be linearly separable or nearly so
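
As a rough illustration of the linear regression case, a network with no hidden layer and a single linear output unit reduces to fitting a line. The sketch below trains such a unit with gradient descent on mean squared error. The function name, learning rate, epoch count, and toy data are all assumptions made for the example.

```python
def fit_linear_neuron(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w * x + b with full-batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2.0 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (illustrative only).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

w, b = fit_linear_neuron(xs, ys)
print(round(w, 3), round(b, 3))  # 2.0 1.0
```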

Hence, shallow neural networks are crucial for AI and ML. Despite their simplicity, they lay the groundwork for understanding more intricate neural network architectures and remain a valuable tool for specific applications where simplicity and efficiency are paramount.

Deep Neural Networks

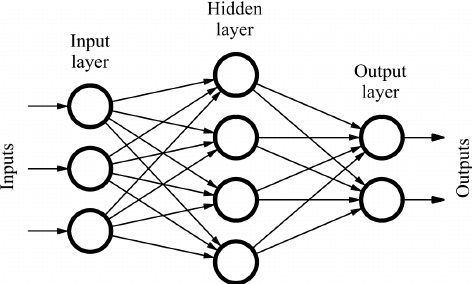

This category of neural networks is defined by multiple hidden layers of neurons between the input and output layers. This depth of architecture enables them to model complex patterns and relationships within large sets of data, making them highly effective for a wide range of artificial intelligence tasks.

When trained on sufficient amounts of data, they offer enhanced accuracy. Moreover, they can automatically detect and use relevant features from raw data, reducing the need for manual feature engineering. However, they also carry a risk of overfitting and require extensive computational resources.

Owing to these benefits and limitations, deep neural networks have made their mark in the industry for the following uses:

- Image Recognition: They are extensively used in image recognition tasks, enabling applications such as facial recognition and medical image analysis

- Speech Recognition: They are pivotal in developing systems that convert spoken language into text, used in virtual assistants and automated transcription services

- Natural Language Processing (NLP): They help in understanding and generating human language, applicable in translation services, sentiment analysis, and chatbots

Hence, deep neural networks are crucial in pushing the boundaries of what machines can learn and achieve, handling tasks that require understanding complex patterns, and making intelligent decisions based on large volumes of data.

Now that we have explored the two major categories of neural networks, let’s dig deeper into five main types of architectures that fall under them.

Perceptron

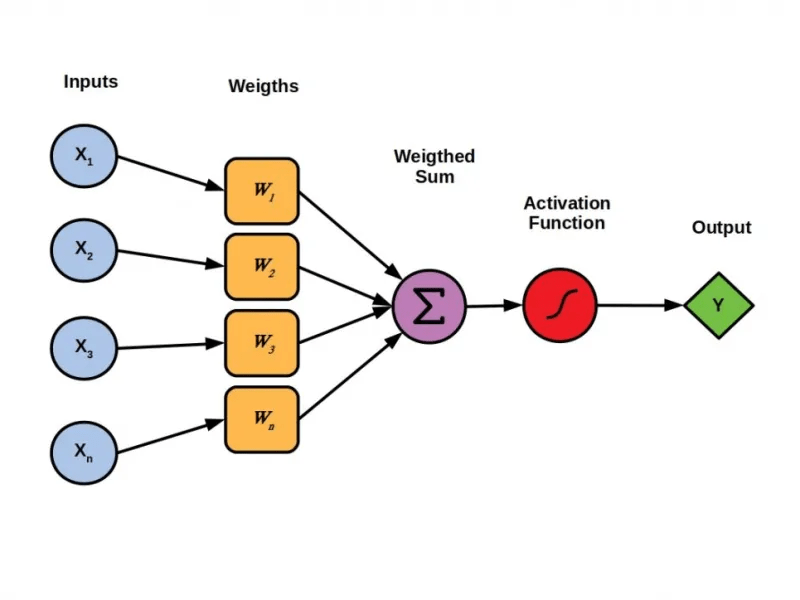

The perceptron is the simplest neural network model: a single layer of weights connects the inputs directly to the output, with no hidden layer in between. Hence, it is also considered a fundamental building block for neural networks. It performs a simple computation to detect features in the input data.

Perceptrons take multiple numerical inputs and assign a weight to each to determine their influence on the output. The weighted inputs are then summed together and the value is passed through an activation function to determine the output.

They are also known as threshold logic units (TLUs). Trained with a supervised learning algorithm, a perceptron classifies data into two categories, making it a binary classifier. It separates the input space into two regions with a hyperplane determined by its weights and bias.
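
The weighted sum, threshold activation, and supervised weight updates described above can be sketched in a few lines. This is a minimal illustration using the classic perceptron learning rule on the boolean AND problem. The function names, learning rate, and epoch count are assumptions for the example.

```python
def predict(weights, bias, inputs):
    """Weighted sum of the inputs followed by a step (threshold) activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z > 0 else 0


def train_perceptron(samples, lr=1.0, epochs=20):
    """Perceptron learning rule: nudge weights whenever a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Boolean AND: linearly separable, so the rule converges.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_DATA)
predictions = [predict(weights, bias, x) for x, _ in AND_DATA]
print(predictions)  # [0, 0, 0, 1]
```

The learned weights and bias define the separating hyperplane: inputs on one side are labelled 1, the rest 0.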

Real-world Applications of Perceptrons

Their simplicity makes perceptrons an excellent starting point for learning the basic principles of neural networks, but they also have several practical uses. Some of these include:

- Simple Image Recognition: Distinguishing between two handwritten digits (for example, 0 versus 1), where the data is often close to linearly separable.

- Spam Filtering: Classifying emails as spam or not spam based on a few key features (e.g., presence of certain keywords).

Limitations

- Linear Problems Only: Perceptrons can only learn linearly separable problems, such as the boolean AND problem. They struggle with non-linear problems, exemplified by their inability to solve the boolean XOR problem. This limitation restricts their applicability to more complex decision boundaries.

- Single Layer Limitation: Being a single-layer model, perceptrons are limited in their ability to process complex patterns or multiple layers of decision-making, a gap filled by more advanced neural network models like Multilayer Perceptrons (MLPs) and Convolutional Neural Networks (CNNs).
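
The XOR limitation can be checked directly. The sketch below searches a coarse grid of weights and biases for a single threshold unit that reproduces XOR and finds none. It then shows a hand-wired two-layer network that does solve it. The grid range and the hand-picked weights are assumptions for the example. The grid search only illustrates the point; the general impossibility follows from XOR not being linearly separable.

```python
from itertools import product

XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def unit(weights, bias, inputs):
    """One threshold unit: outputs 1 when the weighted sum exceeds zero."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if z > 0 else 0


def solves_xor(w1, w2, b):
    return all(unit((w1, w2), b, x) == t for x, t in XOR_DATA)


# Try every single unit on a grid of values from -4.0 to 4.0 in steps of 0.5.
grid = [i / 2 for i in range(-8, 9)]
single_unit_found = any(
    solves_xor(w1, w2, b) for w1, w2, b in product(grid, repeat=3)
)


def two_layer_xor(x):
    """Hand-wired hidden layer (OR and NAND units) feeding an AND unit."""
    h_or = unit((1, 1), -0.5, x)      # fires if at least one input is 1
    h_nand = unit((-1, -1), 1.5, x)   # fires unless both inputs are 1
    return unit((1, 1), -1.5, (h_or, h_nand))


print(single_unit_found)                         # False
print([two_layer_xor(x) for x, _ in XOR_DATA])   # [0, 1, 1, 0]
```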

Thus, perceptrons are a significant concept in neural networks and a valuable learning tool for understanding the core principles of neural networks. However, for real-world applications requiring complex pattern recognition or handling non-linear data, more advanced neural network architectures are necessary.

Feed Forward Neural Networks (FNNs)

Feedforward neural networks, also known as feedforward networks, are networks in which the connections between nodes do not form a cycle. Information moves in only one direction, from input to output, through one or more layers of nodes. With a single hidden layer they are shallow networks, while versions with more hidden layers count as deep.

The basic learning process of feedforward networks remains the same as that of a perceptron, involving the forward pass of input data through the network to generate output with each layer transforming the data based on its weights and activation function.

During training, the network adjusts its weights to minimize the error between the predicted output and the actual target value.
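
The forward pass and weight adjustment described above can be sketched with a tiny network: two inputs, one tanh hidden layer, and a sigmoid output, trained by backpropagation with stochastic gradient descent. Everything specific here (layer sizes, learning rate, epoch count, random seed, and the XOR toy data) is an assumption chosen for illustration.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, params):
    """Forward pass: inputs -> tanh hidden layer -> sigmoid output."""
    W1, b1, W2, b2 = params
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(W2, hidden)) + b2)
    return hidden, output


def mean_squared_error(data, params):
    return sum((forward(x, params)[1] - t) ** 2 for x, t in data) / len(data)


def train(data, n_hidden=3, lr=0.5, epochs=2000, seed=0):
    rng = random.Random(seed)
    n_in = len(data[0][0])
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    b1 = [0.0] * n_hidden
    W2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    b2 = 0.0
    initial_loss = mean_squared_error(data, (W1, b1, W2, b2))
    for _ in range(epochs):
        for x, t in data:
            hidden, y = forward(x, (W1, b1, W2, b2))
            # Backward pass via the chain rule for the loss 0.5 * (y - t)^2.
            delta_out = (y - t) * y * (1.0 - y)
            for j in range(n_hidden):
                # Hidden-unit error uses W2[j] before it is updated.
                delta_h = delta_out * W2[j] * (1.0 - hidden[j] ** 2)
                W2[j] -= lr * delta_out * hidden[j]
                b1[j] -= lr * delta_h
                for i in range(n_in):
                    W1[j][i] -= lr * delta_h * x[i]
            b2 -= lr * delta_out
    params = (W1, b1, W2, b2)
    return params, initial_loss, mean_squared_error(data, params)


XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
params, initial_loss, final_loss = train(XOR_DATA)
print("loss before training:", initial_loss)
print("loss after training: ", final_loss)
```

Each update moves every weight a small step in the direction that reduces the error on the current example, which is the "adjusts its weights to minimize the error" step in code form.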

Real-world Applications of FNNs

- Facial Recognition: Feedforward neural networks are the foundation for facial recognition technologies, where they process large volumes of ‘noisy’ data to create ‘clean’ outputs for identifying faces.

- Natural Language Processing (NLP): They are used in tasks such as speech recognition and text classification, enabling computers to understand and interpret human language.

- Computer Vision: In the field of computer vision, feedforward networks are employed for image classification and object detection, aiding in the automation of visual understanding tasks.

Limitations

- Lack of Feedback Connections: One of the primary limitations of feedforward neural networks is the absence of feedback connections, which means they are not suitable for tasks where previous outputs are needed to influence future outcomes, such as in sequence prediction problems.

- Difficulty in Capturing Temporal Sequence Information: Due to their feedforward nature, these networks struggle with problems that involve time series data or any data where the sequence order is important.

- Risk of Overfitting: With a large number of parameters (weights and biases) in complex FNNs, there’s a chance of overfitting the training data, leading to poor performance on unseen data.

Hence, FNNs represent a crucial development in the field of artificial intelligence and machine learning, providing the groundwork for more complex neural network models. Despite their limitations, they have found widespread application across various domains, demonstrating their versatility and effectiveness in solving real-world problems.

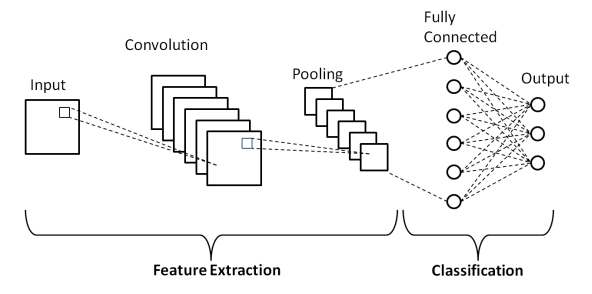

Convolutional Neural Networks (CNNs)

They are a specialized type of deep neural network used for processing data that has a grid-like topology, such as images. Hence, they excel at image recognition and analysis tasks. CNNs utilize a mathematical operation known as convolution, a specialized kind of linear operation.

They can recognize patterns with extreme variability (such as handwritten characters), and thanks to their deep architecture, they can recognize patterns at different levels of abstraction (such as edges or shapes in the lower layers and more complex objects in the higher layers).
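
To show what the convolution operation does to grid-like data, the sketch below slides a small kernel over a toy image and picks out a vertical edge. The image, the kernel values, and the function name `conv2d_valid` are assumptions for the example. In a trained CNN the kernel values are learned rather than hand-picked.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image with no padding and stride 1.

    As in most deep learning libraries, the kernel is not flipped, so this is
    technically cross-correlation.
    """
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(k_rows) for j in range(k_cols))
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


# Toy 4x4 image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d_valid(image, kernel)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is non-zero only in the column where the edge sits, which is the kind of low-level pattern the lower layers of a CNN tend to pick up.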

Real-world Applications of CNNs

- Automated Driving: CNNs are used in self-driving cars for detecting objects such as stop signs and pedestrians to make driving decisions.

- Facial Recognition: They are employed in security systems for facial recognition purposes, helping in identifying persons in surveillance videos.

- Agriculture: In agriculture, CNNs help in crop and soil monitoring, disease detection, and automated harvesting systems.

- Medical Image Analysis: They analyze medical scans for disease detection, tumor classification, and drug discovery.

Limitations

- High Complexity: CNNs are quite complex to design and maintain. They require considerable expertise and knowledge to set up and tune properly.

- Expensive Computation: They are computationally intensive, requiring significant resources, which can be a barrier in terms of hardware requirements for training on large datasets.

- Overfitting Risk: Due to their deep and complex architecture, there’s a high risk of overfitting, especially when not enough training data is available.

- Limited to Grid-like Data: CNNs are primarily designed for analyzing grid-like data like images. They might not be the best choice for tasks involving sequential data or non-grid-structured inputs.

However, despite their limitations, CNNs have revolutionized the field of machine vision by their ability to automatically learn the optimal features and structures from the training data, leading to numerous applications across various industries.

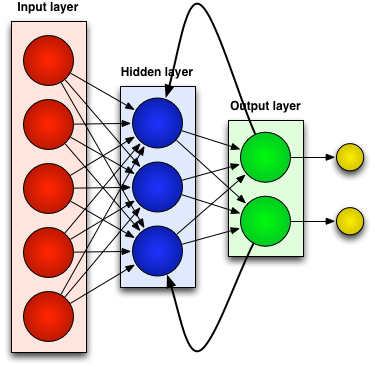

Recurrent Neural Networks (RNNs)

RNNs belong to the category of deep neural networks. They excel in processing sequential data for tasks such as speech recognition, natural language processing, and time series prediction.

They are known for their “memory” which allows them to retain information from previous inputs to influence the current output, making them ideal for applications where data points are interdependent.

They are built on the foundation of FNNs with hidden layers. The hidden layer output loops back into itself, creating a memory of past inputs that informs how the network processes new information. This internal state allows RNNs to handle sequential data like text or speech, where understanding the order is crucial.
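
The loop from the hidden layer back into itself can be sketched with a single recurrent unit. In this minimal example, the hidden state `h` is the network's memory: each new state depends on the current input and on the previous state. The scalar weights `w_x`, `w_h`, and `b` are hypothetical values chosen for illustration.

```python
import math


def rnn_forward(inputs, w_x=1.0, w_h=0.5, b=0.0):
    """Run a one-unit RNN over a sequence and return every hidden state."""
    h = 0.0  # initial memory is empty
    states = []
    for x in inputs:
        # The new state mixes the current input with the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


# The same values in a different order lead to different final states.
print(rnn_forward([1.0, 0.0]))
print(rnn_forward([0.0, 1.0]))
```

Because the state carries over from step to step, the order of the inputs changes the result, which a plain feedforward pass over each input in isolation would not capture.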

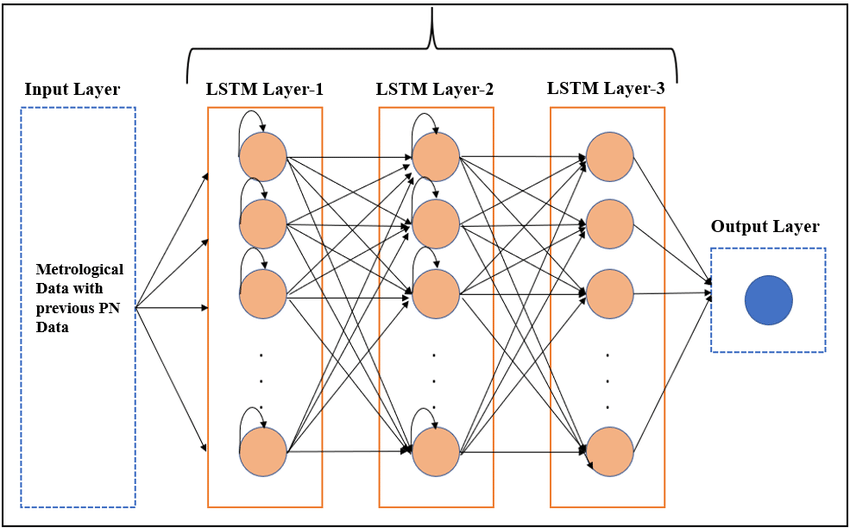

Long Short-Term Memory (LSTM) Networks – A Specific Type of RNN

LSTMs are a special kind of RNN that can learn and remember over long sequences. Unlike traditional RNNs, LSTMs are designed to avoid the long-term dependency problem, allowing them to remember information for longer periods of time.

This is achieved through special units called LSTM units that include components like input, output, and forget gates. These gates control the flow of information into and out of the cell, deciding what to keep in memory and what to discard, thus enabling the network to make more precise decisions based on historical data.
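
The role of the gates can be sketched with a single scalar LSTM cell that follows the standard formulation: a forget gate scales the old cell state, an input gate scales the new candidate value, and an output gate scales what is exposed as the hidden state. The parameter layout (a dictionary of `(w_x, w_h, bias)` tuples) and all numeric values are assumptions for the example.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell. p maps each gate to (w_x, w_h, bias)."""
    def pre_activation(name):
        w_x, w_h, bias = p[name]
        return w_x * x + w_h * h_prev + bias

    f = sigmoid(pre_activation("forget"))        # how much old memory to keep
    i = sigmoid(pre_activation("input"))         # how much new content to write
    o = sigmoid(pre_activation("output"))        # how much memory to expose
    g = math.tanh(pre_activation("candidate"))   # proposed new content
    c = f * c_prev + i * g                       # updated cell state
    h = o * math.tanh(c)                         # updated hidden state
    return h, c


# Hypothetical parameters: biases push the gates almost fully open or closed.
keep = {
    "forget": (0.0, 0.0, 10.0),     # forget gate close to 1: keep memory
    "input": (0.0, 0.0, -10.0),     # input gate close to 0: write nothing
    "output": (0.0, 0.0, 0.0),
    "candidate": (0.0, 0.0, 0.0),
}
drop = dict(keep, forget=(0.0, 0.0, -10.0))  # forget gate close to 0

_, c_keep = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, p=keep)
_, c_drop = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, p=drop)
print(round(c_keep, 3), round(c_drop, 3))  # 2.0 0.0
```

With the forget gate open, the cell state passes through almost unchanged. With it closed, the memory is erased in one step.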

Real-world Applications of RNNs

- Speech Recognition: RNNs are employed in speech recognition systems, enabling devices to understand and respond to spoken commands.

- Text Generation: They are used in applications that generate human-like text, enabling features like chatbots or virtual assistants to provide more engaging user interactions.

- Music Composition: RNNs can also be used to compose music by learning patterns in musical notations and predicting subsequent notes, thus generating new pieces of music.

- Time Series Forecasting: They can predict future stock prices, weather patterns, or sales trends.

Limitations

- Vanishing Gradient Problem: During training, RNNs can suffer from the vanishing gradient problem, where gradients shrink as they are propagated back through each timestep of the input sequence. This makes it difficult for RNNs to learn long-range dependencies within the input data.

- High Computational Burden: Training RNNs is computationally intensive, particularly for long sequences, which can make them less scalable compared to other neural network architectures.

- Difficulty in Parallelization: The sequential nature of RNNs means that they cannot be easily parallelized, which limits the speed of computation and training compared to networks like CNNs that can process inputs in parallel.
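
The vanishing gradient problem listed above can be seen numerically in a one-unit tanh RNN. The sketch below tracks how strongly the hidden state at step t depends on the initial state, which is a product of one factor per timestep. The weights, the constant input, and the number of steps are assumptions chosen for illustration.

```python
import math


def gradient_magnitudes(w_h=0.5, w_x=1.0, steps=20, x=1.0):
    """Return |d h_t / d h_0| for t = 1..steps in a scalar tanh RNN."""
    h = 0.0
    grad = 1.0
    magnitudes = []
    for _ in range(steps):
        h = math.tanh(w_x * x + w_h * h)
        # Chain rule: d h_t / d h_(t-1) = w_h * (1 - h_t^2).
        grad *= w_h * (1.0 - h * h)
        magnitudes.append(abs(grad))
    return magnitudes


magnitudes = gradient_magnitudes()
for t in (1, 5, 10, 20):
    print(t, magnitudes[t - 1])
```

Each factor here is smaller than 1, so the product shrinks geometrically with the number of timesteps, leaving almost no learning signal for inputs far in the past.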

In summary, RNNs are powerful in handling sequential data with dependencies over time, making them invaluable in diverse fields such as language processing, financial forecasting, and creative applications like music generation. Despite their challenges, they are uniquely suited for tasks involving sequential data.

Generative Adversarial Networks (GANs)

GANs are a unique class of deep-learning architectures used for generative modeling through unsupervised learning. They utilize an adversarial training process to generate new, realistic data. GANs involve two main components:

- Generator Network (G): This network creates synthetic data, aiming to make it indistinguishable from real data by matching the real data's distribution.

- Discriminator Network (D): This network plays the role of a critic, distinguishing between genuine and synthesized data.
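
The adversarial setup can be expressed through the two losses the networks try to minimize. The sketch below assumes the discriminator outputs the probability that a sample is real. It also assumes the commonly used non-saturating form of the generator loss, which is one of several variants. The function names and the probability values are illustrative.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the critic.

    d_real: discriminator's probability that a genuine sample is real.
    d_fake: discriminator's probability that a generated sample is real.
    """
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the critic is fooled."""
    return -math.log(d_fake)


# Early in training (hypothetical values): the critic spots fakes easily.
print(discriminator_loss(0.9, 0.1), generator_loss(0.1))

# At balance: the critic cannot tell real from generated, so it outputs 0.5.
print(discriminator_loss(0.5, 0.5), generator_loss(0.5))
```

The two losses pull in opposite directions on `d_fake`: the discriminator wants it low, the generator wants it high, which is what makes the training adversarial.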

Real-world Applications of GANs

- Entertainment and Media: In the film industry, GANs can be used to create realistic special effects or to generate new content for video games. They are also used in virtual reality to enhance the user experience by generating immersive environments.

- Fashion and Design: GANs assist in generating new clothing designs or in simulating how clothes would look on bodies of different shapes without actual physical production.

- Medical Imaging: They are used to generate medical images for training healthcare professionals without exposing patient data, thus adhering to privacy concerns.

Limitations

- Training Difficulty: GANs are notoriously difficult to train. The balance between the generator and discriminator can be delicate; if either the generator or discriminator becomes too good too quickly, the other may fail to learn effectively.

- Mode Collapse: A common issue where the generator starts producing a limited diversity of samples or even the same sample repeatedly, which can reduce the usefulness of the generated data.

- Evaluation Challenges: Assessing the performance of GANs is non-trivial as it often requires subjective human judgment, which can be inconsistent and unreliable.

Overall, GANs have shown remarkable success in generating realistic, high-quality outputs across various domains. Despite their challenges in training and evaluation, their potential to revolutionize fields such as media, design, and medicine makes them a fascinating area of ongoing research and application.

Hence, from the basic Perceptron to the more complex CNNs and RNNs, each type serves a unique purpose and has contributed significantly to the technological advancements we witness today.

How to Choose from Different Types of Neural Networks?

When choosing a type of neural network, several factors must be considered to ensure the selected model aligns with the specific needs of your application or the problem being addressed. These factors include:

Depth and Complexity of the Problem

Simpler problems may be handled by shallow networks like Perceptrons, while more complex problems, such as image or speech recognition, might necessitate deeper networks, such as CNNs or RNNs.

Type of Input Data

The nature of the input data is crucial in selecting the appropriate neural network. For instance, CNNs are well-suited for grid-like data, while RNNs are useful for sequential data.

Computational Resources

The availability of computational resources is another important consideration as the choice of a neural network might be constrained by the hardware available, influencing the decision towards more resource-efficient models.

Learning Task

Different neural networks are optimized for different types of learning tasks. For example, FNNs are widely used for straightforward prediction and classification problems, whereas networks like LSTMs are designed to excel in tasks that require an understanding of long-term dependencies in the data.

Data Availability

The amount and quality of available training data can influence the choice of neural networks. Deep learning models, in general, require large amounts of data to perform well. However, some models are more efficient in learning from limited data through techniques like transfer learning or data augmentation.

Hence, it is important to consider these factors when navigating through the different types of neural networks to ensure their architecture is utilized optimally.
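
As a rough summary of the data-type and learning-task factors above, the rules of thumb can be written as a small lookup. This is only a starting-point heuristic, not a definitive selection procedure. The function name, argument names, and category labels are hypothetical, and computational resources and data availability still have to be weighed separately.

```python
def suggest_architecture(data_kind, long_range=False, linearly_separable=False):
    """Map a coarse description of the problem to a candidate architecture.

    data_kind: "grid" (e.g. images), "sequence" (e.g. text, time series),
               or "tabular" for anything else.
    long_range: whether long-term dependencies in a sequence matter.
    linearly_separable: whether a simple linear boundary is expected to work.
    """
    if data_kind == "grid":
        return "CNN"
    if data_kind == "sequence":
        return "LSTM" if long_range else "RNN"
    if linearly_separable:
        return "Perceptron"
    return "FNN"


print(suggest_architecture("grid"))                          # CNN
print(suggest_architecture("sequence", long_range=True))     # LSTM
print(suggest_architecture("tabular"))                       # FNN
```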

What is the Future of Neural Networks?

The practicality of neural networks lies in their ability to process and learn from large sets of data, making them invaluable tools for solving complex problems that were once thought to be the exclusive domain of human intelligence.

Hence, the impact of neural networks on the future of industrial growth looks substantial. Their continued development and integration into various sectors is likely to lead to more efficient processes, new discoveries, and a deeper understanding of the world around us.