Key Takeaways

- Anthropic shipped /goal in Claude Code v2.1.139 on May 12, 2026 — set a completion condition once, and the agent keeps working across turns until it’s met

- OpenAI’s Codex CLI shipped a comparable /goal feature weeks earlier in April 2026, with persistent state that survives process restarts

- The real story isn’t who got there first — it’s that both frontier labs converged on the same interaction model independently, signaling a structural shift in how AI coding tools are built

Two of the most widely used AI coding tools shipped the same feature within weeks of each other.

Anthropic added /goal to Claude Code on May 12 with version 2.1.139. OpenAI shipped a comparable feature to Codex CLI in April. Neither team was copying the other — they arrived at the same design because the problem they were solving is identical.

AI coding assistants have been optimized for a one-prompt-one-response rhythm. That rhythm breaks down the moment a task requires more than a few turns to complete. The broader shift toward agentic AI — systems that pursue goals rather than respond to prompts — has been building for years, and /goal is the first widely-deployed mechanism to bring that model directly into a developer’s terminal.

/goal is the fix for that.

You define a completion condition — something like “all tests in test/auth pass and the lint step is clean” — and the agent keeps working until a small, fast evaluator model confirms the condition has been satisfied. No manual prompting to continue. No babysitting.

How do you keep Claude working until the job is done? Claude Code helps with this in a few ways, including one we shipped recently: /goal. pic.twitter.com/QtVPmwoKct

— ClaudeDevs (@ClaudeDevs) May 13, 2026

How Claude Code’s /goal Works

The mechanics are clean and deliberate.

Run /goal followed by the condition you want satisfied. After each turn, a lightweight evaluator model checks whether the condition holds. If it doesn’t, Claude starts another turn automatically instead of returning control to you. The goal clears once the condition is met.

Key things to know about the session behavior:

- One goal per session — a new /goal command replaces the active one

- Status indicator — a ◎ /goal active badge shows elapsed time and tokens spent while a goal is running

- Evaluator transparency — after each turn, the evaluator returns a short reason explaining why the condition is or isn’t met yet, visible in both the status view and the transcript

- Manual override — run /goal clear to cancel anytime, or /goal with no argument to check progress

What matters about the design is how Anthropic framed what /goal is actually for.

The official docs position it for “substantial work with a verifiable end state” — not vague tasks, not exploration. Work that already has a clear finish line.

Use cases Anthropic explicitly calls out:

- Migrating a module until every call site compiles and tests pass

- Implementing a design doc until all acceptance criteria hold

- Splitting a large file into focused modules until each is under a size budget

- Running through a labeled issue backlog until the queue is empty

That framing defines the right mental model: /goal is a control surface for work that can be verified, not a shortcut for tasks you haven’t fully defined.

Writing Conditions That Actually Work

This is where most people will get tripped up early on.

A condition that holds across many turns needs three things:

- One measurable end state — a test result, a build exit code, a file count, an empty queue

- A stated check — how Claude should prove it (“npm test exits 0”, “git status is clean”)

- Constraints that matter — anything that must not change along the way (“no other test file is modified”)

The condition can be up to 4,000 characters. You can also include a turn or time clause to bound how long a goal runs — “or stop after 20 turns” is a simple guardrail worth building into most conditions by default.

Writing effective /goal conditions is an extension of good prompt engineering. The same principles that make a standard prompt precise — specificity, clear success criteria, explicit constraints — apply here, but the stakes are higher because the agent will keep acting on a vague condition until it runs out of turns. If you’re newer to crafting structured instructions for LLMs, this primer on prompt engineering strategies covers the foundations well.

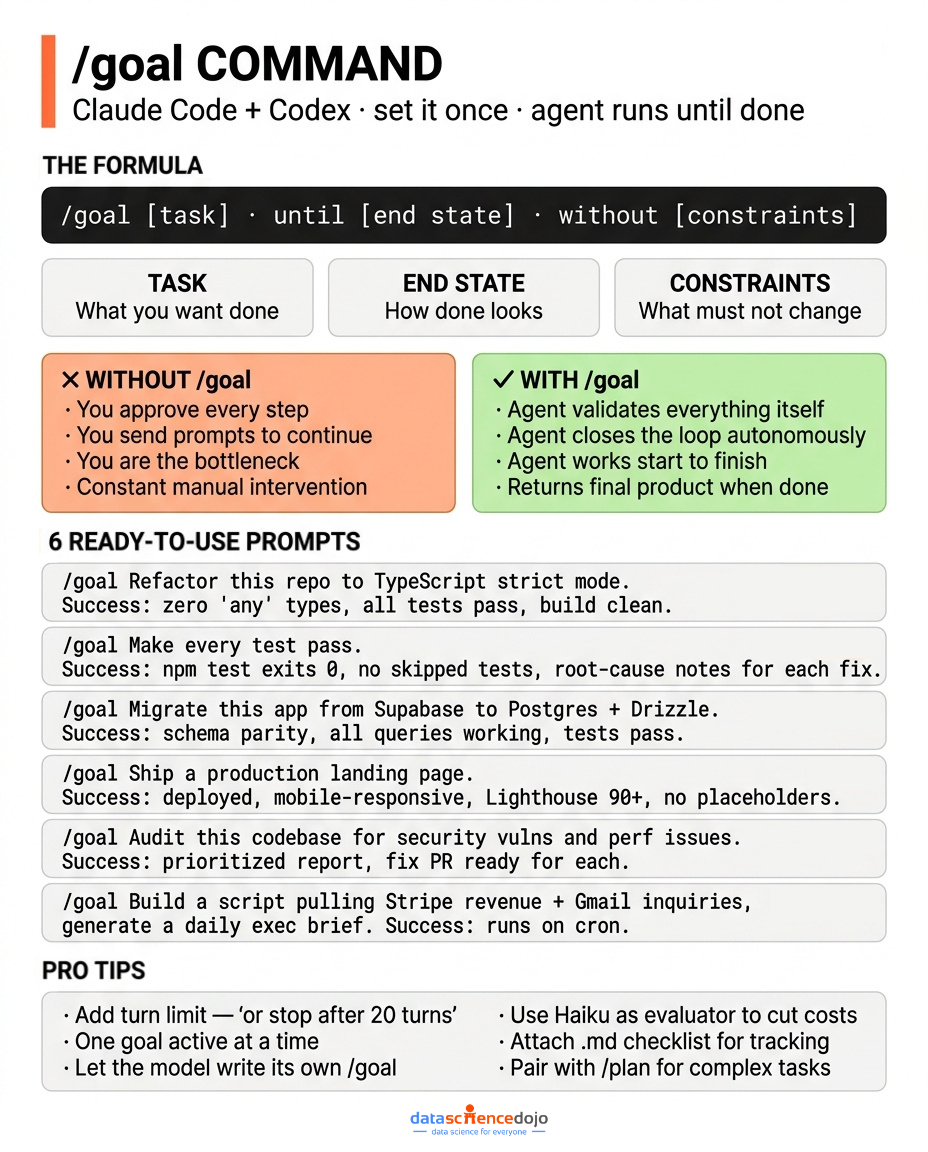

A few examples from the cheatsheets circulating on X that illustrate the pattern well:

- /goal Refactor this repo to TypeScript strict mode. Success: zero ‘any’ types, all tests pass, no functional regressions, build clean, summary of changes.

- /goal Make every test in this repo pass. Success: npm test exits 0, no skipped tests, root-cause notes for each fix, no test-mocking shortcuts.

- /goal Migrate this app from Supabase to Postgres + Drizzle. Success: schema parity, all queries working, seed data preserved, tests pass, migration guide written.

Each of those conditions has a clear binary outcome. The agent either hits it or it doesn’t — and the evaluator can tell the difference.

The Trust and Safety Model

/goal is deliberately gated.

The feature only runs in workspaces where the trust dialog has been accepted, because the evaluator is part of the hooks system. It’s also unavailable when disableAllHooks is set at any settings level, or when allowManagedHooksOnly is set in managed settings.

This isn’t a footnote — it tells you something about how Anthropic is thinking about autonomous workflows. The trust dialog is the boundary. Teams deploying Claude Code in managed environments need to account for this before building /goal into any pipeline.

Security becomes a first-order concern as agents run longer and touch more of your codebase unsupervised. The trust model here is also relevant for teams using Claude Code Remote Control, where the agent is running locally but being accessed from another device — a long /goal run in that context means your machine is executing code autonomously while you’re away from it.

For individual developers, the practical implication is simple: if /goal silently does nothing when you run it, check the trust settings first.

How Codex’s /goal Is Different

Codex shipped its version roughly a month earlier, and the key architectural difference is persistence.

Where Claude Code’s goal lives within an active session, Codex’s implementation is built on app-server APIs and runtime continuation. The agent can survive process restarts, reboots, and terminal crashes. You can pick up where you left off even if your session died mid-task.

Other meaningful differences:

- Checkpoint model — Codex defaults to “plan-mode nudges,” pausing at key decision points to confirm direction rather than running fully unattended. Full-auto mode is available via codex –approval-mode full-auto but isn’t the default.

- Setup — Claude Code: launch CLI, type /goal. Codex Desktop: Settings → Configuration → goals = true. Different surfaces, different onboarding friction.

- Multi-agent scope — Codex’s May 2026 release expanded MultiAgentV2 support, so multiple goals can be active across different environments, each tied to its own thread.

The philosophical difference between the two implementations is real.

Codex leans toward inline confirmation at decision points — the agent checks in before making consequential moves. Claude Code leans toward a blanket trust model — grant trust at the workspace level, then let it run.

Neither is wrong. They reflect different assumptions about who is using the tool and how much they want to stay in the loop during a long-running task.

The Formula Both Tools Share

Despite the architectural differences, the prompt structure that works is essentially the same across both tools.

The three-element formula:

/goal [do the work] until [measurable end state] without [constraints]

For more complex tasks, both tools benefit from an extended structure:

/goal [primary objective]

Context: [what the project is]

Success criteria: [measurable outcome 1] [outcome 2]

Constraints: [rule 1] [rule 2]

Checklist: [attach .md file for tracking]

Tips that apply regardless of which tool you’re using:

- One goal at a time — scope it tightly. A goal that tries to do too many things at once is harder for the evaluator to verify.

- Let the model write its own /goal — describe the task in plain language and ask Claude or Codex to generate the condition. The model often writes a tighter condition than a human would.

- Pair with /plan — run /goal → /plan → /goal clear for complex tasks where you want the agent to map the work before executing it.

- Attach a .md checklist — the agent can use it as a running log, which makes the evaluator’s job easier and gives you a readable audit trail.

- Add turn limits — “or stop after 20 turns” is a cheap safeguard against runaway sessions.

The Token Cost Risk Is Real

This is the part that doesn’t show up in the launch posts.

Neither Codex nor Claude Code currently has a native “set budget cap per goal” feature. A poorly scoped condition running across 50 turns with Sonnet as the evaluator model can cost significantly more than expected.

Part of what makes this worth understanding is the underlying model architecture. The /goal evaluator is itself a language model — a small one, but it’s running on every turn. If you’re using a larger model as the evaluator, costs compound fast. The shift toward using SLMs for evaluator-style tasks in agentic systems is exactly why tools like these tend to route lightweight verification work to smaller, cheaper models rather than the primary reasoning model.

Practical mitigations:

- Hardcode a turn limit directly into the condition — the single most effective safeguard

- Use Haiku as the evaluator model — evaluation speed and costs stay predictable; Sonnet as the evaluator spikes overhead fast

- Set platform-level budget alerts before kicking off any long-running goal

- Start with a dry run — test the condition on a small scope before pointing /goal at your entire codebase

The community is calling out token consumption as the main friction point right now. One widely shared take on X: “Already active in Claude Code and Codex — you need to use it now.” The enthusiasm is warranted. The cost awareness isn’t always there alongside it.

Comparing the Two Side by Side

| Claude Code | Codex CLI | |

|---|---|---|

| Shipped | May 12, 2026 (v2.1.139) | April 2026 |

| Persistence | Session-scoped | Survives restarts/crashes |

| Default approval mode | Trust dialog (workspace-level) | Plan-mode nudges (inline) |

| Full-auto mode | Auto mode (approve tool calls) | codex --approval-mode full-auto |

| Turn tracking | ◎ /goal active + evaluator reason |

Terminal title indicator |

| Multi-agent | One goal per session | Multiple goals across environments |

| Mobile | Yes (Claude Code Mobile) | Desktop CLI focus |

| Remote Control | Yes | N/A |

| Works with | Claude Code CLI, Remote Control, -p flag | Codex CLI, Codex Desktop |

The Actual Story: A Pattern Becoming Infrastructure

The more significant thing happening here is not the feature — it’s the convergence.

When two competing labs ship the same interaction primitive within the same month without coordinating, that’s independent validation. /goal is becoming the default way to express “keep working on this until it’s done” across agentic coding tools. The fact that it’s also appeared in Hermes reinforces that this is a cross-platform pattern, not a product feature.

This is a natural extension of how agentic LLMs have been evolving — from models that respond to prompts, to models that reason across steps, to models that now pursue defined objectives autonomously across an unbounded number of turns. /goal is essentially the user-facing surface of that architectural shift. That has real implications for how developers should think about workflows going forward:

- Tasks that previously required babysitting — multi-file refactors, migration jobs, test cleanup backlogs — are now first-class use cases with native tooling

- The “keep going” prompt is effectively deprecated. You define the condition once and hand it off.

- The session model of AI coding tools is shifting from discrete exchanges to durable objectives

Anthropic doubled Claude Code’s five-hour rate limits for paid plans in early May — a timing that makes more sense nowthat /goal is live and encouraging longer unsupervised runs. If those limits extend further, it signals Anthropic is prepared to bet on multi-hour autonomous workflows as a core product pattern.

The underlying reason both labs arrived here simultaneously is that the Model Context Protocol and the broader agentic tooling ecosystem have matured enough to make persistent, verifiable agent loops tractable. A year ago, the infrastructure to reliably evaluate conditions across many turns didn’t exist in a form that shipped cleanly to developers. It does now.

What Practitioners Should Do Right Now

If you’re on Claude Code:

- Update to v2.1.139 if you haven’t already

- Pick one task you currently babysit — anything where you keep prompting “continue” — and reframe it as a /goal condition

- Start with test-driven refactoring — passing tests make a natural, verifiable end state

- Add “or stop after 20 turns” to every condition until you’ve calibrated what your typical goals cost

If you’re on Codex:

- Enable goals in Settings → Configuration → goals = true

- Use the persistence layer for anything long enough that your terminal might close mid-task

- Keep plan-mode on by default unless you’re confident in the condition — it’s a useful safety net for new task types

If you’re evaluating both:

- Choose Codex if persistence across restarts matters for your workflow

- Choose Claude Code if you want cleaner Remote Control integration or mobile access

- Both work. The formula is the same. Start with whichever tool you’re already using.

What to Watch Next

A few signals worth tracking over the coming months:

- Rate limit expansion — Anthropic’s May rate limit doubling looks like preparation for longer /goal runs. Further increases would confirm autonomous workflows as a priority.

- Native budget caps — neither tool has this yet. The first to ship a “max spend per goal” control wins the trust of teams running this in production.

- Evaluator model choice — both tools currently handle evaluator model selection implicitly. Explicit developer control over which model evaluates the condition would meaningfully change the cost calculus.

- Cross-vendor standardization — if Hermes, Cursor, and other tools adopt the same /goal primitive, it may evolve into a shared spec rather than competing implementations.

The pattern is validated. The tooling will keep improving around it.

FAQ

What is the /goal command in Claude Code?

/goal is a command introduced in Claude Code v2.1.139 that lets you define a completion condition for an agent. After each turn, a lightweight evaluator model checks whether the condition is met. If not, Claude continues working automatically — no prompting required. The goal clears once the condition is satisfied.

How is Claude Code’s /goal different from Codex’s /goal?

The biggest difference is persistence. Codex’s implementation survives process restarts and terminal crashes using app-server APIs. Claude Code’s goal is session-scoped. Codex also defaults to inline confirmation checkpoints; Claude Code uses a workspace trust dialog as the access control layer.

What kinds of tasks is /goal designed for?

Tasks with a verifiable end state — migrating a module until every call site compiles, running tests until a suite passes, cleaning a backlog until it’s empty. It’s not well-suited for open-ended tasks without a clearly defined finish line.

Is /goal available in Claude Code Remote Control and mobile?

Yes. As of v2.1.139, /goal works in interactive mode, the -p flag, Remote Control, and Claude Code Mobile.

What’s the biggest risk with /goal?

Token cost. Neither Claude Code nor Codex has a native per-goal budget cap. A long-running goal with a large model as the evaluator can consume significantly more tokens than expected. Always include a turn limit in your condition and set platform-level budget alerts before running anything substantial.

Does /goal work the same way in both Claude Code and Codex?

The underlying pattern is the same — define a condition, let the agent work until it’s met — but the implementations differ in persistence, approval model, and setup. The three-element formula (/goal [task] until [end state] without [constraints]) works in both.